LDSA: Learning Dynamic Subtask Assignment in Cooperative Multi-Agent Reinforcement Learning

May 05, 2022

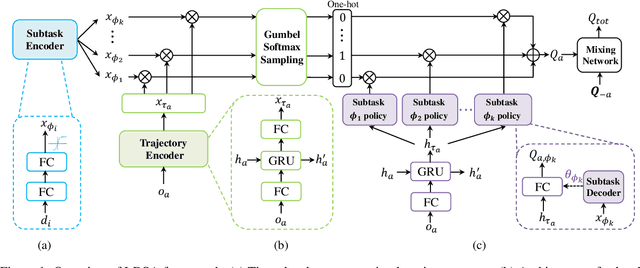

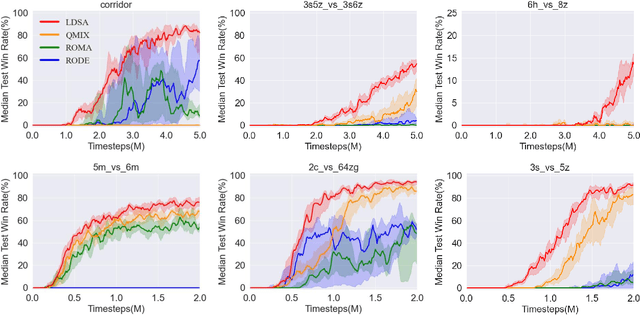

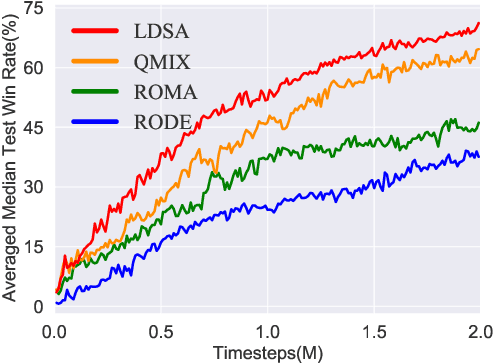

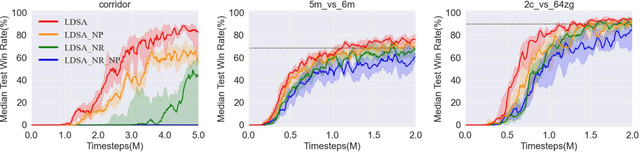

Cooperative multi-agent reinforcement learning (MARL) has made prominent progress in recent years. For training efficiency and scalability, most MARL algorithms make all agents share the same policy or value network. However, many complex multi-agent tasks require agents with a variety of specific abilities to handle different subtasks. Sharing parameters indiscriminately may lead to similar behaviors across all agents, which limits exploration efficiency and degrades final performance. To balance training complexity against the diversity of agents' behaviors, we propose a novel framework for learning dynamic subtask assignment (LDSA) in cooperative MARL. Specifically, we first introduce a subtask encoder that constructs a vector representation for each subtask from its identity. To assign agents to subtasks reasonably, we propose an ability-based subtask selection strategy that dynamically groups agents with similar abilities into the same subtask. We then condition the subtask policy on its representation, and agents handling the same subtask share their experiences to train that subtask policy. We further introduce two regularizers: one increases the representation difference between subtasks, and the other discourages agents from changing subtasks frequently, which stabilizes training. Empirical results show that LDSA learns reasonable and effective subtask assignments for better collaboration and significantly improves learning performance on the challenging StarCraft II micromanagement benchmark.
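The abstract names four mechanisms: a subtask encoder, ability-based subtask selection, subtask policies conditioned on a representation, and two regularizers. The sketch below illustrates how these pieces could fit together in plain, dependency-free Python. It is a toy under stated assumptions, not the authors' implementation. All function names are hypothetical. The assumptions are as follows. A bias-free linear layer over one-hot identities stands in for the subtask encoder. Each agent's "ability" is given as a fixed embedding vector, which in practice would presumably be learned from the agent's history. Selection scores are dot products between ability embeddings and subtask representations. The two regularizers are modeled as a negative mean pairwise distance between representations and a KL penalty between consecutive selection distributions.

```python
import math

def linear(weights, x):
    """Bias-free dense layer: `weights` is a list of rows (out_dim x in_dim)."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]

def subtask_representations(encoder_weights, num_subtasks):
    """Hypothetical subtask encoder: embed each one-hot subtask identity as a vector."""
    reps = []
    for k in range(num_subtasks):
        one_hot = [1.0 if i == k else 0.0 for i in range(num_subtasks)]
        reps.append(linear(encoder_weights, one_hot))
    return reps

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def select_subtasks(agent_embeddings, subtask_reps):
    """Score each agent against every subtask by dot product, then pick greedily.

    Agents whose (assumed) ability embeddings point in similar directions end up
    on the same subtask. Greedy argmax is used here only to keep the toy deterministic.
    """
    probs = []
    for h in agent_embeddings:
        logits = [sum(a * b for a, b in zip(h, z)) for z in subtask_reps]
        probs.append(softmax(logits))
    choices = [max(range(len(p)), key=p.__getitem__) for p in probs]
    return choices, probs

def group_agents(choices):
    """Map subtask index -> agents assigned to it (they would share that policy's data)."""
    groups = {}
    for agent, k in enumerate(choices):
        groups.setdefault(k, []).append(agent)
    return groups

def subtask_policy_input(observation, subtask_rep):
    """Condition a shared subtask policy on its representation by concatenation (assumed)."""
    return list(observation) + list(subtask_rep)

def diversity_loss(subtask_reps):
    """Negative mean pairwise distance; minimising it pushes representations apart."""
    n = len(subtask_reps)
    if n < 2:
        return 0.0
    dists = [math.dist(subtask_reps[i], subtask_reps[j])
             for i in range(n) for j in range(i + 1, n)]
    return -sum(dists) / len(dists)

def switching_penalty(prev_probs, curr_probs, eps=1e-8):
    """Mean KL(prev || curr) over agents; grows when selection distributions shift."""
    total = 0.0
    for p, q in zip(prev_probs, curr_probs):
        total += sum(pi * math.log((pi + eps) / (qi + eps)) for pi, qi in zip(p, q))
    return total / len(prev_probs)

# Toy usage: 3 subtasks with 2-dimensional representations and 3 agents.
reps = subtask_representations([[1.0, 0.0, -1.0], [0.0, 1.0, 0.0]], num_subtasks=3)
choices, probs = select_subtasks([[2.0, 0.1], [1.8, -0.2], [0.0, 3.0]], reps)
print(choices)                # [0, 0, 1]
print(group_agents(choices))  # {0: [0, 1], 1: [2]}
```

Agents 0 and 1 have similar embeddings, so they land on the same subtask and would train one shared subtask policy. Agent 2 is routed to a different subtask. A trainable selector would need a differentiable sampling step, such as a Gumbel-softmax, in place of the argmax. The exact choice used in LDSA is not stated in this abstract.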