KQA Pro: A Large Diagnostic Dataset for Complex Question Answering over Knowledge Base

Paper and Code

Jul 08, 2020

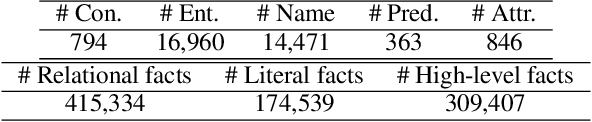

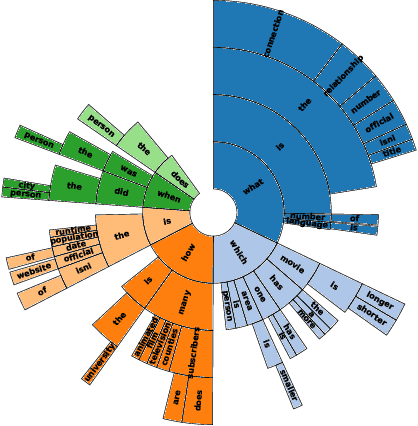

Complex question answering over knowledge base (Complex KBQA) is challenging because it requires the compositional reasoning capability. Existing benchmarks have three shortcomings that limit the development of Complex KBQA: 1) they only provide QA pairs without explicit reasoning processes; 2) questions are either generated by templates, leading to poor diversity, or on a small scale; and 3) they mostly only consider the relations among entities but not attributes. To this end, we introduce KQA Pro, a large-scale dataset for Complex KBQA. We generate questions, SPARQLs, and functional programs with recursive templates and then paraphrase the questions by crowdsourcing, giving rise to around 120K diverse instances. The SPARQLs and programs depict the reasoning processes in various manners, which can benefit a large spectrum of QA methods. We contribute a unified codebase and conduct extensive evaluations for baselines and state-of-the-arts: a blind GRU obtains 31.58\%, the best model achieves only 35.15\%, and humans top at 97.5\%, which offers great research potential to fill the gap.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge