Interactive Dual-Conformer with Scene-Inspired Mask for Soft Sound Event Detection


Dec 07, 2023

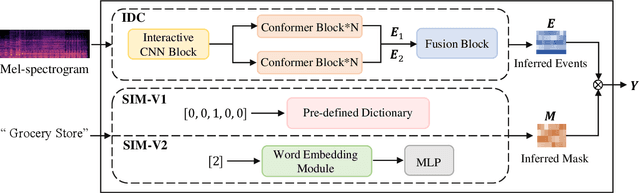

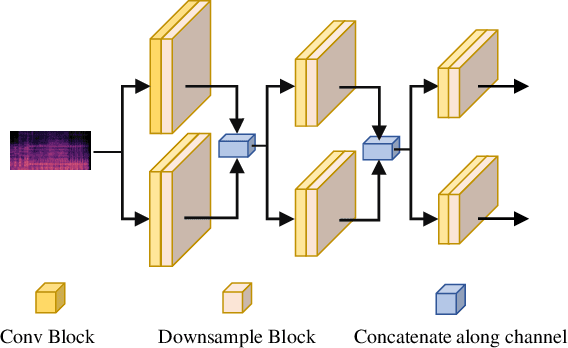

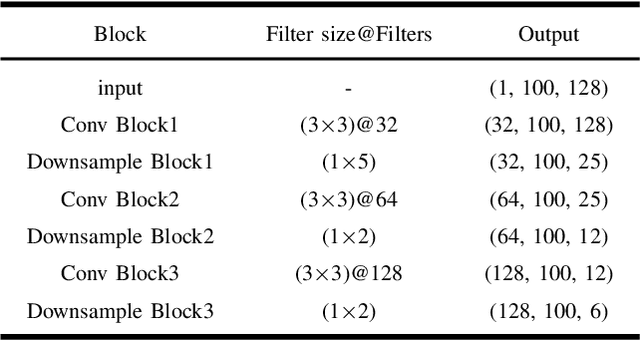

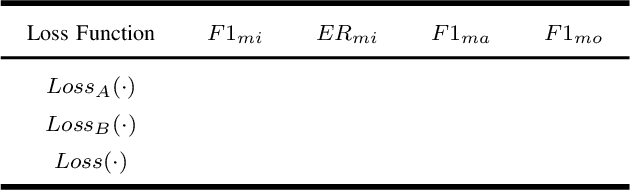

Traditional binary hard labels for sound event detection (SED) lack details about the complexity and variability of sound event distributions. Recently, a novel annotation workflow was proposed to generate fine-grained non-binary soft labels, resulting in a new real-life SED dataset named MAESTRO Real. In this paper, we first propose an interactive dual-conformer (IDC) module, in which a cross-interaction mechanism is applied to effectively exploit the information from soft labels. In addition, a novel scene-inspired mask (SIM) based on soft labels is incorporated for more precise SED predictions. The SIM is initially generated through a statistical approach, referred to as SIM-V1. However, the fixed artificial mask may mismatch the SED model, which limits its effectiveness. Therefore, we further propose SIM-V2, which employs a word embedding model for adaptive SIM estimation. Experimental results show that the proposed IDC module can effectively utilize the information from soft labels, and that integrating SIM-V1 further improves accuracy. In addition, the impact of different word embedding dimensions on SIM-V2 is explored, and the results show that an appropriate dimension enables SIM-V2 to outperform SIM-V1. In DCASE 2023 Challenge Task 4B, the proposed system achieved the top-ranking performance on the evaluation dataset of MAESTRO Real.
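
To make the idea of a statistically derived scene mask concrete, the following is a minimal sketch and not the authors' SIM-V1 implementation. The abstract does not specify the statistic used, so this example assumes a simple rule: an event class is allowed in a scene if its soft label ever exceeds a threshold in the training frames of that scene. The function names `build_scene_mask` and `apply_scene_mask`, the `threshold` parameter, and the toy data are all hypothetical.

```python
import numpy as np


def build_scene_mask(soft_labels, scene_ids, num_scenes, threshold=0.0):
    """Derive a binary (num_scenes, num_classes) mask from soft labels.

    soft_labels: (num_frames, num_classes) array with values in [0, 1].
    scene_ids:   (num_frames,) integer scene index of each frame.
    Assumption: a class is "allowed" in a scene if its maximum soft label
    over that scene's frames is greater than `threshold`.
    """
    num_classes = soft_labels.shape[1]
    mask = np.zeros((num_scenes, num_classes), dtype=np.float32)
    for scene in range(num_scenes):
        frames = soft_labels[scene_ids == scene]
        if frames.size == 0:
            # No training frames for this scene: leave every class unmasked.
            mask[scene] = 1.0
            continue
        mask[scene] = (frames.max(axis=0) > threshold).astype(np.float32)
    return mask


def apply_scene_mask(predictions, scene_ids, mask):
    """Zero out predictions for classes never observed in the given scene."""
    return predictions * mask[scene_ids]


# Toy data: 4 training frames, 3 event classes, 2 scenes.
soft_labels = np.array([
    [0.8, 0.0, 0.1],
    [0.6, 0.0, 0.0],
    [0.0, 0.7, 0.0],
    [0.0, 0.9, 0.2],
])
train_scene_ids = np.array([0, 0, 1, 1])
mask = build_scene_mask(soft_labels, train_scene_ids, num_scenes=2)

# Two model outputs, one from each scene.
predictions = np.array([
    [0.5, 0.4, 0.3],
    [0.2, 0.6, 0.1],
])
masked = apply_scene_mask(predictions, np.array([0, 1]), mask)
print(mask)
print(masked)
```

A fixed mask of this kind is computed once from label statistics and never adapts to the model, which is the limitation the abstract cites as motivation for the learned SIM-V2 variant.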
