In Search of Lost Online Test-time Adaptation: A Survey

Paper and Code

Oct 31, 2023

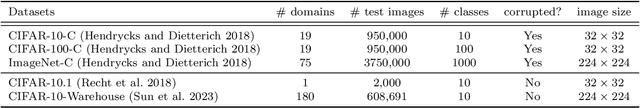

In this paper, we present a comprehensive survey on online test-time adaptation (OTTA), a paradigm focused on adapting machine learning models to novel data distributions upon batch arrival. Despite the proliferation of OTTA methods recently, the field is mired in issues like ambiguous settings, antiquated backbones, and inconsistent hyperparameter tuning, obfuscating the real challenges and making reproducibility elusive. For clarity and a rigorous comparison, we classify OTTA techniques into three primary categories and subject them to benchmarks using the potent Vision Transformer (ViT) backbone to discover genuinely effective strategies. Our benchmarks span not only conventional corrupted datasets such as CIFAR-10/100-C and ImageNet-C but also real-world shifts embodied in CIFAR-10.1 and CIFAR-10-Warehouse, encapsulating variations across search engines and synthesized data by diffusion models. To gauge efficiency in online scenarios, we introduce novel evaluation metrics, inclusive of FLOPs, shedding light on the trade-offs between adaptation accuracy and computational overhead. Our findings diverge from existing literature, indicating: (1) transformers exhibit heightened resilience to diverse domain shifts, (2) the efficacy of many OTTA methods hinges on ample batch sizes, and (3) stability in optimization and resistance to perturbations are critical during adaptation, especially when the batch size is 1. Motivated by these insights, we pointed out promising directions for future research. The source code will be made available.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge