IMFNet: Interpretable Multimodal Fusion for Point Cloud Registration

Paper and Code

Nov 18, 2021

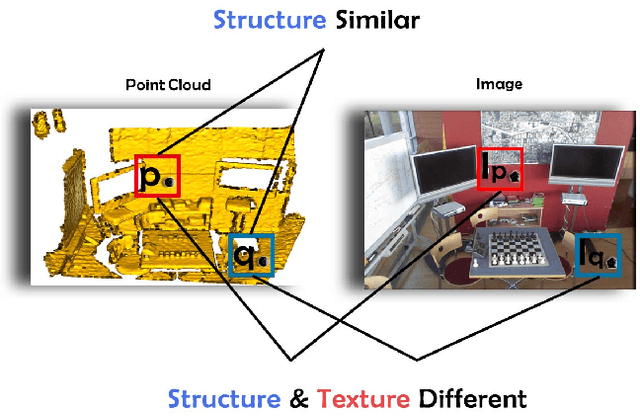

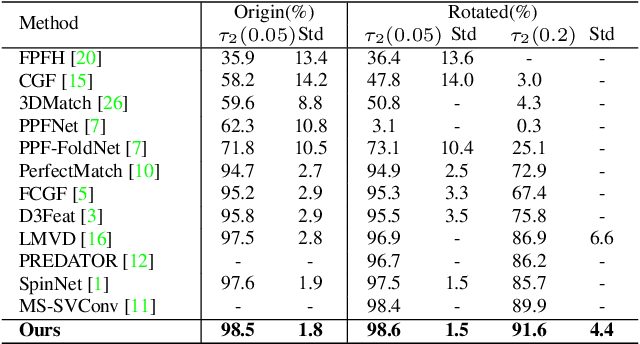

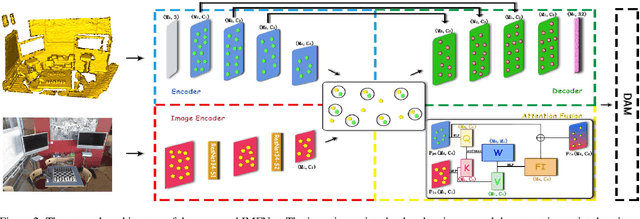

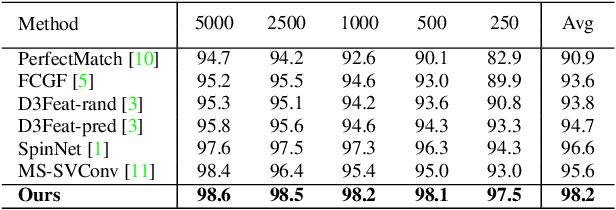

The existing state-of-the-art point descriptor relies on structure information only, which omit the texture information. However, texture information is crucial for our humans to distinguish a scene part. Moreover, the current learning-based point descriptors are all black boxes which are unclear how the original points contribute to the final descriptor. In this paper, we propose a new multimodal fusion method to generate a point cloud registration descriptor by considering both structure and texture information. Specifically, a novel attention-fusion module is designed to extract the weighted texture information for the descriptor extraction. In addition, we propose an interpretable module to explain the original points in contributing to the final descriptor. We use the descriptor element as the loss to backpropagate to the target layer and consider the gradient as the significance of this point to the final descriptor. This paper moves one step further to explainable deep learning in the registration task. Comprehensive experiments on 3DMatch, 3DLoMatch and KITTI demonstrate that the multimodal fusion descriptor achieves state-of-the-art accuracy and improve the descriptor's distinctiveness. We also demonstrate that our interpretable module in explaining the registration descriptor extraction.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge