Hierarchical 3D fully convolutional networks for multi-organ segmentation


Apr 21, 2017

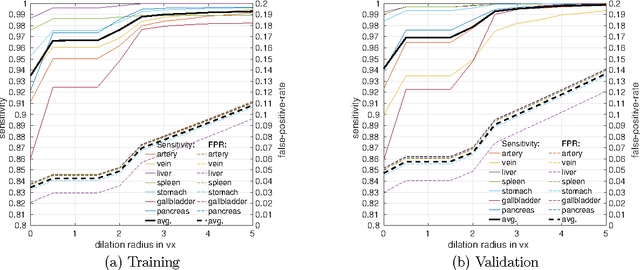

Recent advances in 3D fully convolutional networks (FCNs) have made it feasible to produce dense voxel-wise predictions of full volumetric images. In this work, we show that a multi-class 3D FCN trained on manually labeled CT scans of seven abdominal structures (artery, vein, liver, spleen, stomach, gallbladder, and pancreas) can achieve competitive segmentation results while avoiding the need for handcrafted features or organ-specific models. To this end, we propose a two-stage, coarse-to-fine approach. In the first stage, an FCN is trained to roughly delineate the organs of interest; it sees only the $\sim$40% of voxels that lie within a simple, automatically generated binary mask of the patient's body. We then use the first-stage predictions to define a candidate region on which a second FCN is trained. This step reduces the number of voxels the second FCN has to classify to $\sim$10% while maintaining a high recall of $>$99%, so the second-stage FCN can focus on a more detailed segmentation of the organs. We use training and validation sets of 281 and 50 clinical CT images, respectively. Our hierarchical approach improves the Dice score by 7.5 percentage points per organ on average on our validation set. We further test our models on a completely unseen data collection, acquired at a different hospital, that includes 150 CT scans with three anatomical labels (liver, spleen, and pancreas). For challenging organs such as the pancreas, our hierarchical approach improves the mean Dice score from 68.5% to 82.2%, achieving the highest reported average score on this dataset.
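The abstract does not say exactly how the body mask or the second-stage candidate region is built. The sketch below is a minimal NumPy illustration under stated assumptions, not the authors' implementation: the body mask is a plain intensity threshold (the -500 HU cutoff is hypothetical), and the candidate region is the union of all first-stage organ predictions, dilated by a hypothetical 2-voxel margin and clipped to the body mask. The helper names `body_mask`, `dilate`, `candidate_region`, `candidate_recall`, and `dice` are all illustrative. The sketch also computes the two quantities the abstract reports: the recall of the candidate region and the per-organ Dice score.

```python
import numpy as np


def body_mask(ct_hu: np.ndarray, threshold_hu: float = -500.0) -> np.ndarray:
    # Assumption: voxels denser than roughly -500 HU belong to the patient's body.
    return ct_hu > threshold_hu


def dilate(mask: np.ndarray, iterations: int = 1) -> np.ndarray:
    # 6-connected (face-neighbour) binary dilation in NumPy only. The False
    # padding keeps np.roll from wrapping voxels around the volume edges.
    out = mask.astype(bool)
    for _ in range(iterations):
        padded = np.pad(out, 1, mode="constant", constant_values=False)
        grown = out.copy()
        for axis in range(3):
            for shift in (-1, 1):
                grown |= np.roll(padded, shift, axis=axis)[1:-1, 1:-1, 1:-1]
        out = grown
    return out


def candidate_region(stage1_labels: np.ndarray, body: np.ndarray, margin: int = 2) -> np.ndarray:
    # Hypothetical rule: union of every organ the first-stage FCN predicts,
    # grown by `margin` voxels and restricted to the body mask. A second-stage
    # model would only classify voxels where this mask is True.
    return dilate(stage1_labels > 0, margin) & body


def candidate_recall(candidate: np.ndarray, gt_labels: np.ndarray) -> float:
    # Fraction of ground-truth organ voxels that fall inside the candidate region.
    fg = gt_labels > 0
    if fg.sum() == 0:
        return 1.0
    return float((candidate & fg).sum() / fg.sum())


def dice(pred_labels: np.ndarray, gt_labels: np.ndarray, label: int) -> float:
    # Dice = 2|A ∩ B| / (|A| + |B|) for one organ label; 1.0 if both are empty.
    a = pred_labels == label
    b = gt_labels == label
    denom = a.sum() + b.sum()
    return 1.0 if denom == 0 else float(2.0 * (a & b).sum() / denom)


if __name__ == "__main__":
    # Synthetic 20^3 volume: air everywhere, a soft-tissue "body" box, and one
    # "organ" (label 1) that the first stage predicts one voxel out of place.
    ct = np.full((20, 20, 20), -1000.0)
    ct[2:18, 2:18, 2:18] = 40.0
    gt = np.zeros(ct.shape, dtype=np.int64)
    gt[8:12, 8:12, 8:12] = 1
    stage1 = np.zeros_like(gt)
    stage1[9:13, 8:12, 8:12] = 1

    body = body_mask(ct)
    cand = candidate_region(stage1, body, margin=2)
    print("body fraction of volume:", body.mean())
    print("candidate fraction of body:", cand.sum() / body.sum())
    print("candidate recall:", candidate_recall(cand, gt))
    print("stage-1 Dice (label 1):", dice(stage1, gt, 1))
```

On real scans, the margin trades off the two numbers the abstract cites: a larger margin pushes recall toward 100% but admits more voxels into the second stage.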
