Graphs as Tools to Improve Deep Learning Methods

Paper and Code

Oct 08, 2021

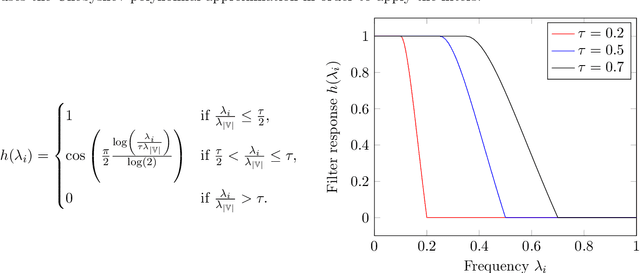

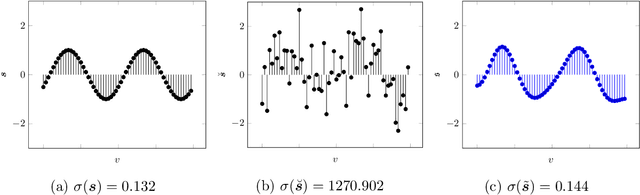

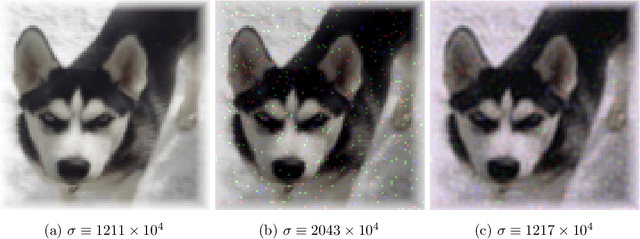

In recent years, deep neural networks (DNNs) have risen sharply in popularity. Although they achieve state-of-the-art results on many machine learning challenges, they still suffer from several limitations. For example, DNNs require large amounts of training data, which may not be available in some practical applications. In addition, small perturbations added to the inputs can cause DNNs to misclassify. DNNs are also viewed as black boxes, and their decisions are often criticized for their lack of interpretability. In this chapter, we review recent works that use graphs as tools to improve deep learning methods. These graphs are defined with respect to a specific layer in a deep learning architecture: their vertices represent distinct samples, and their edges depend on the similarity of the corresponding intermediate representations. These graphs can then be leveraged using various methodologies, many of which build on graph signal processing. The chapter is composed of four main parts: tools for visualizing intermediate layers in a DNN, denoising data representations, optimizing graph objective functions, and regularizing the learning process.
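The graph construction described in the abstract can be illustrated with a minimal sketch. The abstract does not specify the similarity measure or how edges are selected, so the choices here are assumptions made for illustration only: cosine similarity, a symmetric k-nearest-neighbor graph, the combinatorial Laplacian L = D - A, and label smoothness measured as trace(SᵀLS) for a one-hot label signal S. The function names `knn_similarity_graph` and `label_smoothness` are hypothetical, and the random `features` array stands in for the intermediate representations of a real DNN layer.

```python
import numpy as np


def knn_similarity_graph(features, k=2):
    """Symmetric k-NN cosine-similarity adjacency matrix (assumed construction)."""
    # Normalize rows so that the dot product equals the cosine similarity.
    norms = np.linalg.norm(features, axis=1, keepdims=True)
    unit = features / np.maximum(norms, 1e-12)
    sim = unit @ unit.T
    np.fill_diagonal(sim, -np.inf)  # exclude self-loops from the neighbor search

    n = sim.shape[0]
    adj = np.zeros((n, n))
    # Keep the k most similar neighbors of each vertex (one vertex per sample).
    nearest = np.argsort(-sim, axis=1)[:, :k]
    rows = np.repeat(np.arange(n), k)
    cols = nearest.ravel()
    adj[rows, cols] = np.clip(sim[rows, cols], 0.0, None)  # drop negative weights
    # Symmetrize: keep an edge if either endpoint selected it.
    return np.maximum(adj, adj.T)


def label_smoothness(adj, labels, num_classes):
    """trace(S^T L S) for a one-hot label signal S and Laplacian L = D - A."""
    laplacian = np.diag(adj.sum(axis=1)) - adj
    signal = np.eye(num_classes)[labels]
    return float(np.trace(signal.T @ laplacian @ signal))


rng = np.random.default_rng(0)
# Stand-in for intermediate representations: two well-separated clusters.
features = np.vstack([
    rng.normal(loc=[5.0, 0.0], scale=0.1, size=(4, 2)),
    rng.normal(loc=[0.0, 5.0], scale=0.1, size=(4, 2)),
])
labels = np.array([0, 0, 0, 0, 1, 1, 1, 1])

adj = knn_similarity_graph(features, k=2)
smoothness = label_smoothness(adj, labels, num_classes=2)

# Labels that ignore the cluster structure vary more along the edges.
mixed_labels = np.array([0, 1, 0, 1, 0, 1, 0, 1])
mixed_smoothness = label_smoothness(adj, mixed_labels, num_classes=2)
print(smoothness, mixed_smoothness)
```

In this sketch, a lower value means that samples connected by an edge tend to share a class, which is the kind of quantity a graph signal processing approach could visualize, optimize, or regularize at a given layer.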