Generating faces for affect analysis


Nov 12, 2018

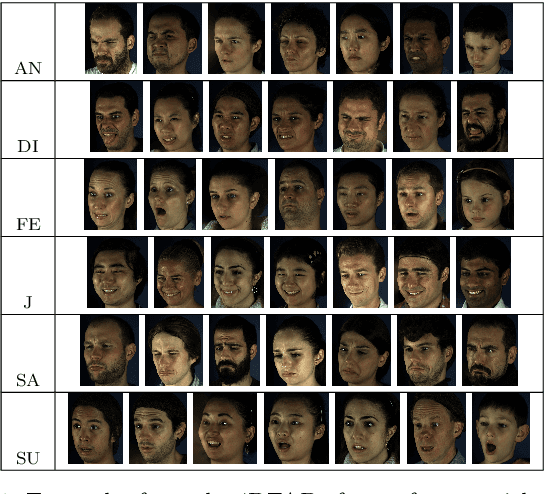

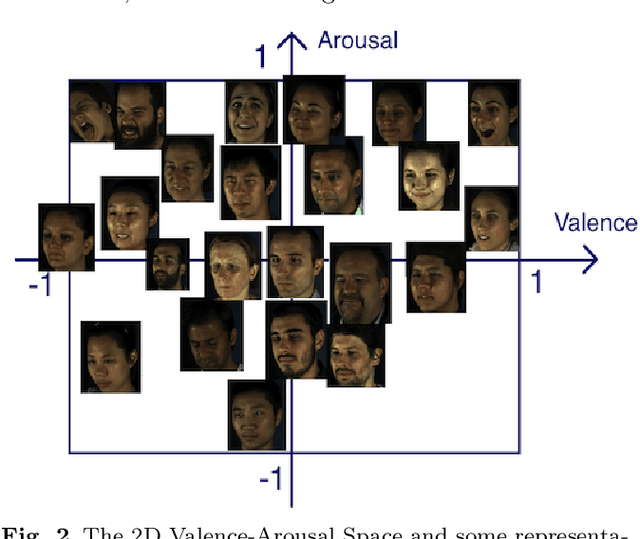

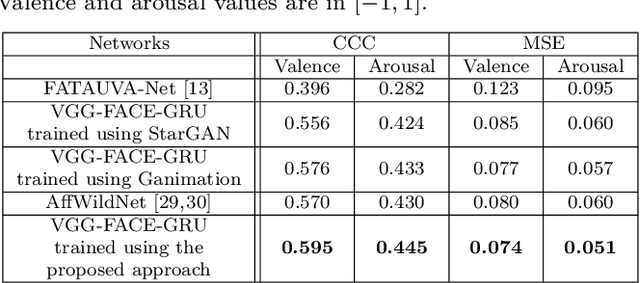

This paper presents a novel approach for synthesizing facial affect, either categorical, in terms of the six basic expressions (anger, disgust, fear, happiness, sadness and surprise), or dimensional, in terms of valence (how positive or negative an emotion is) and arousal (how strongly the emotion is activated). In the valence-arousal (VA) case, the system is built on VA annotations of 600,000 frames from the 4DFAB database; in the categorical case, it is based on apex frames selected from posed expression sequences in 4DFAB. The system takes as input: i) the affect to be synthesized, given either as a basic facial expression or as a valence-arousal pair, and ii) a neutral 2D image of the person on whom that affect will be synthesized. The approach proceeds in three steps. First, based on the desired emotional state, a set of 3D facial meshes is obtained from the 4DFAB database and used to build a blendshape model that generates the new facial affect. Second, to synthesize this affect on the neutral 2D image, a 3D Morphable Model (3DMM) is fitted to the image and the reconstructed face is deformed to produce the target expression. Finally, the new face is rendered back into the original image. Qualitative experiments show that realistic images are generated when the neutral image is drawn from a variety of well-known lab-controlled or in-the-wild databases, including Aff-Wild, RECOLA, AffectNet, AFEW, Multi-PIE, AFEW-VA, BU-3DFE, Bosphorus and RAF-DB. Quantitative experiments are also reported in which deep neural networks for affect recognition are trained on each of these databases, using the generated images for data augmentation; with this augmentation, the networks achieve better performance than the current state of the art.
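
The blendshape step can be illustrated with a minimal NumPy sketch. It assumes that the 4DFAB meshes are already in dense correspondence with a neutral template (same vertex count and ordering) and that each expressive mesh carries a (valence, arousal) label. The neighbour-selection rule (the k nearest labels in VA space, weighted by inverse distance) and the function names `select_neighbours` and `blend_expression` are hypothetical illustrations, not the paper's exact procedure; only the delta-blendshape formula, a neutral shape plus a weighted sum of expression offsets, is the standard technique.

```python
import numpy as np


def select_neighbours(va_labels, target_va, k=3):
    """Pick the k meshes whose (valence, arousal) labels are closest to target_va.

    Hypothetical rule: weights are inverse Euclidean distances in VA space,
    normalised to sum to one.
    """
    dists = np.linalg.norm(va_labels - np.asarray(target_va, dtype=float), axis=1)
    idx = np.argsort(dists)[:k]
    inv = 1.0 / (dists[idx] + 1e-8)  # small epsilon avoids division by zero on exact matches
    return idx, inv / inv.sum()


def blend_expression(neutral, expressive, weights):
    """Delta-blendshape model: neutral + sum_k w_k * (expressive_k - neutral).

    neutral:    (V, 3) vertices of the neutral template
    expressive: (K, V, 3) expressive meshes in correspondence with `neutral`
    weights:    (K,) blend weights
    """
    deltas = expressive - neutral[None, :, :]               # per-mesh expression offsets
    return neutral + np.tensordot(weights, deltas, axes=1)  # weighted sum -> (V, 3)


# Toy example: random data stands in for 4DFAB meshes and their VA labels.
rng = np.random.default_rng(0)
neutral = rng.normal(size=(5, 3))
expressive = neutral[None] + rng.normal(scale=0.1, size=(4, 5, 3))
va_labels = np.array([[0.8, 0.6], [-0.7, 0.5], [0.1, -0.2], [0.7, 0.7]])

idx, w = select_neighbours(va_labels, target_va=(0.78, 0.62), k=2)
new_face = blend_expression(neutral, expressive[idx], w)
print(idx, w, new_face.shape)
```

Inverse-distance weighting keeps the blend close to the annotated expressions nearest the requested VA point; for a categorical request, one could instead average the apex meshes of the chosen expression with equal weights.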

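The fitting and deformation step can be sketched in the same hedged way. The example uses a linear shape model (a mean shape plus a weighted sum of basis components) and, as a simplifying assumption, treats the weak-perspective camera as known, so the shape coefficients follow from a ridge-regularized linear least-squares fit to 2D landmarks. Full 3DMM fitting also estimates pose, texture and illumination and is typically iterative, so this is not the paper's fitting procedure. The expression is then transferred by adding a template's expression offset to the fitted face, assuming a shared vertex ordering. The function names `weak_perspective`, `fit_shape_coeffs` and `deform_to_expression`, and all data, are hypothetical.

```python
import numpy as np


def weak_perspective(points, scale, rotation, translation):
    """Project (V, 3) points to (V, 2) as scale * R[:2] @ X + t."""
    return scale * points @ rotation[:2].T + translation


def fit_shape_coeffs(landmarks_2d, mean, basis, scale, rotation, translation, reg=1e-3):
    """Ridge least-squares fit of linear shape coefficients to 2D landmarks.

    Simplifying assumption: the camera (scale, rotation, translation) is known,
    so the projected shape is linear in the coefficients.
    mean:  (V, 3) mean shape at the landmark vertices
    basis: (K, V, 3) shape components at the landmark vertices
    """
    proj = scale * rotation[:2]                                  # (2, 3) linear projection
    # Column k holds the flattened 2D projection of basis component k.
    A = np.stack([(b @ proj.T).ravel() for b in basis], axis=1)  # (2V, K)
    residual = (landmarks_2d - weak_perspective(mean, scale, rotation, translation)).ravel()
    K = basis.shape[0]
    return np.linalg.solve(A.T @ A + reg * np.eye(K), A.T @ residual)


def deform_to_expression(fitted_neutral, template_neutral, template_expressive):
    """Add the template's expression offset to the fitted face (shared vertex order assumed)."""
    return fitted_neutral + (template_expressive - template_neutral)


# Synthetic check: generate landmarks from known coefficients, then recover them.
rng = np.random.default_rng(1)
V, K = 10, 3
mean = rng.normal(size=(V, 3))
basis = rng.normal(size=(K, V, 3))
alpha_true = np.array([0.5, -1.0, 0.3])
theta = 0.3  # rotation about the y axis, in radians
rotation = np.array([[np.cos(theta), 0.0, np.sin(theta)],
                     [0.0, 1.0, 0.0],
                     [-np.sin(theta), 0.0, np.cos(theta)]])
scale, translation = 1.2, np.array([0.1, -0.2])

shape = mean + np.tensordot(alpha_true, basis, axes=1)
landmarks = weak_perspective(shape, scale, rotation, translation)
alpha = fit_shape_coeffs(landmarks, mean, basis, scale, rotation, translation, reg=1e-8)
fitted = mean + np.tensordot(alpha, basis, axes=1)

# Hypothetical expression template: a neutral mesh and an expressive version of it.
template_neutral = rng.normal(size=(V, 3))
template_expressive = template_neutral + rng.normal(scale=0.05, size=(V, 3))
expressive_face = deform_to_expression(fitted, template_neutral, template_expressive)
print(np.round(alpha, 4), expressive_face.shape)
```

With noise-free synthetic landmarks the recovered coefficients match `alpha_true` closely; real images add landmark noise, unknown pose and occlusion, which is why practical fitting pipelines regularize more strongly and alternate between pose and shape estimation.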