Fusing Visuo-Tactile Perception into Kernelized Synergies for Robust Grasping and Fine Manipulation of Non-rigid Objects


Sep 15, 2021

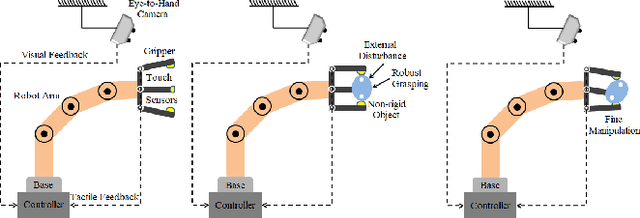

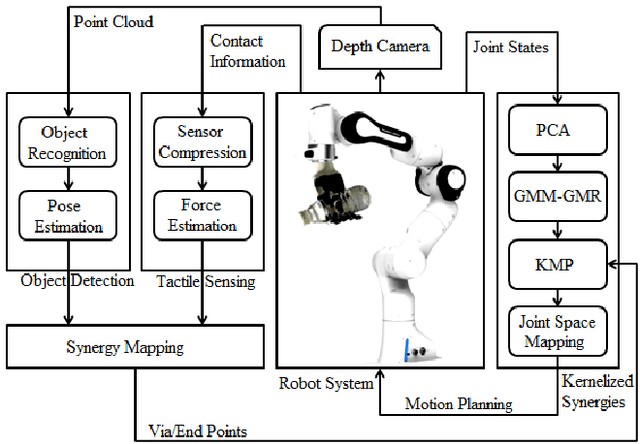

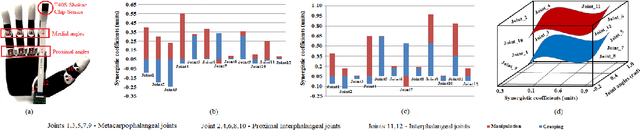

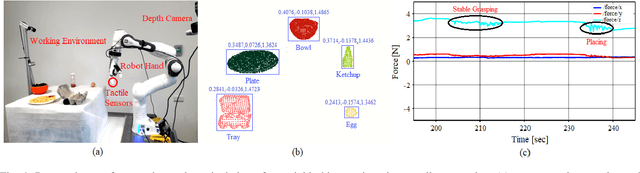

Handling non-rigid objects with robot hands necessitates a framework that incorporates not only human-level dexterity and cognition but also multi-sensory information and system dynamics for robust and fine interactions. In this research, our previously developed kernelized synergies framework, inspired by the human behaviour of reusing the same subspace for grasping and manipulation, is augmented with visuo-tactile perception for autonomous and flexible adaptation to unknown objects. To detect objects and estimate their poses, a simplified visual pipeline using the RANSAC algorithm with Euclidean clustering and an SVM classifier is employed. To modulate interaction efforts while grasping and manipulating non-rigid objects, tactile feedback from a T40S shokac chip sensor, which generates 3D force information, is incorporated. Moreover, different kernel functions are examined in the kernelized synergies framework to evaluate its performance and potential with respect to task reproducibility, execution, generalization and synergistic re-usability. Experiments performed with a robot arm-hand system validate the capability and usability of the upgraded framework for stably grasping and dexterously manipulating non-rigid objects.
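The abstract names RANSAC and Euclidean clustering as the geometric front end of the visual pipeline. The sketch below shows one common way these two steps are combined for tabletop scenes, assuming a NumPy point cloud in metres: RANSAC fits and removes the dominant support plane, and Euclidean clustering groups the remaining points into object candidates. All function names, thresholds and the synthetic scene are hypothetical choices for illustration, not the authors' implementation, and the SVM classification and pose estimation stages are omitted.

```python
import numpy as np


def ransac_plane(points, dist_thresh=0.01, iters=200, seed=0):
    """Fit a plane n.x + d = 0 by RANSAC; return (normal, d, inlier_mask)."""
    rng = np.random.default_rng(seed)
    best_mask = np.zeros(len(points), dtype=bool)
    best_normal, best_d = None, None
    for _ in range(iters):
        # Sample three distinct points and build the plane through them.
        p0, p1, p2 = points[rng.choice(len(points), 3, replace=False)]
        normal = np.cross(p1 - p0, p2 - p0)
        norm = np.linalg.norm(normal)
        if norm < 1e-12:  # collinear sample, no unique plane
            continue
        normal = normal / norm
        d = -normal @ p0
        # Inliers are points whose distance to the plane is below the threshold.
        mask = np.abs(points @ normal + d) < dist_thresh
        if mask.sum() > best_mask.sum():
            best_mask, best_normal, best_d = mask, normal, d
    return best_normal, best_d, best_mask


def euclidean_clusters(points, tol=0.02, min_size=10):
    """Region-grow clusters of points linked by gaps smaller than `tol`.

    Uses a naive O(n^2) neighbour search, adequate for small clouds.
    Returns a list of index arrays; clusters below `min_size` are dropped.
    """
    visited = np.zeros(len(points), dtype=bool)
    clusters = []
    for start in range(len(points)):
        if visited[start]:
            continue
        visited[start] = True
        queue, members = [start], []
        while queue:
            i = queue.pop()
            members.append(i)
            dists = np.linalg.norm(points - points[i], axis=1)
            neighbours = np.flatnonzero((dists < tol) & ~visited)
            visited[neighbours] = True
            queue.extend(neighbours.tolist())
        if len(members) >= min_size:
            clusters.append(np.sort(np.array(members)))
    return clusters


def segment_objects(cloud, plane_thresh=0.01, tol=0.02, min_size=10):
    """Remove the dominant (table) plane, then cluster the remaining points."""
    _, _, on_plane = ransac_plane(cloud, plane_thresh)
    rest = cloud[~on_plane]
    return [rest[idx] for idx in euclidean_clusters(rest, tol, min_size)]


def synthetic_scene(seed=1):
    """Hypothetical test scene: a flat 30x30 table grid plus two small blobs."""
    rng = np.random.default_rng(seed)
    xs, ys = np.meshgrid(np.linspace(0, 0.5, 30), np.linspace(0, 0.5, 30))
    table = np.column_stack([xs.ravel(), ys.ravel(), np.zeros(xs.size)])
    obj_a = rng.normal([0.1, 0.1, 0.05], 0.005, size=(50, 3))
    obj_b = rng.normal([0.4, 0.4, 0.05], 0.005, size=(50, 3))
    return np.vstack([table, obj_a, obj_b])


if __name__ == "__main__":
    for k, obj in enumerate(segment_objects(synthetic_scene())):
        print(f"object {k}: {len(obj)} points, centroid {obj.mean(axis=0).round(3)}")
```

Each returned cluster is a candidate object; in a pipeline like the one the abstract describes, these candidates would then be passed to a classifier for labelling. A real system would typically replace the O(n^2) search with a k-d tree.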
