From Face to Natural Image: Learning Real Degradation for Blind Image Super-Resolution


Oct 03, 2022

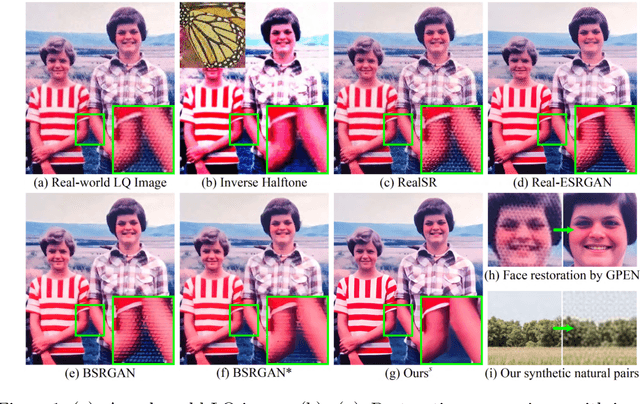

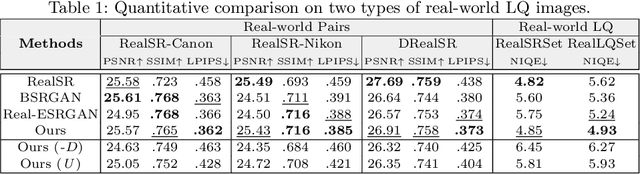

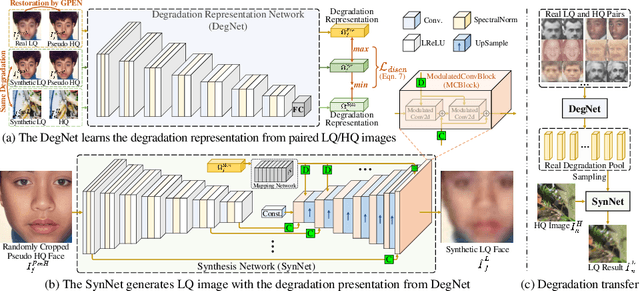

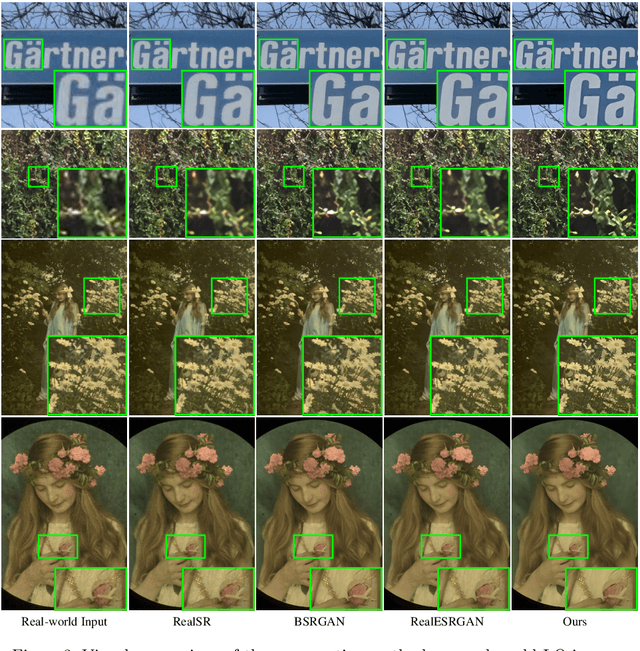

Designing proper training pairs is critical for super-resolving real-world low-quality (LQ) images, but it is difficult either to acquire paired ground-truth high-quality (HQ) images or to synthesize photo-realistic degraded observations. Recent works mainly circumvent this problem by simulating the degradation with handcrafted or estimated degradation parameters. However, existing synthetic degradation models are incapable of modeling complicated real degradation types, which limits their improvement in such scenarios, e.g., old photos. Notably, face images, which undergo the same degradation process as natural images, can be robustly restored with photo-realistic textures by exploiting their specific structural priors. In this work, we use these real-world LQ face images and their restored HQ counterparts to model the complex real degradation (a network we call ReDegNet), and then transfer it to HQ natural images to synthesize realistic LQ counterparts. Specifically, we take the paired HQ and LQ face images as inputs to explicitly predict degradation-aware, content-independent representations, which control the generation of degraded images. Subsequently, we transfer these real degradation representations from face images to natural images to synthesize degraded LQ natural images. Experiments show that ReDegNet learns the real degradation process from face images well, and that the restoration network trained with our synthetic pairs performs favorably against state-of-the-art methods. More importantly, our method provides a new way to handle unsynthesizable real-world scenarios: their degradation representations can be learned from the face images within them and then used for scenario-specific fine-tuning. The source code is available at https://github.com/csxmli2016/ReDegNet.
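The face-to-natural transfer idea can be illustrated with a deliberately simplified toy. This is not ReDegNet: as an assumption for illustration only, the learned, content-independent degradation representation is replaced by two hand-picked parameters (a Gaussian blur width and an additive noise level) on top of the classical handcrafted pipeline (blur, downsample, add noise). The sketch estimates these parameters from one HQ/LQ face pair and then applies them to an HQ natural image to synthesize its LQ counterpart. All function names (`degrade`, `estimate_degradation`, `transfer`) are hypothetical, and random arrays stand in for real images.

```python
import numpy as np

def gaussian_kernel(sigma):
    # 1-D Gaussian taps truncated at 3 sigma, normalized to sum to 1
    radius = int(np.ceil(3 * sigma))
    x = np.arange(-radius, radius + 1, dtype=np.float64)
    k = np.exp(-(x ** 2) / (2.0 * sigma ** 2))
    return k / k.sum()

def blur(img, sigma):
    # Separable Gaussian blur with edge padding; output has the input's shape
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(img, r, mode="edge")
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def degrade(hq, sigma, scale, noise_std, rng):
    # Handcrafted degradation: blur, downsample by `scale`, add Gaussian noise
    lq = blur(hq, sigma)[::scale, ::scale]
    return lq + rng.normal(0.0, noise_std, lq.shape)

def estimate_degradation(hq_face, lq_face, scale, sigmas=np.linspace(0.5, 3.0, 26)):
    # Hypothetical stand-in for a learned degradation encoder: grid-search the
    # blur width that best explains the LQ face, then measure the noise level
    # from the remaining residual.
    best_sigma, best_err = None, np.inf
    for s in sigmas:
        err = np.mean((blur(hq_face, s)[::scale, ::scale] - lq_face) ** 2)
        if err < best_err:
            best_sigma, best_err = s, err
    residual = lq_face - blur(hq_face, best_sigma)[::scale, ::scale]
    return {"sigma": float(best_sigma), "noise_std": float(residual.std())}

def transfer(hq_natural, params, scale, rng):
    # Reuse the degradation parameters estimated on the face pair
    return degrade(hq_natural, params["sigma"], scale, params["noise_std"], rng)

# Usage: a synthetic "face" pair with known degradation, then transfer
rng = np.random.default_rng(0)
hq_face = rng.random((128, 128))
lq_face = degrade(hq_face, sigma=1.5, scale=2, noise_std=0.02, rng=rng)
params = estimate_degradation(hq_face, lq_face, scale=2)
hq_natural = rng.random((128, 128))
lq_natural = transfer(hq_natural, params, scale=2, rng=rng)
```

The toy only works because its degradation is synthetic and fits the parametric model exactly. The abstract's point is that real degradations (e.g., in old photos) do not fit such a model, which is why ReDegNet predicts a learned degradation representation from real face pairs instead of a few handcrafted parameters.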
