Enhancing self-supervised monocular depth estimation with traditional visual odometry


Aug 12, 2019

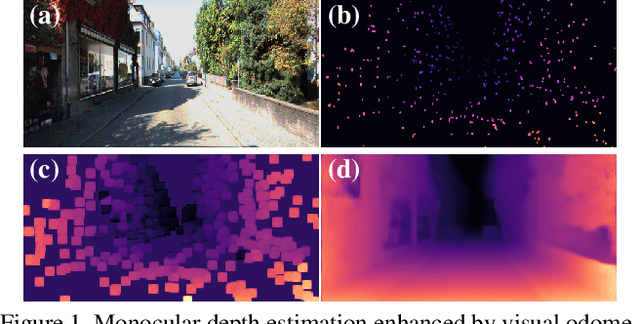

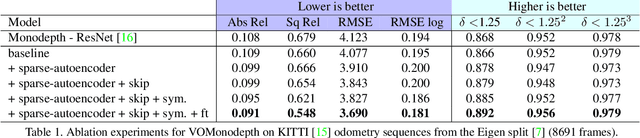

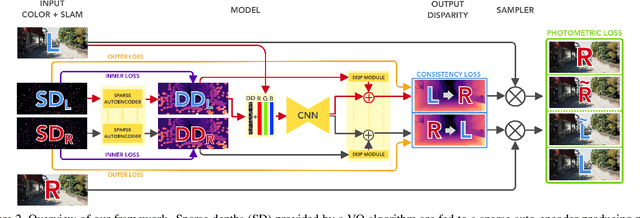

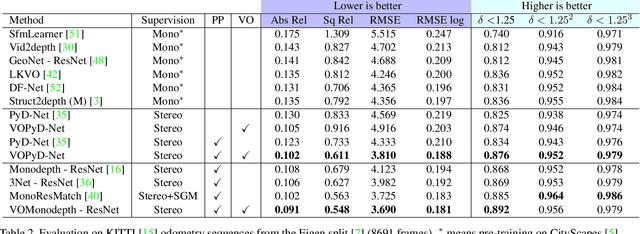

Estimating depth from a single image is an attractive alternative to more traditional approaches that rely on multiple cameras. In this field, deep learning has yielded outstanding results, at the cost of requiring large amounts of training data labeled with precise depth measurements. Self-supervised approaches soften this issue by leveraging monocular sequences or stereo pairs in place of expensive ground-truth depth annotations. This paper further improves monocular depth estimation by integrating a geometrical prior into existing self-supervised networks. Specifically, we propose a sparsity-invariant autoencoder able to process the output of conventional visual odometry algorithms, working in synergy with depth-from-mono networks. Experimental results on the KITTI dataset show that, by exploiting the geometrical prior, our proposal: i) outperforms existing approaches in the literature and ii) couples well with both compact and complex depth-from-mono architectures, allowing for its deployment on high-end GPUs as well as on embedded devices (e.g., NVIDIA Jetson TX2).
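To give a feel for what "sparsity-invariant" processing of sparse visual odometry depth can mean, the sketch below implements a mask-normalized convolution in the style of the sparsity-invariant CNN literature. It is an illustration under assumptions, not the paper's architecture: the abstract does not say which layers the autoencoder uses, and the function name `sparse_conv2d`, its parameters, and the toy inputs are all hypothetical.

```python
import numpy as np

def sparse_conv2d(depth, mask, kernel, eps=1e-8):
    """Mask-normalized 2D convolution over sparse depth (illustrative only).

    depth  : (H, W) array; values where mask == 0 are ignored
    mask   : (H, W) array of 0/1; 1 marks pixels that carry a depth sample
    kernel : (k, k) array of weights, k odd
    Returns the filtered depth and the propagated validity mask.
    """
    k = kernel.shape[0]
    pad = k // 2
    d = np.pad(depth * mask, pad)          # zero invalid pixels, then zero-pad
    m = np.pad(mask.astype(float), pad)
    H, W = depth.shape
    out = np.zeros((H, W))
    new_mask = np.zeros((H, W))
    for i in range(H):
        for j in range(W):
            d_win = d[i:i + k, j:j + k]
            m_win = m[i:i + k, j:j + k]
            valid = m_win.sum()
            # Weighted sum over valid samples, normalized by their count,
            # so the response does not shrink as the input gets sparser.
            out[i, j] = (d_win * kernel).sum() / (valid + eps)
            # A pixel becomes valid if any sample fell inside its window.
            new_mask[i, j] = 1.0 if valid > 0 else 0.0
    return out, new_mask

# Toy input (assumed): a single odometry point with depth 5 in a 4x4 image.
depth = np.full((4, 4), 5.0)
mask = np.zeros((4, 4))
mask[1, 1] = 1
out, new_mask = sparse_conv2d(depth, mask, np.ones((3, 3)))
```

Normalizing by the number of valid samples, rather than by the window size, is what keeps the output scale independent of how many depth points the odometry front end happens to provide.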
