Efficient Prediction of Peptide Self-assembly through Sequential and Graphical Encoding

Paper and Code

Jul 17, 2023

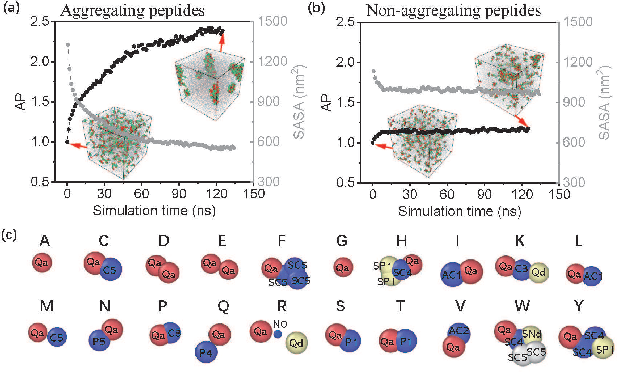

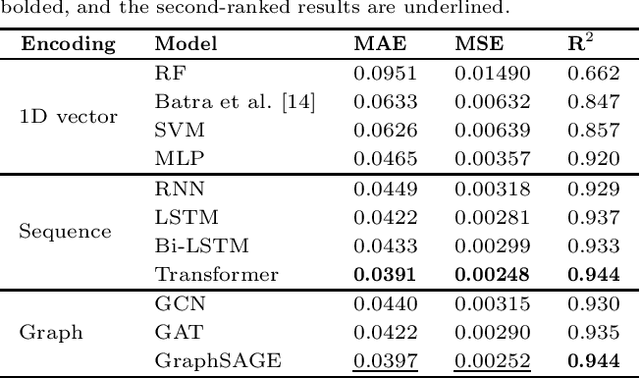

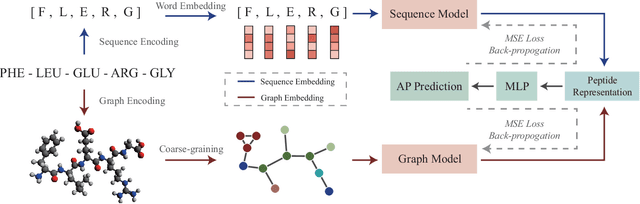

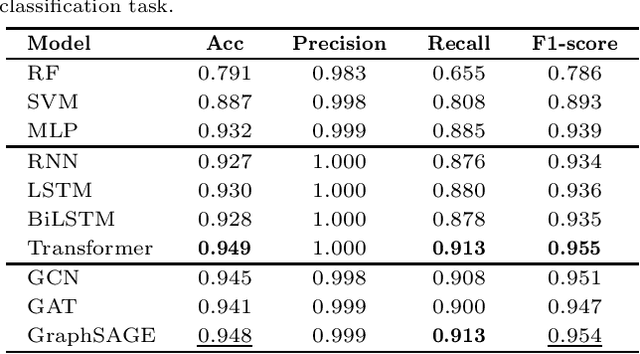

In recent years, research on applying deep learning to the prediction of peptide properties has grown rapidly, driven by the significant development and market potential of peptides. Molecular dynamics has enabled the efficient collection of large peptide datasets, providing reliable training data for deep learning. However, peptide encoding, which is essential for AI-assisted peptide-related tasks, has not been analyzed systematically, and closing this gap is an urgent step toward better prediction accuracy. To address this issue, we first collect a large, high-quality simulation dataset of peptide self-assembly containing over 62,000 samples generated by coarse-grained molecular dynamics (CGMD). Then, we systematically investigate how encoding peptides as amino acid sequences or as molecular graphs affects the accuracy of peptide self-assembly prediction, using state-of-the-art sequential models (i.e., RNN, LSTM, and Transformer) and structural deep learning models (i.e., GCN, GAT, and GraphSAGE). Self-assembly is an essential physicochemical process that precedes any peptide-related application. Extensive benchmarking shows the Transformer to be the most powerful sequence-encoding-based deep learning model, pushing the limit of peptide self-assembly prediction to decapeptides. In summary, this work provides a comprehensive benchmark analysis of peptide encoding with advanced deep learning models, serving as a guide for a wide range of peptide-related predictions, such as isoelectric point and hydration free energy.
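To make the two encodings contrasted in the abstract concrete, here is a minimal Python sketch, not the paper's implementation. The vocabulary, the padding scheme, the function names `encode_sequence` and `encode_graph`, and the residue-level chain graph are all assumptions made for illustration; the paper's graphs may instead be built at the atom or coarse-grained bead level.

```python
# Hypothetical vocabulary: the 20 standard amino acids, with id 0 reserved for padding.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
PAD_ID = 0
TOKEN_ID = {aa: i + 1 for i, aa in enumerate(AMINO_ACIDS)}


def encode_sequence(peptide, max_len=10):
    """Sequential encoding: map residues to integer tokens and pad to max_len.

    max_len=10 is an assumption chosen to cover peptides up to decapeptides.
    """
    if len(peptide) > max_len:
        raise ValueError(f"peptide longer than max_len={max_len}")
    ids = [TOKEN_ID[aa] for aa in peptide.upper()]
    return ids + [PAD_ID] * (max_len - len(ids))


def encode_graph(peptide):
    """Graphical encoding (assumed residue-level): one node per residue,
    with bidirectional edges between neighbours along the backbone."""
    nodes = [TOKEN_ID[aa] for aa in peptide.upper()]
    edges = []
    for i in range(len(nodes) - 1):
        edges.append((i, i + 1))
        edges.append((i + 1, i))
    return nodes, edges


if __name__ == "__main__":
    print(encode_sequence("FF"))   # token ids for diphenylalanine, padded
    print(encode_graph("GAV"))     # node ids and edge list for a tripeptide
```

A sequence model such as an RNN, LSTM, or Transformer would consume the padded token list, while a graph model such as GCN, GAT, or GraphSAGE would consume the node features together with the edge list.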
