DnSwin: Toward Real-World Denoising via Continuous Wavelet Sliding-Transformer

Paper and Code

Jul 28, 2022

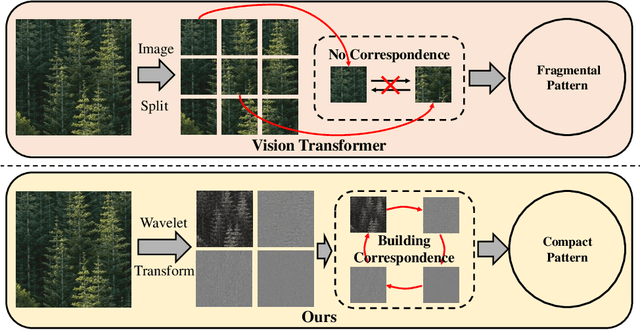

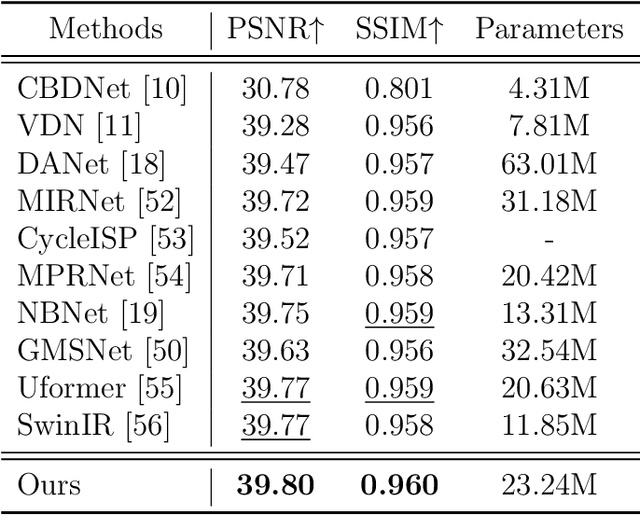

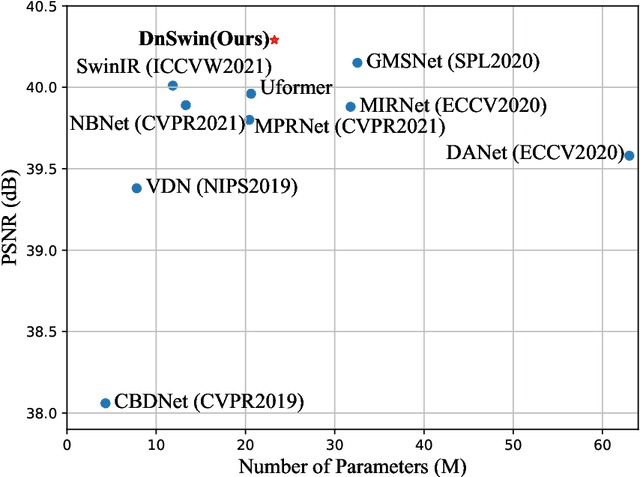

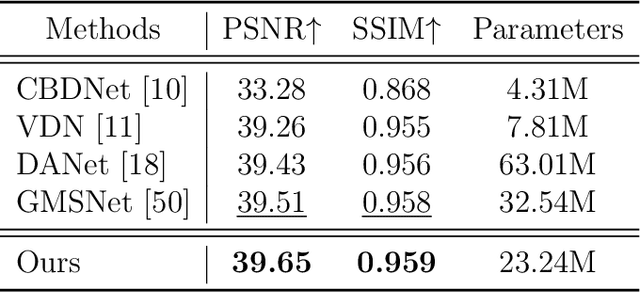

Real-world image denoising is a practical image restoration problem that aims to recover clean images from noisy in-the-wild inputs. Recently, the Vision Transformer (ViT) has shown a strong ability to capture long-range dependencies, and many researchers have attempted to apply it to image denoising. However, a real-world image is an isolated frame, so the ViT can only build long-range dependencies among the image's own internal patches, and dividing the image into patches disarranges the noise pattern and breaks gradient continuity. In this article, we propose to resolve this issue with a continuous Wavelet Sliding-Transformer, called DnSwin, that builds frequency correspondences in real-world scenes. Specifically, we first extract bottom-level features from the noisy input image using a CNN encoder. The key to DnSwin is to separate the high-frequency and low-frequency information in these features and to build dependencies between frequencies. To this end, we propose the Wavelet Sliding-Window Transformer, which applies a discrete wavelet transform, self-attention, and an inverse discrete wavelet transform to extract deep features. Finally, we reconstruct the denoised image from the deep features using a CNN decoder. Both quantitative and qualitative evaluations on real-world denoising benchmarks demonstrate that the proposed DnSwin performs favorably against state-of-the-art methods.
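To make the transform, attend, inverse-transform sequence described in the abstract concrete, the following is a minimal NumPy sketch and not the authors' implementation. It assumes a one-level Haar wavelet, a single attention head applied globally over all positions (the paper's sliding-window scheme is not reproduced here), and randomly initialised projection matrices. All function and variable names (`haar_dwt2`, `haar_idwt2`, `self_attention`, `wavelet_attention_block`, `wq`, `wk`, `wv`) are hypothetical and chosen for illustration only.

```python
import numpy as np


def haar_dwt2(x):
    """One-level 2D Haar DWT of an (H, W, C) array; H and W must be even."""
    a, b = x[0::2, 0::2], x[0::2, 1::2]
    c, d = x[1::2, 0::2], x[1::2, 1::2]
    low = (a + b + c + d) / 2    # low-frequency (approximation) band
    det1 = (a - b + c - d) / 2   # detail band: differences along the width
    det2 = (a + b - c - d) / 2   # detail band: differences along the height
    det3 = (a - b - c + d) / 2   # detail band: diagonal differences
    return low, det1, det2, det3


def haar_idwt2(low, det1, det2, det3):
    """Inverse of haar_dwt2: rebuild the (2H, 2W, C) array from four bands."""
    h, w, ch = low.shape
    x = np.empty((2 * h, 2 * w, ch), dtype=low.dtype)
    x[0::2, 0::2] = (low + det1 + det2 + det3) / 2
    x[0::2, 1::2] = (low - det1 + det2 - det3) / 2
    x[1::2, 0::2] = (low + det1 - det2 - det3) / 2
    x[1::2, 1::2] = (low - det1 - det2 + det3) / 2
    return x


def self_attention(tokens, wq, wk, wv):
    """Single-head scaled dot-product self-attention over (N, D) tokens."""
    q, k, v = tokens @ wq, tokens @ wk, tokens @ wv
    scores = q @ k.T / np.sqrt(q.shape[-1])
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v


def wavelet_attention_block(feat, wq, wk, wv):
    """DWT -> self-attention over stacked sub-bands -> inverse DWT."""
    bands = haar_dwt2(feat)
    stacked = np.concatenate(bands, axis=-1)   # (H/2, W/2, 4C)
    h, w, c4 = stacked.shape
    tokens = stacked.reshape(h * w, c4)        # one token per spatial position
    tokens = tokens + self_attention(tokens, wq, wk, wv)  # residual connection
    low, det1, det2, det3 = np.split(tokens.reshape(h, w, c4), 4, axis=-1)
    return haar_idwt2(low, det1, det2, det3)


rng = np.random.default_rng(0)
feat = rng.standard_normal((8, 8, 3))          # stand-in for encoder features
dim = 4 * feat.shape[-1]
wq, wk, wv = (0.1 * rng.standard_normal((dim, dim)) for _ in range(3))
out = wavelet_attention_block(feat, wq, wk, wv)
print(out.shape)
```

Because each token concatenates the low-frequency and detail coefficients of one spatial position, the attention projections in this sketch mix information across frequency bands, and the inverse transform restores the original spatial resolution. In the pipeline the abstract describes, `feat` would come from the CNN encoder and `out` would be passed to the CNN decoder.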
