Differentially Private Data Generative Models

Paper and Code

Dec 06, 2018

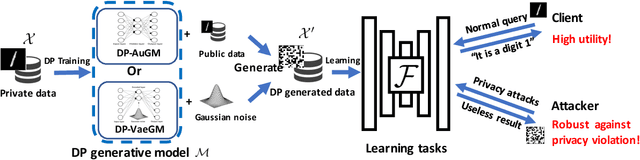

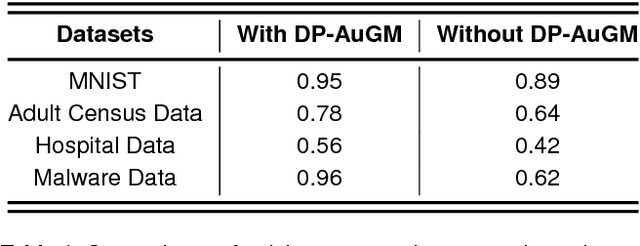

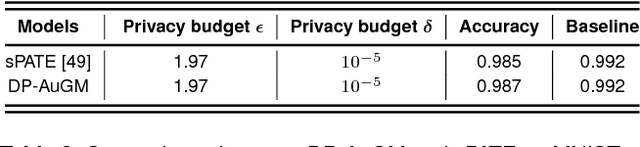

Deep neural networks (DNNs) have recently been widely adopted in various applications, and such success is largely due to a combination of algorithmic breakthroughs, computation resource improvements, and access to a large amount of data. However, the large-scale data collections required for deep learning often contain sensitive information, therefore raising many privacy concerns. Prior research has shown several successful attacks in inferring sensitive training data information, such as model inversion, membership inference, and generative adversarial networks (GAN) based leakage attacks against collaborative deep learning. In this paper, to enable learning efficiency as well as to generate data with privacy guarantees and high utility, we propose a differentially private autoencoder-based generative model (DP-AuGM) and a differentially private variational autoencoder-based generative model (DP-VaeGM). We evaluate the robustness of two proposed models. We show that DP-AuGM can effectively defend against the model inversion, membership inference, and GAN-based attacks. We also show that DP-VaeGM is robust against the membership inference attack. We conjecture that the key to defend against the model inversion and GAN-based attacks is not due to differential privacy but the perturbation of training data. Finally, we demonstrate that both DP-AuGM and DP-VaeGM can be easily integrated with real-world machine learning applications, such as machine learning as a service and federated learning, which are otherwise threatened by the membership inference attack and the GAN-based attack, respectively.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge