Differentiable Architecture Search with Ensemble Gumbel-Softmax

Paper and Code

May 06, 2019

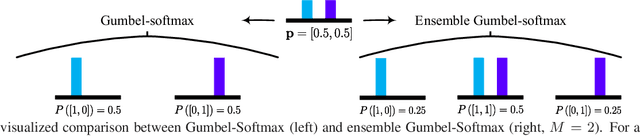

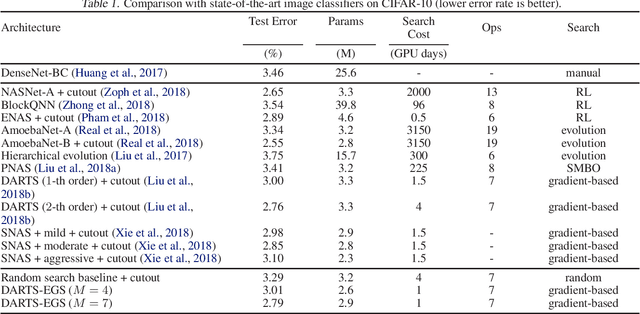

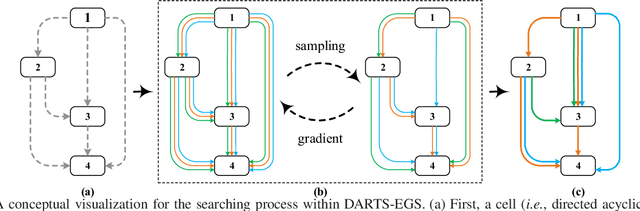

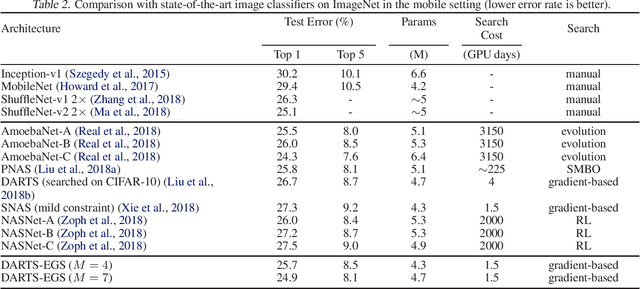

For network architecture search (NAS), it is crucial but challenging to simultaneously guarantee both effectiveness and efficiency. Towards achieving this goal, we develop a differentiable NAS solution, where the search space includes arbitrary feed-forward network consisting of the predefined number of connections. Benefiting from a proposed ensemble Gumbel-Softmax estimator, our method optimizes both the architecture of a deep network and its parameters in the same round of backward propagation, yielding an end-to-end mechanism of searching network architectures. Extensive experiments on a variety of popular datasets strongly evidence that our method is capable of discovering high-performance architectures, while guaranteeing the requisite efficiency during searching.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge