Design Automation for Fast, Lightweight, and Effective Deep Learning Models: A Survey

Aug 22, 2022

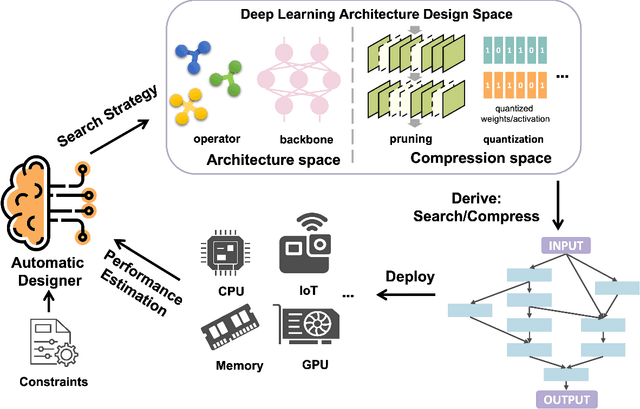

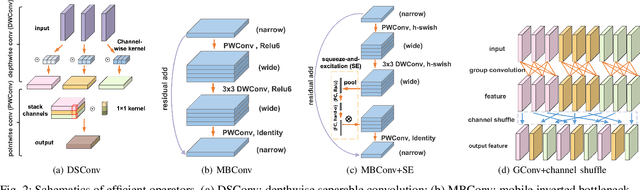

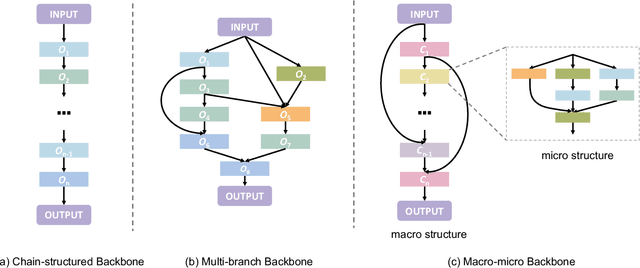

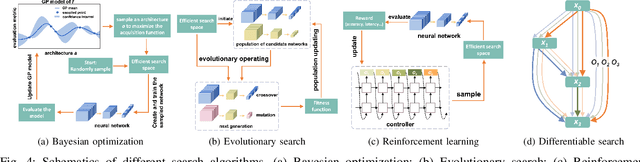

Deep learning technologies have demonstrated remarkable effectiveness in a wide range of tasks, and deep learning holds the potential to advance a multitude of applications, including edge computing, where deep models are deployed on edge devices to enable instant data processing and response. A key challenge is that while deep models often incur substantial memory and computational costs, edge devices typically offer only very limited storage and computational capabilities, which may also vary substantially across devices. These characteristics make it difficult to build deep learning solutions that unleash the potential of edge devices while complying with their constraints. A promising approach to addressing this challenge is to automate the design of effective deep learning models that are lightweight, require little storage, and incur low computational overhead. This survey offers comprehensive coverage of studies of design automation techniques for deep learning models targeting edge computing. It offers an overview and comparison of the key metrics commonly used to quantify the proficiency of models in terms of effectiveness, lightness, and computational cost. The survey then covers three categories of state-of-the-art deep model design automation techniques: automated neural architecture search, automated model compression, and joint automated design and compression. Finally, the survey covers open issues and directions for future research.
