Deep weakly-supervised learning methods for classification and localization in histology images: a survey


Sep 26, 2019

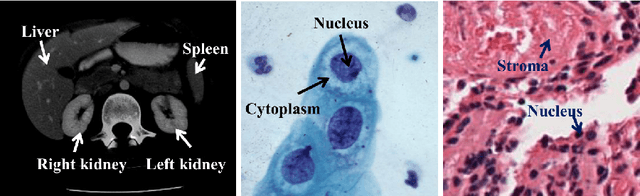

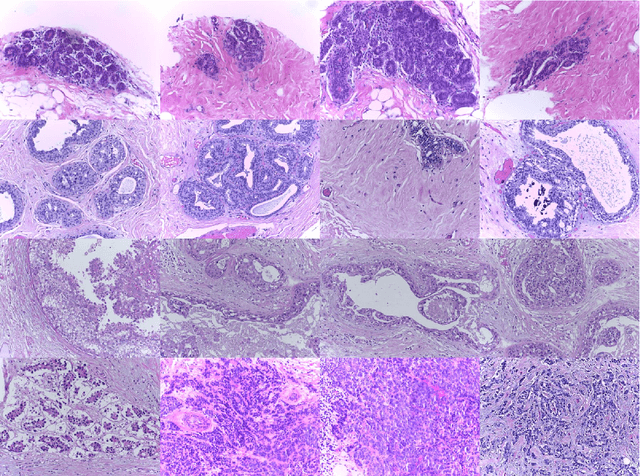

Using state-of-the-art deep learning models for the computer-assisted diagnosis of diseases like cancer raises several challenges related to the nature and availability of labeled histology images. In particular, cancer grading and localization in these images normally rely on both image- and pixel-level labels, the latter requiring a costly annotation process. In this survey, deep weakly-supervised learning (WSL) architectures are investigated to identify and locate diseases in histology images, without the need for pixel-level annotations. Given a training dataset with globally-annotated images, these models can simultaneously classify histology images and localize the corresponding regions of interest. These models are organized into two main approaches: (1) bottom-up approaches (based on forward-pass information through a network, either by spatial pooling of representations/scores, or by detecting class regions), and (2) top-down approaches (based on backward-pass information within a network, inspired by human visual attention). Since relevant WSL models have mainly been developed in the computer vision community and validated on natural scene images, we assess the extent to which they apply to histology images, which have challenging properties, e.g., large size, non-salient and highly unstructured regions, stain heterogeneity, and coarse/ambiguous labels. The most relevant deep WSL models (e.g., CAM, WILDCAT, and Deep MIL) are compared experimentally in terms of accuracy (classification and pixel-level localization) on several public benchmark histology datasets for breast and colon cancer (BACH ICIAR 2018, BreakHis, CAMELYON16, and GlaS). Results indicate that several deep learning models, in particular WILDCAT and Deep MIL, can provide a high level of classification accuracy, although pixel-wise localization of cancer regions remains an issue for such images.
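
To make the bottom-up idea of spatial pooling of scores more concrete, here is a minimal sketch in plain Python. It is not the implementation of any model compared in the survey. It assumes a network has already produced one spatial score map per class, and the function name `classify_and_localize`, the use of global average pooling, and the `threshold` parameter are all illustrative assumptions. Pooling each map gives an image-level score for classification, and thresholding the map of the predicted class gives a coarse localization mask, so only image-level labels would be needed for training.

```python
def classify_and_localize(score_maps, threshold=0.5):
    """Illustrative sketch (hypothetical helper, not from the survey).

    score_maps: list of per-class 2D score maps (lists of rows of floats),
    assumed to come from the last layer of some network.
    """
    # Image-level score per class: global average pooling over all positions.
    image_scores = [
        sum(sum(row) for row in class_map) / (len(class_map) * len(class_map[0]))
        for class_map in score_maps
    ]
    # Predicted class is the one with the highest pooled score.
    predicted = max(range(len(image_scores)), key=image_scores.__getitem__)

    # Localization: min-max normalize the predicted class map, then threshold.
    cam = score_maps[predicted]
    values = [v for row in cam for v in row]
    lo, hi = min(values), max(values)
    span = hi - lo
    mask = [
        [span > 0 and (v - lo) / span >= threshold for v in row]
        for row in cam
    ]
    return predicted, image_scores, mask
```

Average pooling is only one possible choice here. Other pooling functions weight spatial positions differently, which changes how sharply the resulting map highlights a region.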
