Data-Driven Bandit Learning for Proactive Cache Placement in Fog-Assisted IoT Systems

Paper and Code

Aug 01, 2020

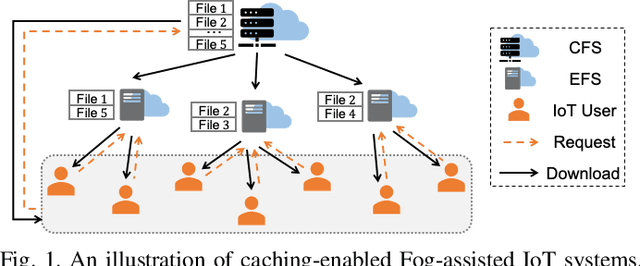

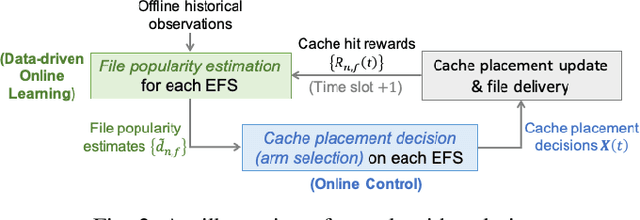

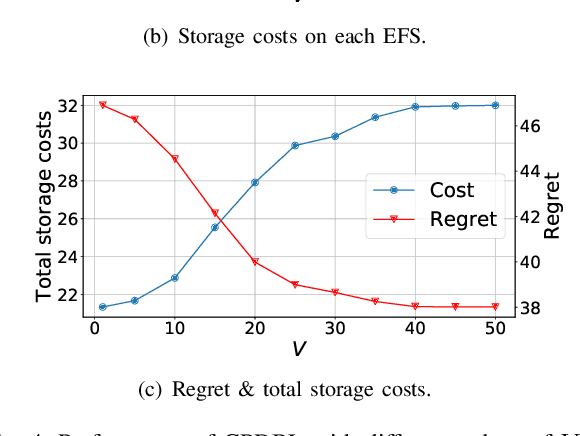

In fog-assisted IoT systems, it is common practice to cache popular content at the network edge to achieve high quality of service. Because of practical uncertainties such as unknown file popularities, cache placement scheme design remains an open problem with three unresolved challenges: 1) how to keep time-averaged storage costs within budget, 2) how to incorporate online learning into cache placement to minimize performance loss (a.k.a. regret), and 3) how to exploit offline history information to further reduce regret. In this paper, we formulate the cache placement problem with unknown file popularities as a constrained combinatorial multi-armed bandit (CMAB) problem. To solve the problem, we employ virtual queue techniques to manage the time-averaged constraints, and we adopt data-driven bandit learning methods that integrate offline history information into online learning to handle the exploration-exploitation tradeoff. By combining online control with data-driven online learning, we devise a Cache Placement scheme with Data-driven Bandit Learning, called CPDBL. Our theoretical analysis and simulations show that CPDBL achieves a sublinear time-averaged regret under long-term storage cost constraints.
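The two ingredients named in the abstract, a virtual queue for the time-averaged cost constraint and a bandit index warm-started from offline history, can be illustrated with a small sketch. This is not the paper's CPDBL algorithm: the function name `simulate`, the Bernoulli request model, the UCB bonus constant, the tradeoff weight `V`, and the greedy top-`capacity` selection rule are all assumptions made here for illustration only.

```python
import math
import random

def simulate(popularity, costs, budget, capacity, offline, horizon, V=10.0, seed=0):
    """Toy cache placement loop (illustrative assumptions, not CPDBL itself).

    popularity: true request probability per file (unknown to the learner)
    costs:      storage cost per file per time slot
    budget:     target time-averaged storage cost
    offline:    per-file list of historical 0/1 request observations
    """
    rng = random.Random(seed)
    n = len(popularity)
    # Warm start: offline history seeds the counts and sums of each arm.
    counts = [len(h) for h in offline]
    sums = [float(sum(h)) for h in offline]
    queue = 0.0        # virtual queue tracking accumulated budget violation
    total_cost = 0.0
    for t in range(1, horizon + 1):
        scores = []
        for i in range(n):
            if counts[i] == 0:
                ucb = 1.0  # optimistic estimate for a file never observed
            else:
                mean = sums[i] / counts[i]
                bonus = math.sqrt(1.5 * math.log(t + 1) / counts[i])
                ucb = min(1.0, mean + bonus)
            # Drift-plus-penalty style score: reward estimate versus queue-weighted cost.
            scores.append(V * ucb - queue * costs[i])
        ranked = sorted(range(n), key=lambda i: scores[i], reverse=True)
        chosen = [i for i in ranked[:capacity] if scores[i] > 0]
        # Semi-bandit feedback: only cached files reveal whether they were requested.
        for i in chosen:
            hit = 1 if rng.random() < popularity[i] else 0
            counts[i] += 1
            sums[i] += hit
        cost = sum(costs[i] for i in chosen)
        total_cost += cost
        # Queue grows when the slot cost exceeds the budget and drains otherwise.
        queue = max(queue + cost - budget, 0.0)
    return total_cost / horizon, queue, counts

if __name__ == "__main__":
    avg_cost, backlog, counts = simulate(
        popularity=[0.9, 0.6, 0.3, 0.1],
        costs=[2.0, 1.0, 1.0, 1.0],
        budget=2.0,
        capacity=2,
        offline=[[1, 1, 0], [1, 0], [], [0]],
        horizon=2000,
    )
    print(avg_cost, backlog, counts)
```

Under this update rule the final backlog bounds the budget violation: the time-averaged cost is at most `budget + queue / horizon`, so a bounded queue implies the constraint is met in the long run. A larger `V` weights estimated popularity more heavily against storage cost in this sketch.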
