Contrastive Explanations of Plans Through Model Restrictions

Mar 29, 2021

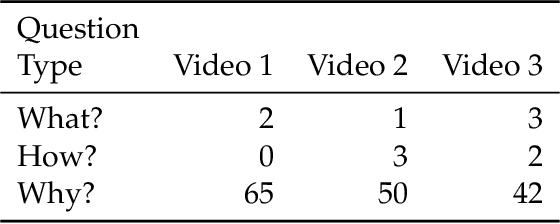

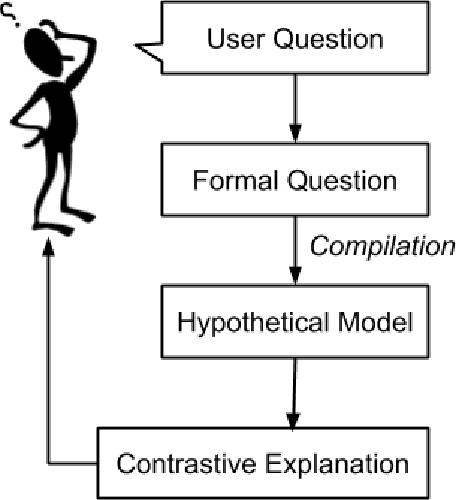

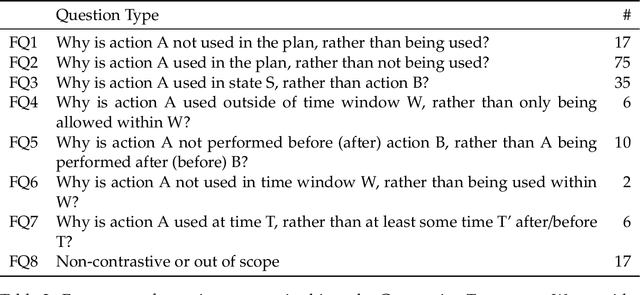

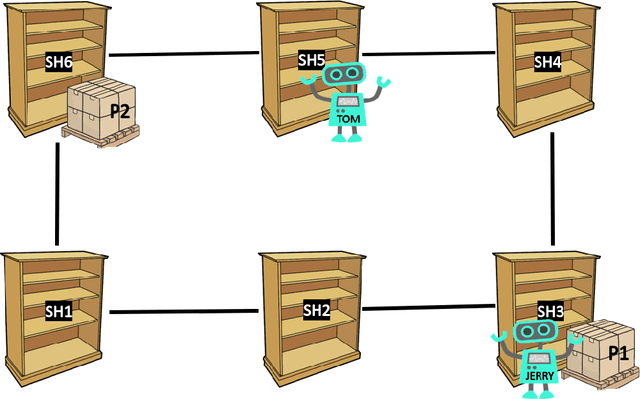

In automated planning, the need for explanations arises when there is a mismatch between a proposed plan and the user's expectation. We frame Explainable AI Planning in the context of the plan negotiation problem, in which a succession of hypothetical planning problems is generated and solved. The object of the negotiation is for the user to understand the proposed plan and ultimately arrive at a satisfactory one. We present the results of a user study demonstrating that when users ask questions about plans, those questions are contrastive, i.e. of the form "why A rather than B?". We use the data from this study to construct a taxonomy of user questions that often arise during plan negotiation. We formally define our approach to plan negotiation through model restriction as an iterative process. This approach generates hypothetical problems and contrastive plans by restricting the model with constraints implied by user questions. For each constraint derived from a user question in the taxonomy, we formally define a model-based compilation in PDDL2.1, and we empirically evaluate the compilations in terms of computational complexity. The compilations were implemented as part of an explanation framework that employs iterative model restriction. We demonstrate the framework's benefits in a second user study.
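To make the iterative process concrete, the following is a minimal toy sketch of negotiation through model restriction. It is not the paper's PDDL2.1 compilation. It assumes a planning model reduced to a small weighted state graph, a user question of the form "why action A rather than something else?", and a restriction that simply forbids A. All names here (`ToyModel`, `restrict`, `solve`, `negotiate`, and the delivery example) are hypothetical and introduced only for illustration.

```python
import heapq
from dataclasses import dataclass
from typing import Dict, FrozenSet, List, Optional, Tuple

Plan = List[str]


@dataclass(frozen=True)
class ToyModel:
    """Hypothetical stand-in for a planning model: a weighted state graph."""
    # edges[state] -> list of (action_name, next_state, cost)
    edges: Dict[str, List[Tuple[str, str, int]]]
    start: str
    goal: str
    # Actions ruled out so far by user questions (assumed constraint form).
    forbidden: FrozenSet[str] = frozenset()


def restrict(model: ToyModel, action: str) -> ToyModel:
    """Return a hypothetical model in which `action` may no longer be used."""
    return ToyModel(model.edges, model.start, model.goal,
                    model.forbidden | {action})


def solve(model: ToyModel) -> Optional[Tuple[Plan, int]]:
    """Uniform-cost search; returns (plan, cost), or None if unsolvable."""
    frontier: List[Tuple[int, str, Plan]] = [(0, model.start, [])]
    seen = set()
    while frontier:
        cost, state, plan = heapq.heappop(frontier)
        if state == model.goal:
            return plan, cost
        if state in seen:
            continue
        seen.add(state)
        for action, nxt, step_cost in model.edges.get(state, []):
            if action not in model.forbidden:
                heapq.heappush(frontier,
                               (cost + step_cost, nxt, plan + [action]))
    return None


def negotiate(model: ToyModel, questioned_actions: List[str]):
    """Apply one restriction per question, re-solving after each one."""
    history = [solve(model)]
    for action in questioned_actions:
        model = restrict(model, action)   # hypothetical planning problem
        history.append(solve(model))      # contrastive plan, if any
    return history


# Toy delivery domain (illustrative data only).
edges = {
    "depot": [("drive_highway", "city", 2), ("drive_backroad", "city", 5)],
    "city": [("deliver", "done", 1)],
}
history = negotiate(ToyModel(edges, "depot", "done"),
                    ["drive_highway", "drive_backroad"])
for step, result in enumerate(history):
    print(step, result)
```

In this sketch the user first questions `drive_highway`, so the restricted model yields a contrastive plan that uses the back road at a higher cost. Comparing the two plans is what answers the question. Questioning `drive_backroad` as well leaves the restricted problem unsolvable, which is itself an explanation of why one of those actions is needed.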
