CM-NAS: Rethinking Cross-Modality Neural Architectures for Visible-Infrared Person Re-Identification

Jan 21, 2021

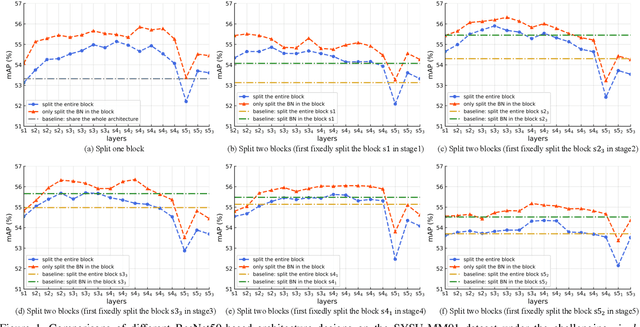

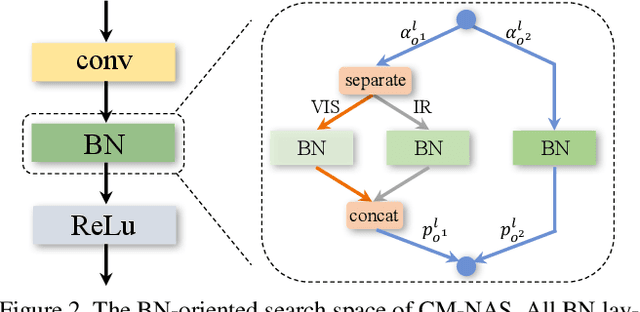

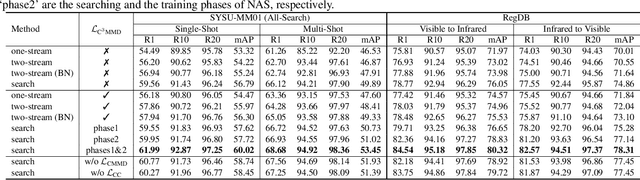

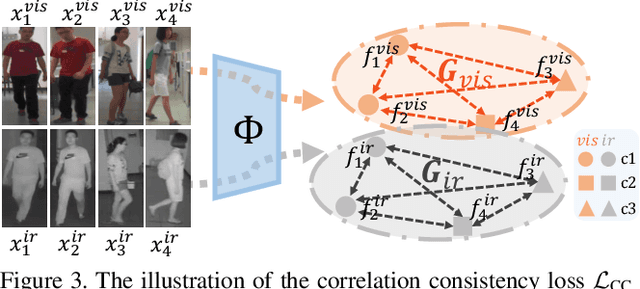

Visible-Infrared person re-identification (VI-ReID) aims to match pedestrian images across the visible and infrared modalities, overcoming the limitation of single-modality person ReID in dark environments. To mitigate the impact of the large modality discrepancy, existing works manually design various two-stream architectures that separately learn modality-specific and modality-sharable representations. Such a manual design routine, however, depends heavily on extensive experiments and empirical practice, which is time-consuming and labor-intensive. In this paper, we systematically study the manually designed architectures and find that appropriately splitting Batch Normalization (BN) layers to learn modality-specific representations brings a significant boost to cross-modality matching. Based on this observation, the essential objective is to find the optimal splitting scheme for each BN layer. To this end, we propose a novel method named Cross-Modality Neural Architecture Search (CM-NAS). It consists of a BN-oriented search space in which standard optimization can be carried out for the cross-modality task. In addition, to better guide the search process, we formulate a new Correlation Consistency based Class-specific Maximum Mean Discrepancy (C3MMD) loss. Beyond the modality discrepancy, it also considers the similarity correlations in the two modalities, which previous works have overlooked. Owing to these advantages, our method outperforms state-of-the-art counterparts in extensive experiments, improving Rank-1/mAP by 6.70%/6.13% on SYSU-MM01 and 12.17%/11.23% on RegDB. The source code will be released soon.
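The abstract's central observation is that letting each modality use its own Batch Normalization statistics can help cross-modality matching. The following is a minimal pure-Python sketch of that idea for a single BN layer, not the authors' implementation. The function names `batch_norm` and `split_batch_norm`, the boolean `split` flag, and the `"visible"`/`"infrared"` labels are hypothetical; learnable affine parameters (gamma/beta), running statistics, and the search procedure itself are omitted.

```python
from statistics import fmean, pvariance


def batch_norm(batch, eps=1e-5):
    """Normalize each feature column of `batch` (a list of equal-length rows)
    using the mean and population variance of that batch.
    Affine parameters and running statistics are deliberately omitted."""
    cols = list(zip(*batch))
    means = [fmean(c) for c in cols]
    variances = [pvariance(c, mu=m) for c, m in zip(cols, means)]
    return [
        [(x - m) / (v + eps) ** 0.5 for x, m, v in zip(row, means, variances)]
        for row in batch
    ]


def split_batch_norm(batch, modalities, split=True, eps=1e-5):
    """Hypothetical single-layer choice: if `split` is True, samples of each
    modality are normalized with their own statistics (modality-specific BN);
    otherwise all samples share one set of statistics (shared BN)."""
    if not split:
        return batch_norm(batch, eps)
    out = [None] * len(batch)
    for mod in set(modalities):
        # Gather this modality's rows, normalize them together, and write
        # the results back to their original positions in the batch.
        idx = [i for i, m in enumerate(modalities) if m == mod]
        normed = batch_norm([batch[i] for i in idx], eps)
        for i, row in zip(idx, normed):
            out[i] = row
    return out


# Toy batch: infrared features are offset from visible ones (made-up numbers).
batch = [[1.0, 10.0], [3.0, 14.0], [101.0, 50.0], [103.0, 54.0]]
modalities = ["visible", "visible", "infrared", "infrared"]
shared = split_batch_norm(batch, modalities, split=False)
separate = split_batch_norm(batch, modalities, split=True)
```

With shared statistics, the visible rows stay negative and the infrared rows positive in the first feature, so the modality offset survives normalization; with split statistics, each modality is centered independently and both map to roughly -1 and +1. Per the abstract, CM-NAS searches for the optimal splitting scheme for each BN layer; the paper's actual search space may be richer than the single on/off flag assumed here.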
