Bolt-on Differential Privacy for Scalable Stochastic Gradient Descent-based Analytics

Paper and Code

Mar 23, 2017

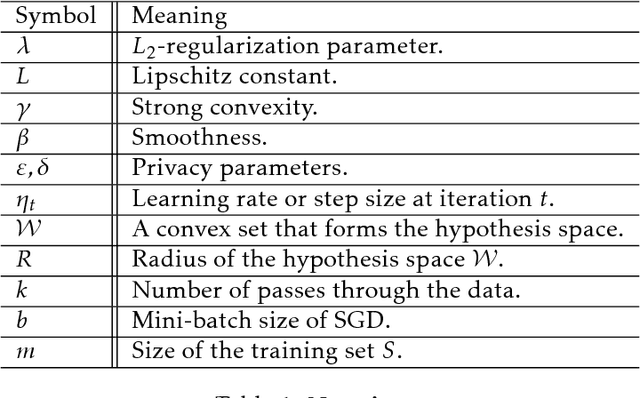

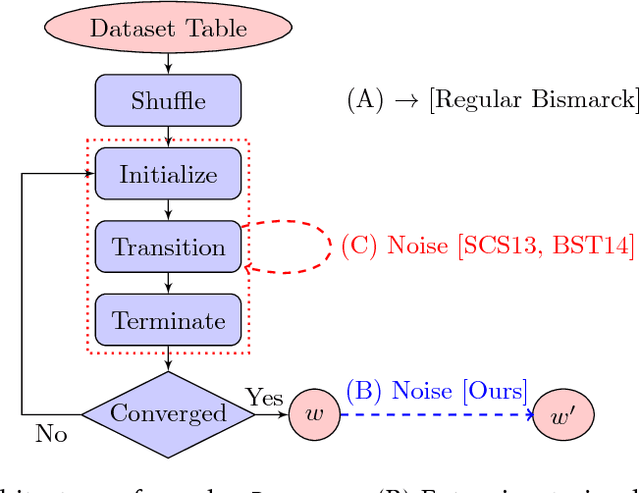

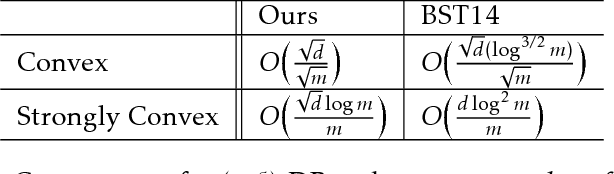

While significant progress has been made separately on analytics systems for scalable stochastic gradient descent (SGD) and private SGD, none of the major scalable analytics frameworks have incorporated differentially private SGD. There are two inter-related issues for this disconnect between research and practice: (1) low model accuracy due to added noise to guarantee privacy, and (2) high development and runtime overhead of the private algorithms. This paper takes a first step to remedy this disconnect and proposes a private SGD algorithm to address \emph{both} issues in an integrated manner. In contrast to the white-box approach adopted by previous work, we revisit and use the classical technique of {\em output perturbation} to devise a novel "bolt-on" approach to private SGD. While our approach trivially addresses (2), it makes (1) even more challenging. We address this challenge by providing a novel analysis of the $L_2$-sensitivity of SGD, which allows, under the same privacy guarantees, better convergence of SGD when only a constant number of passes can be made over the data. We integrate our algorithm, as well as other state-of-the-art differentially private SGD, into Bismarck, a popular scalable SGD-based analytics system on top of an RDBMS. Extensive experiments show that our algorithm can be easily integrated, incurs virtually no overhead, scales well, and most importantly, yields substantially better (up to 4X) test accuracy than the state-of-the-art algorithms on many real datasets.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge