Benchmarking Discrete Optimization Heuristics with IOHprofiler

Dec 19, 2019

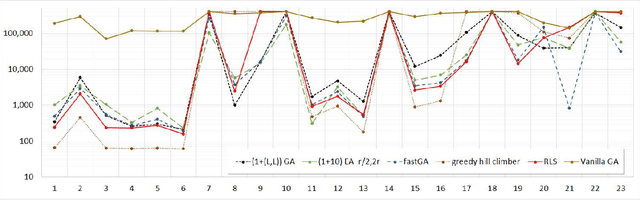

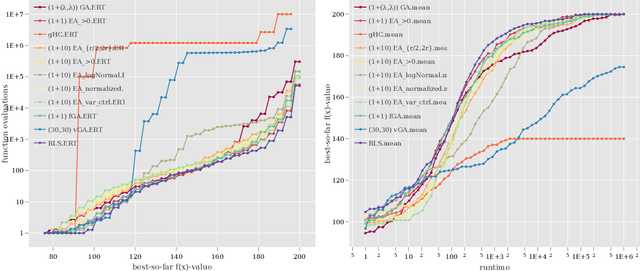

Automated benchmarking environments aim to support researchers in understanding how different algorithms perform on different types of optimization problems. Such comparisons provide insights into the strengths and weaknesses of different approaches, which can be leveraged to design new algorithms and to automate algorithm selection and configuration. With the ultimate goal of creating a meaningful benchmark set for iterative optimization heuristics, we have recently released IOHprofiler, a software tool built to create detailed performance comparisons between iterative optimization heuristics. In this work we demonstrate that IOHprofiler provides a suitable environment for automated benchmarking. We compile and assess a selection of 23 discrete optimization problems that represent different types of fitness landscapes. For each selected problem we compare the performance of twelve different heuristics, which are now available as baseline algorithms in IOHprofiler. We also provide a new module for IOHprofiler which extends the fixed-target and fixed-budget results for the individual problems with ECDF (empirical cumulative distribution function) results, which allow one to derive aggregated performance statistics for groups of problems.
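
To illustrate how ECDF results can aggregate fixed-target performance across a group of problems, here is a minimal Python sketch. It does not use the IOHprofiler API. The function names `hitting_time` and `ecdf`, the data layout, the problem labels, and the toy fitness values are all assumptions made for illustration, and the sketch assumes maximization with one fitness value recorded per evaluation.

```python
from typing import Dict, List, Optional, Sequence


def hitting_time(trajectory: Sequence[float], target: float) -> Optional[int]:
    """Return the 1-based evaluation count at which the run first reaches
    `target` (maximization), or None if the target is never reached."""
    for evals, value in enumerate(trajectory, start=1):
        if value >= target:
            return evals
    return None


def ecdf(
    runs: Dict[str, List[Sequence[float]]],
    targets: Dict[str, Sequence[float]],
    budgets: Sequence[int],
) -> List[float]:
    """For each budget, return the fraction of (problem, run, target)
    triples whose target was reached within that many evaluations."""
    times: List[Optional[int]] = []
    for problem, trajectories in runs.items():
        for trajectory in trajectories:
            for target in targets[problem]:
                times.append(hitting_time(trajectory, target))
    total = len(times)
    return [
        sum(1 for t in times if t is not None and t <= budget) / total
        for budget in budgets
    ]


# Hypothetical toy data: fitness value observed at each evaluation.
runs = {
    "OneMax": [[3, 5, 5, 8], [2, 4, 6, 7]],
    "LeadingOnes": [[1, 1, 3, 4]],
}
# Hypothetical target values per problem.
targets = {"OneMax": [5, 8], "LeadingOnes": [3]}
budgets = [1, 2, 3, 4]

curve = ecdf(runs, targets, budgets)
print(curve)  # [0.0, 0.2, 0.6, 0.8]
```

Each point on the curve pools all runs and targets of all problems, which is what makes a single aggregated statistic possible; unsuccessful runs simply never contribute to the numerator.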
