ANUBIS: Skeleton Action Recognition Dataset, Review, and Benchmark

Paper and Code

May 08, 2022

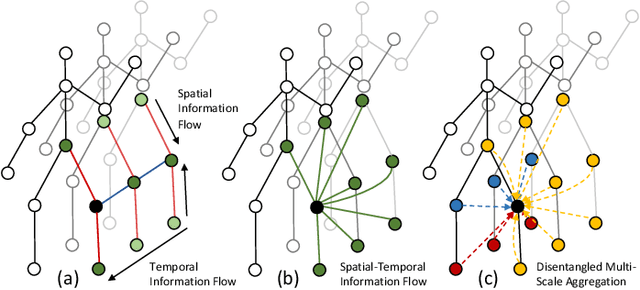

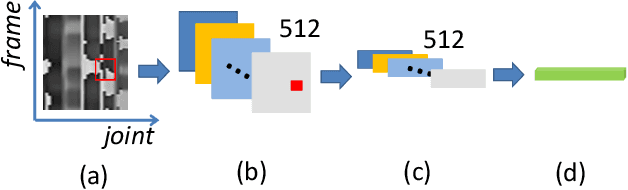

Skeleton-based action recognition, as a subarea of action recognition, is swiftly accumulating attention and popularity. The task is to recognize actions performed by human articulation points. Compared with other data modalities, 3D human skeleton representations have extensive unique desirable characteristics, including succinctness, robustness, racial-impartiality, and many more. We aim to provide a roadmap for new and existing researchers a on the landscapes of skeleton-based action recognition for new and existing researchers. To this end, we present a review in the form of a taxonomy on existing works of skeleton-based action recognition. We partition them into four major categories: (1) datasets; (2) extracting spatial features; (3) capturing temporal patterns; (4) improving signal quality. For each method, we provide concise yet informatively-sufficient descriptions. To promote more fair and comprehensive evaluation on existing approaches of skeleton-based action recognition, we collect ANUBIS, a large-scale human skeleton dataset. Compared with previously collected dataset, ANUBIS are advantageous in the following four aspects: (1) employing more recently released sensors; (2) containing novel back view; (3) encouraging high enthusiasm of subjects; (4) including actions of the COVID pandemic era. Using ANUBIS, we comparably benchmark performance of current skeleton-based action recognizers. At the end of this paper, we outlook future development of skeleton-based action recognition by listing several new technical problems. We believe they are valuable to solve in order to commercialize skeleton-based action recognition in the near future. The dataset of ANUBIS is available at: http://hcc-workshop.anu.edu.au/webs/anu101/home.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge