Analyzing Adversarial Robustness of Deep Neural Networks in Pixel Space: a Semantic Perspective

Paper and Code

Jun 18, 2021

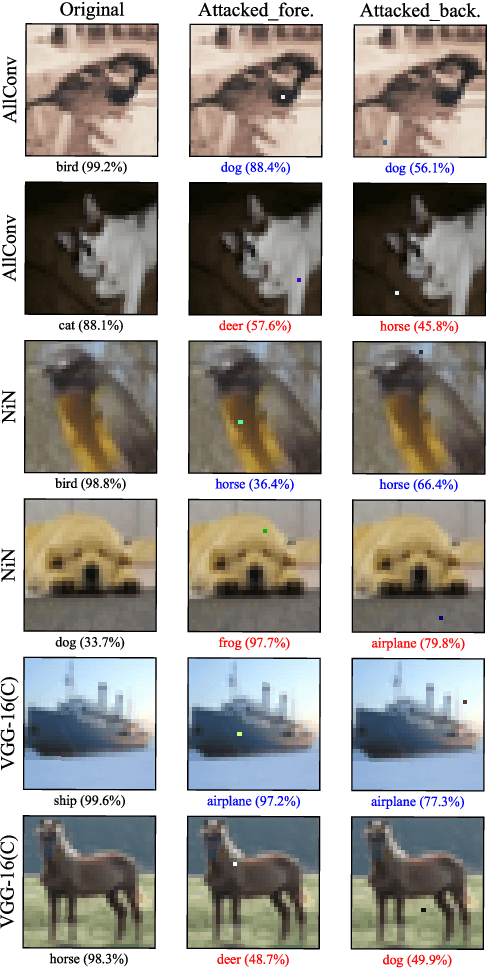

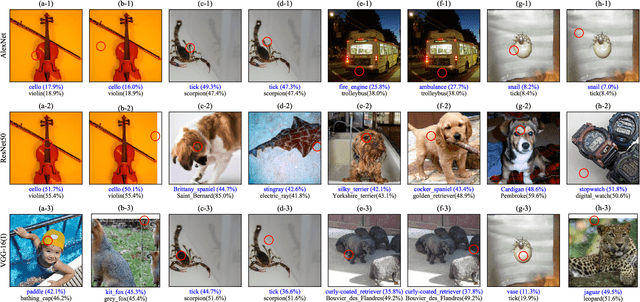

The vulnerability of deep neural networks to adversarial examples, which are crafted maliciously by modifying the inputs with imperceptible perturbations to misled the network produce incorrect outputs, reveals the lack of robustness and poses security concerns. Previous works study the adversarial robustness of image classifiers on image level and use all the pixel information in an image indiscriminately, lacking of exploration of regions with different semantic meanings in the pixel space of an image. In this work, we fill this gap and explore the pixel space of the adversarial image by proposing an algorithm to looking for possible perturbations pixel by pixel in different regions of the segmented image. The extensive experimental results on CIFAR-10 and ImageNet verify that searching for the modified pixel in only some pixels of an image can successfully launch the one-pixel adversarial attacks without requiring all the pixels of the entire image, and there exist multiple vulnerable points scattered in different regions of an image. We also demonstrate that the adversarial robustness of different regions on the image varies with the amount of semantic information contained.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge