Adversarial Robustness Guarantees for Gaussian Processes

Paper and Code

Apr 07, 2021

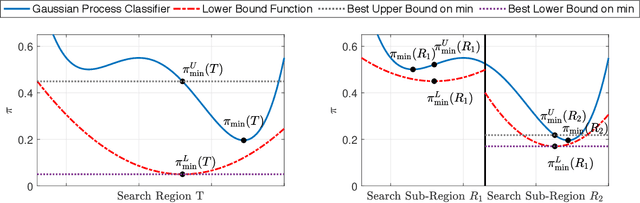

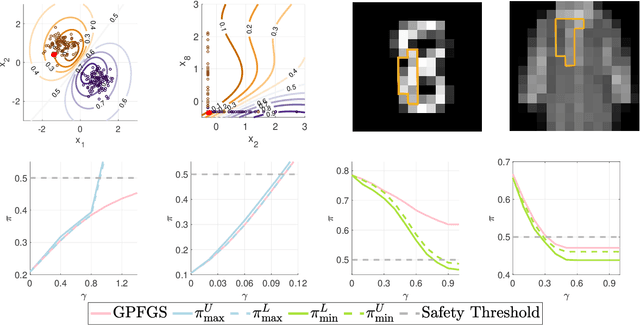

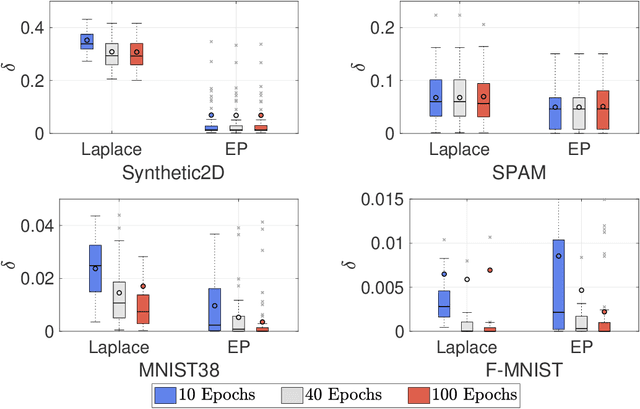

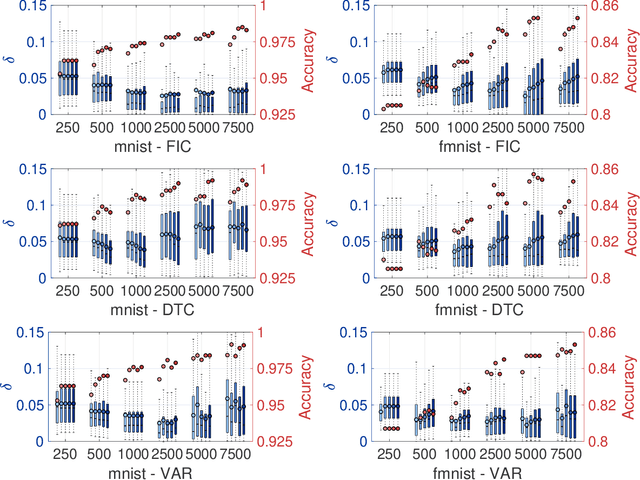

Gaussian processes (GPs) enable principled computation of model uncertainty, making them attractive for safety-critical applications. Such scenarios demand that GP decisions are not only accurate, but also robust to perturbations. In this paper we present a framework to analyse adversarial robustness of GPs, defined as invariance of the model's decision to bounded perturbations. Given a compact subset of the input space $T\subseteq \mathbb{R}^d$, a point $x^*$ and a GP, we provide provable guarantees of adversarial robustness of the GP by computing lower and upper bounds on its prediction range in $T$. We develop a branch-and-bound scheme to refine the bounds and show, for any $\epsilon > 0$, that our algorithm is guaranteed to converge to values $\epsilon$-close to the actual values in finitely many iterations. The algorithm is anytime and can handle both regression and classification tasks, with analytical formulation for most kernels used in practice. We evaluate our methods on a collection of synthetic and standard benchmark datasets, including SPAM, MNIST and FashionMNIST. We study the effect of approximate inference techniques on robustness and demonstrate how our method can be used for interpretability. Our empirical results suggest that the adversarial robustness of GPs increases with accurate posterior estimation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge