Adherence and Constancy in LIME-RS Explanations for Recommendation

Paper and Code

Sep 05, 2021

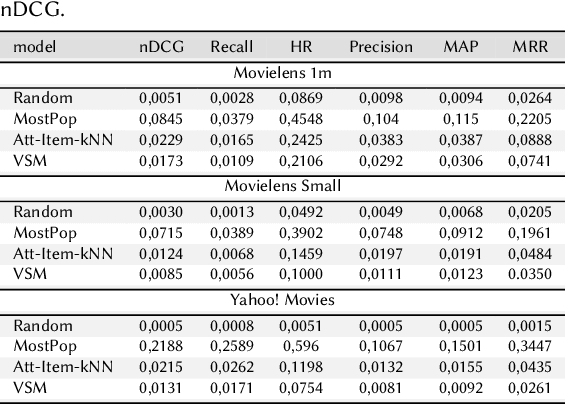

Explainable Recommendation has attracted a lot of attention due to a renewed interest in explainable artificial intelligence. In particular, post-hoc approaches have proved to be the most easily applicable ones to increasingly complex recommendation models, which are then treated as black-boxes. The most recent literature has shown that for post-hoc explanations based on local surrogate models, there are problems related to the robustness of the approach itself. This consideration becomes even more relevant in human-related tasks like recommendation. The explanation also has the arduous task of enhancing increasingly relevant aspects of user experience such as transparency or trustworthiness. This paper aims to show how the characteristics of a classical post-hoc model based on surrogates is strongly model-dependent and does not prove to be accountable for the explanations generated.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge