Adaptive Projected Residual Networks for Learning Parametric Maps from Sparse Data

Paper and Code

Dec 14, 2021

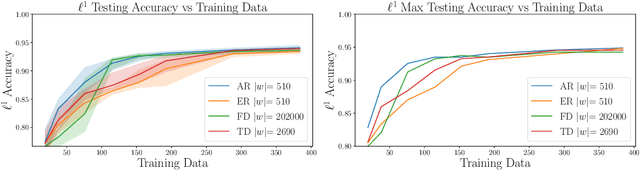

We present a parsimonious surrogate framework for learning high dimensional parametric maps from limited training data. The need for parametric surrogates arises in many applications that require repeated queries of complex computational models. These applications include such "outer-loop" problems as Bayesian inverse problems, optimal experimental design, and optimal design and control under uncertainty, as well as real time inference and control problems. Many high dimensional parametric mappings admit low dimensional structure, which can be exploited by mapping-informed reduced bases of the inputs and outputs. Exploiting this property, we develop a framework for learning low dimensional approximations of such maps by adaptively constructing ResNet approximations between reduced bases of their inputs and output. Motivated by recent approximation theory for ResNets as discretizations of control flows, we prove a universal approximation property of our proposed adaptive projected ResNet framework, which motivates a related iterative algorithm for the ResNet construction. This strategy represents a confluence of the approximation theory and the algorithm since both make use of sequentially minimizing flows. In numerical examples we show that these parsimonious, mapping-informed architectures are able to achieve remarkably high accuracy given few training data, making them a desirable surrogate strategy to be implemented for minimal computational investment in training data generation.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge