A Multi-Head Ensemble Multi-Task Learning Approach for Dynamical Computation Offloading


Sep 02, 2023

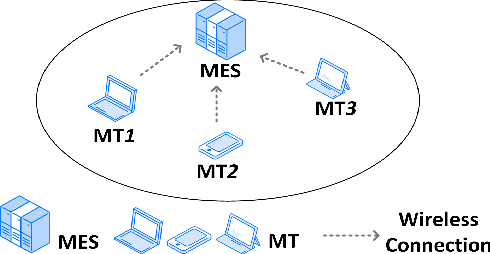

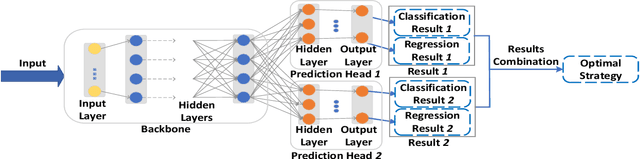

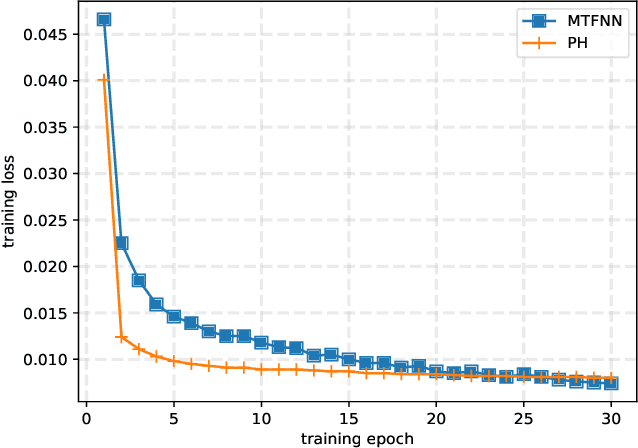

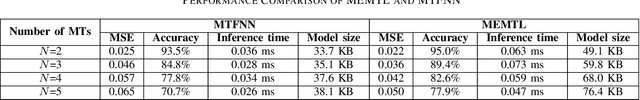

Computation offloading has become a popular way to support computationally intensive and latency-sensitive applications: computing tasks are transferred to mobile edge servers (MESs) for execution, a paradigm known as mobile/multi-access edge computing (MEC). To improve MEC performance, an optimal offloading strategy must be designed that covers both the offloading decision (i.e., whether to offload or not) and the allocation of MEC computational resources. This design can be formulated as a mixed-integer nonlinear programming (MINLP) problem, which is generally NP-hard; an effective solution can be obtained by online inference with a well-trained deep neural network (DNN) model. However, when the system environment changes dynamically, the input parameters drift, and the DNN model may lose efficacy because it generalizes poorly to the shifted inputs. To address this challenge, we propose a multi-head ensemble multi-task learning (MEMTL) approach with a shared backbone and multiple prediction heads (PHs). Specifically, the shared backbone remains fixed while the PHs are trained, and the results inferred by the PHs are ensembled, which significantly reduces the required training overhead and improves inference performance. As a result, the joint optimization problem for offloading decision and resource allocation can be solved efficiently even in a time-varying wireless environment. Experimental results show that the proposed MEMTL outperforms benchmark methods in both inference accuracy and mean square error without requiring additional training data.
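The structure described in the abstract (a shared backbone that stays fixed, several prediction heads trained separately, and an ensemble over the head outputs) can be illustrated with a minimal sketch. This is not the authors' implementation: the class `MultiHeadEnsemble`, the layer sizes, the tanh/sigmoid activations, the squared-error update, and the averaging rule are all assumptions chosen for illustration, and the input values are made up.

```python
import math
import random


def make_matrix(rows, cols, rng):
    """Random weight matrix (hypothetical initialization)."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


class MultiHeadEnsemble:
    """Illustrative shared backbone with several prediction heads (PHs)."""

    def __init__(self, in_dim, hidden_dim, out_dim, num_heads, seed=0):
        rng = random.Random(seed)
        # Shared backbone: never updated by train_head (assumed frozen).
        self.backbone = make_matrix(hidden_dim, in_dim, rng)
        # One independent linear head per PH.
        self.heads = [make_matrix(out_dim, hidden_dim, rng)
                      for _ in range(num_heads)]

    def features(self, x):
        return [math.tanh(v) for v in matvec(self.backbone, x)]

    def head_outputs(self, x):
        h = self.features(x)
        return [[sigmoid(z) for z in matvec(W, h)] for W in self.heads]

    def predict(self, x):
        """Ensemble by averaging the head outputs (assumed ensembling rule)."""
        outs = self.head_outputs(x)
        return [sum(o[j] for o in outs) / len(outs)
                for j in range(len(outs[0]))]

    def train_head(self, k, x, target, lr=0.5):
        """One squared-error gradient step on head k; backbone is untouched."""
        h = self.features(x)
        for j, row in enumerate(self.heads[k]):
            y = sigmoid(sum(w * hi for w, hi in zip(row, h)))
            grad_z = 2.0 * (y - target[j]) * y * (1.0 - y)
            for i in range(len(row)):
                row[i] -= lr * grad_z * h[i]


# Hypothetical usage: 3 input parameters, one offloading score per user.
model = MultiHeadEnsemble(in_dim=3, hidden_dim=8, out_dim=3, num_heads=5)
x = [0.2, 0.9, 0.4]                     # made-up input parameters
scores = model.predict(x)               # ensembled scores in (0, 1)
decisions = [int(s > 0.5) for s in scores]  # 1 = offload, 0 = local
```

Because only the small heads are updated, adapting to a changed environment in this sketch touches far fewer parameters than retraining the whole network, which is the intuition behind the reduced training overhead claimed above.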
