Zu-Yun Shiau

TAX: Tendency-and-Assignment Explainer for Semantic Segmentation with Multi-Annotators

Feb 19, 2023

Abstract: To understand how deep neural networks make classification predictions, recent research has focused on developing techniques that offer desirable explanations. However, most existing methods cannot be easily applied to semantic segmentation; moreover, they are not designed to offer interpretability under the multi-annotator setting. Instead of assuming that ground-truth pixel-level labels come from a single annotator with a consistent labeling tendency, we aim to provide interpretable semantic segmentation and answer two critical yet practical questions: "who" contributes to the resulting segmentation, and "why" such an assignment is determined. In this paper, we present a learning framework, the Tendency-and-Assignment Explainer (TAX), designed to offer interpretability at the annotator and assignment levels. More specifically, we learn convolution kernel subsets for modeling the labeling tendencies of each type of annotation, while a prototype bank is jointly observed to offer visual guidance for learning the above kernels. For evaluation, we consider both synthetic and real-world datasets with multiple annotators. We show that TAX can be applied to state-of-the-art network architectures with comparable performance, while offering segmentation interpretability at both levels.

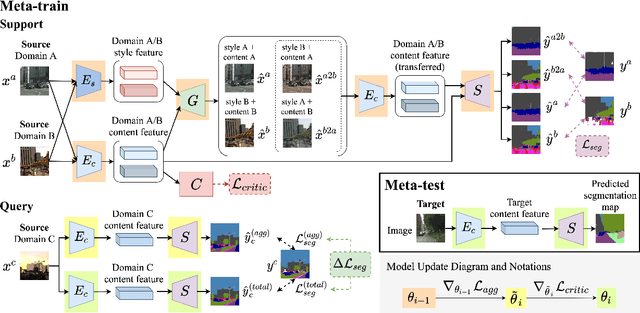

Meta-Learned Feature Critics for Domain Generalized Semantic Segmentation

Dec 27, 2021

Abstract: How to handle domain shifts when recognizing or segmenting visual data across domains has been studied by the learning and vision communities. In this paper, we address domain generalized semantic segmentation, in which the segmentation model is trained on multiple source domains and is expected to generalize to unseen data domains. We propose a novel meta-learning scheme with feature disentanglement ability, which derives domain-invariant features for semantic segmentation with domain generalization guarantees. In particular, we introduce a class-specific feature critic module in our framework, which enforces domain generalization guarantees on the disentangled visual features. Finally, our quantitative results on benchmark datasets confirm the effectiveness and robustness of our proposed model, which performs favorably against state-of-the-art domain adaptation and generalization methods in segmentation.
