Ziyi Zhong

E-Sports Talent Scouting Based on Multimodal Twitch Stream Data

Jul 02, 2019

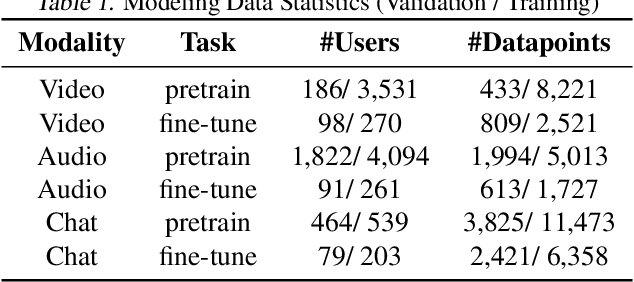

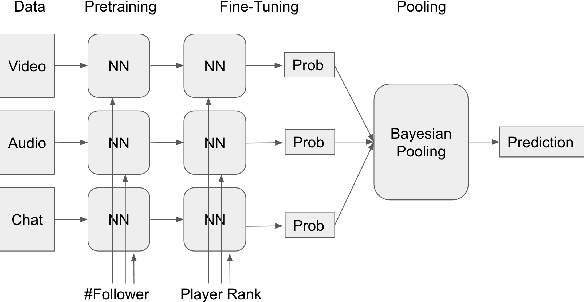

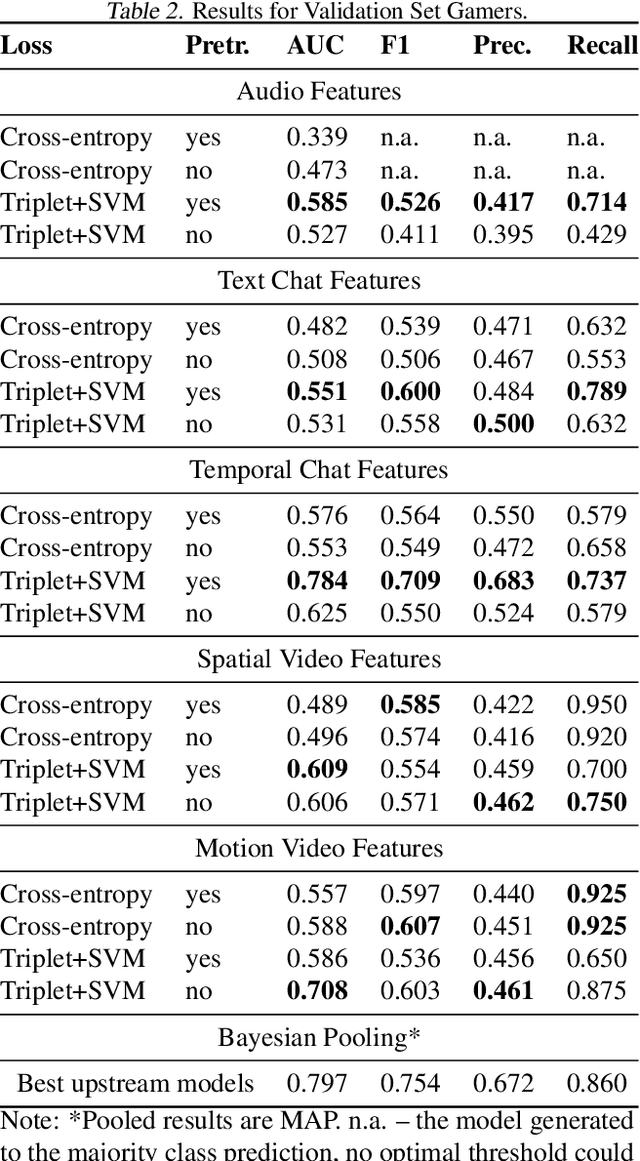

Abstract: We propose and investigate the feasibility of a novel task: finding e-sports talent using multimodal Twitch chat and video stream data. Specifically, we focus on predicting the ranks of Counter-Strike: Global Offensive (CS:GO) gamers who broadcast their games on Twitch. Between January 2019 and April 2019, we built two Twitch stream collections: one for 425 publicly ranked CS:GO gamers and one for 9,928 unranked CS:GO gamers. We extract neural features from video, audio, and text chat data and estimate modality-specific probabilities that a gamer is top-ranked during the data collection time frame. A hierarchical Bayesian model then pools the evidence across modalities and generates estimates of intrinsic skill for each gamer. We validate our modeling by correlating the intrinsic skill predictions with the May 2019 ranks of the publicly profiled gamers.
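The pooling and validation steps described in the abstract can be illustrated with a minimal sketch. It is not the paper's hierarchical Bayesian model: as a plainly simpler stand-in, it averages the log-odds of the modality-specific probabilities, then computes a Spearman rank correlation against later ranks. The gamer names, probabilities, ranks, and all function names are hypothetical.

```python
import math

def logit(p):
    """Log-odds of a probability strictly between 0 and 1."""
    return math.log(p / (1.0 - p))

def pooled_skill(probs):
    """Mean log-odds across modalities (a simple stand-in for Bayesian pooling)."""
    return sum(logit(p) for p in probs) / len(probs)

def ranks(values):
    """Rank 1 = smallest value; this sketch assumes there are no ties."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, i in enumerate(order, start=1):
        result[i] = rank
    return result

def spearman(x, y):
    """Spearman rank correlation for tie-free data."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1.0 - 6.0 * d2 / (n * (n * n - 1))

# Hypothetical per-gamer probabilities of being top-ranked: [video, audio, chat].
gamers = {
    "a": [0.9, 0.8, 0.7],
    "b": [0.6, 0.5, 0.6],
    "c": [0.2, 0.3, 0.4],
    "d": [0.1, 0.2, 0.1],
}
# Hypothetical later ranks, where 1 is the best rank.
later_rank = {"a": 1, "b": 2, "c": 3, "d": 4}

names = sorted(gamers)
skill = [pooled_skill(gamers[n]) for n in names]
rho = spearman(skill, [later_rank[n] for n in names])
print(rho)
```

Because rank 1 is the best rank, a strongly negative correlation here means that higher pooled skill goes with a better later rank.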

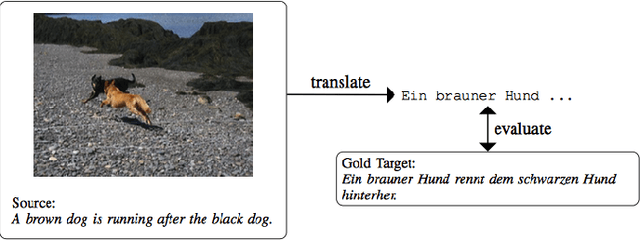

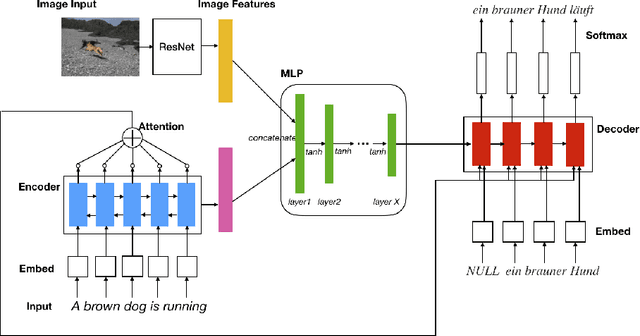

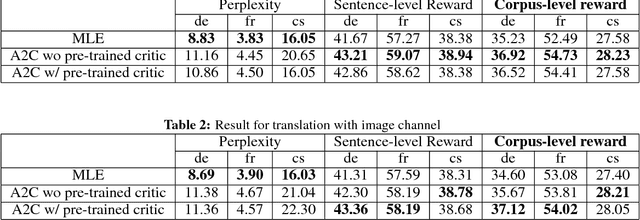

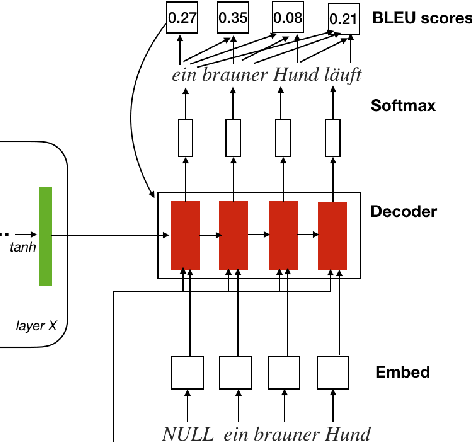

Multimodal Machine Translation with Reinforcement Learning

May 07, 2018

Abstract: Multimodal machine translation is an application that integrates computer vision and language processing. It is a distinctive task because, in the field of machine translation, many state-of-the-art algorithms still employ only textual information. In this work, we explore the effectiveness of reinforcement learning in multimodal machine translation. We present a novel algorithm based on the Advantage Actor-Critic (A2C) algorithm that specifically caters to the multimodal machine translation task of the EMNLP 2018 Third Conference on Machine Translation (WMT18). We evaluate our proposed algorithm on the Multi30K multilingual English-German image description dataset and the Flickr30K image entity dataset. Our model takes two input channels, image and text, uses translation evaluation metrics as training rewards, and achieves better results than supervised MLE baseline models. Furthermore, we discuss the prospects and limitations of using reinforcement learning for machine translation. Our experimental results suggest a promising reinforcement learning solution to the general task of multimodal sequence-to-sequence learning.
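To make the idea of using a translation metric as a training reward concrete, here is a minimal sketch of the generic advantage actor-critic loss terms for one sampled translation. It is not the paper's algorithm: the reward is a clipped unigram precision standing in for a real evaluation metric, and the sentences, token probabilities, critic estimate, and function names are all hypothetical.

```python
import math
from collections import Counter

def unigram_precision(hyp, ref):
    """Clipped unigram precision; a toy stand-in for a translation metric."""
    hyp_counts, ref_counts = Counter(hyp), Counter(ref)
    matched = sum(min(c, ref_counts[tok]) for tok, c in hyp_counts.items())
    return matched / len(hyp)

def a2c_losses(log_probs, reward, value_estimate):
    """Actor and critic losses for a single sampled sequence.

    advantage = reward - value_estimate. In a real implementation the
    advantage is treated as a constant when differentiating the actor loss.
    """
    advantage = reward - value_estimate
    actor_loss = -advantage * sum(log_probs)
    critic_loss = advantage ** 2
    return actor_loss, critic_loss

# Hypothetical sampled translation and reference.
hyp = "ein hund läuft".split()
ref = "ein hund rennt".split()
reward = unigram_precision(hyp, ref)

# Hypothetical per-token probabilities under the policy, and a critic estimate.
log_probs = [math.log(0.5)] * len(hyp)
actor_loss, critic_loss = a2c_losses(log_probs, reward, value_estimate=0.5)
print(reward, actor_loss, critic_loss)
```

When the reward exceeds the critic's estimate, minimizing the actor loss raises the log-probability of the sampled tokens; when it falls short, the same term lowers it.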
