Zijie Lu

On Positional and Structural Node Features for Graph Neural Networks on Non-attributed Graphs

Jul 03, 2021

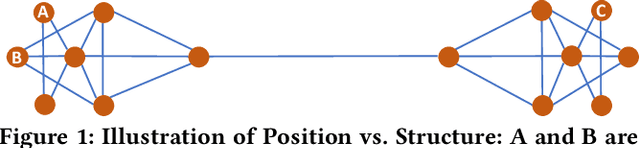

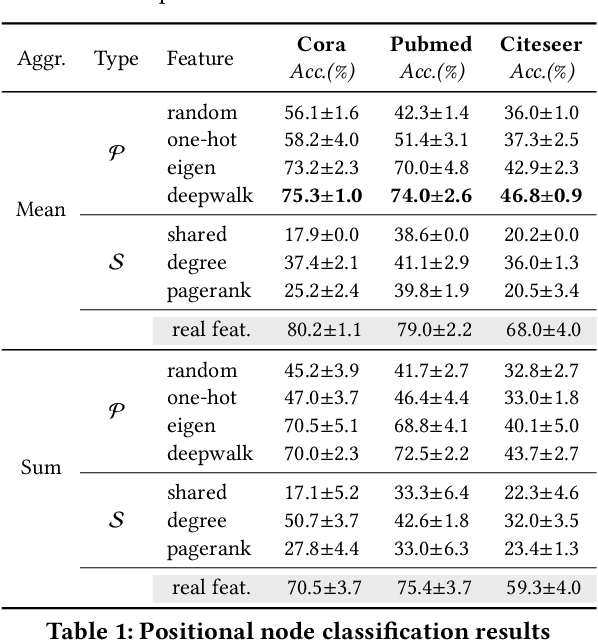

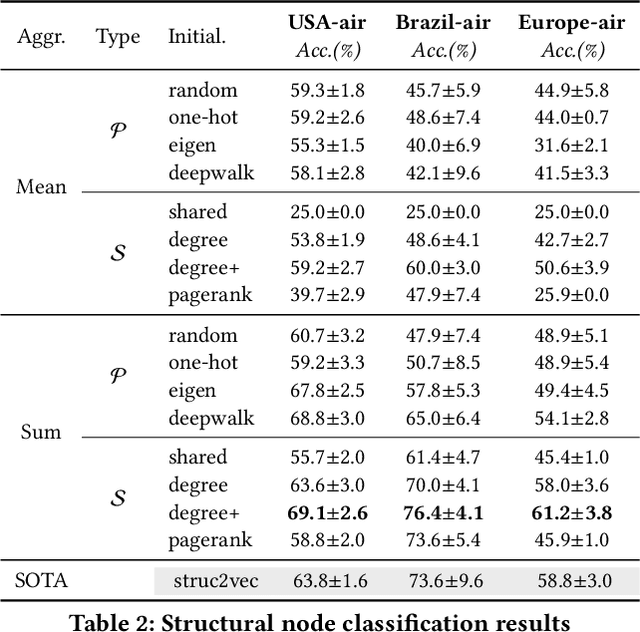

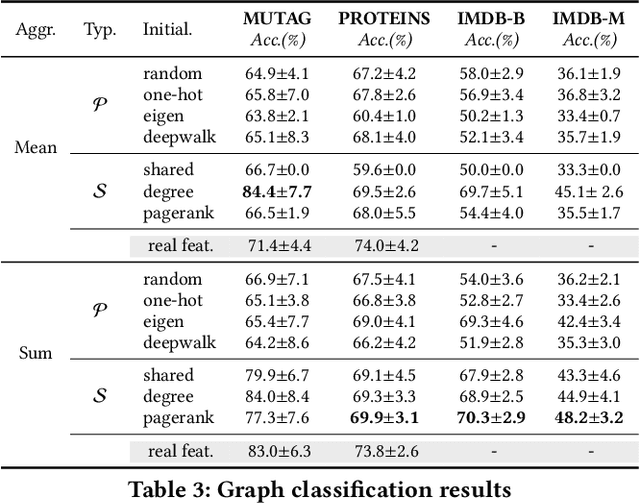

Abstract: Graph neural networks (GNNs) have been widely used in various graph-related problems such as node classification and graph classification, where the superior performance is mainly established when natural node features are available. However, it is not well understood how GNNs work without natural node features, especially regarding the various ways to construct artificial ones. In this paper, we point out the two types of artificial node features, i.e., positional and structural node features, and provide insights on why each of them is more appropriate for certain tasks, i.e., positional node classification, structural node classification, and graph classification. Extensive experimental results on 10 benchmark datasets validate our insights, thus leading to a practical guideline on the choices between different artificial node features for GNNs on non-attributed graphs. The code is available at https://github.com/zjzijielu/gnn-exp/.

Analysis of Bag-of-n-grams Representation's Properties Based on Textual Reconstruction

Sep 18, 2018

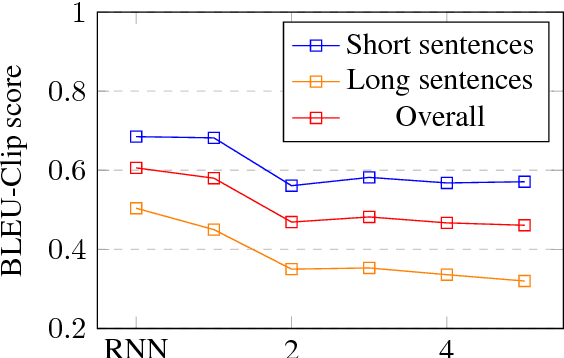

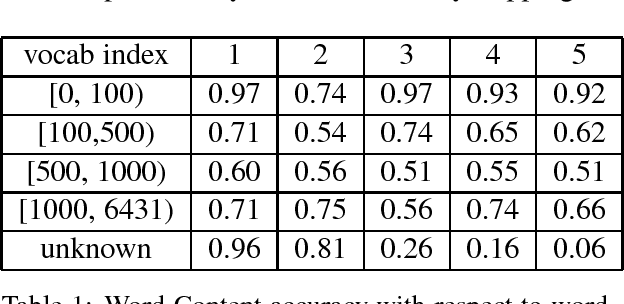

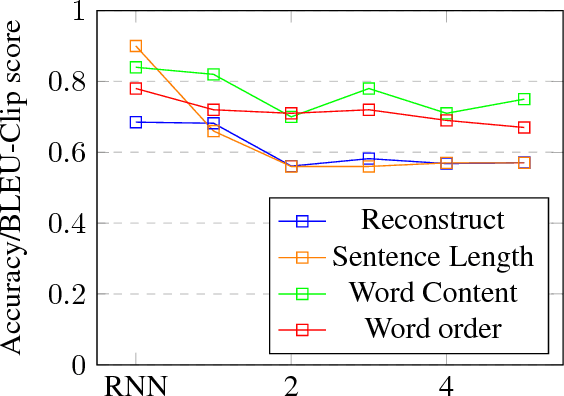

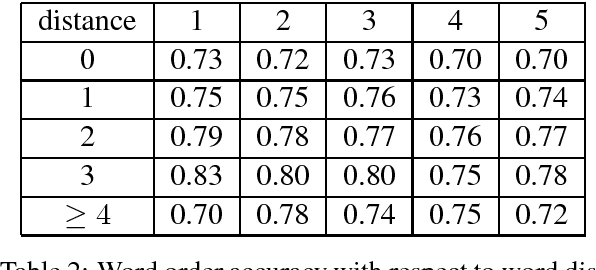

Abstract: Despite its simplicity, bag-of-n-grams sentence representation has been found to excel in some NLP tasks. However, it has not received much attention in recent years and further analysis on its properties is necessary. We propose a framework to investigate the amount and type of information captured in a general-purposed bag-of-n-grams sentence representation. We first use sentence reconstruction as a tool to obtain bag-of-n-grams representation that contains general information of the sentence. We then run prediction tasks (sentence length, word content, phrase content and word order) using the obtained representation to look into the specific type of information captured in the representation. Our analysis demonstrates that bag-of-n-grams representation does contain sentence structure level information. However, incorporating n-grams with higher order n empirically helps little with encoding more information in general, except for phrase content information.
