Zhongzheng Huang

Class-Specific Distribution Alignment for Semi-Supervised Medical Image Classification

Jul 29, 2023

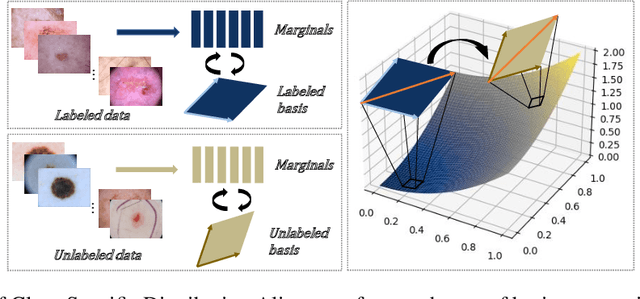

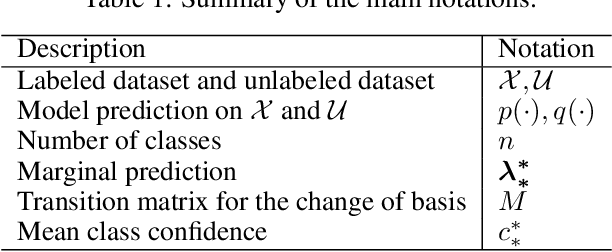

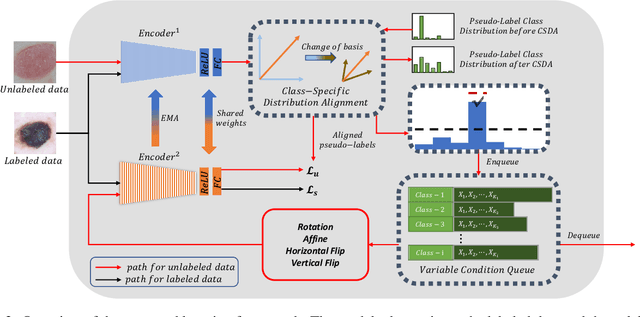

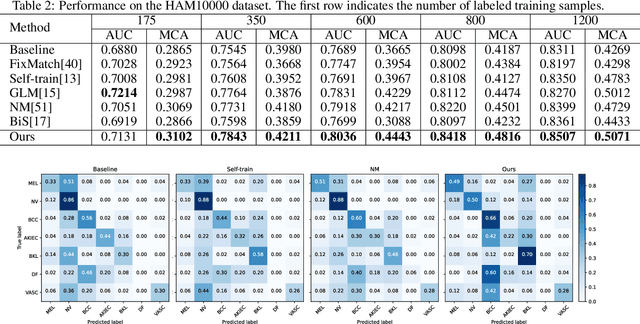

Abstract:Despite the success of deep neural networks in medical image classification, the problem remains challenging as data annotation is time-consuming, and the class distribution is imbalanced due to the relative scarcity of diseases. To address this problem, we propose Class-Specific Distribution Alignment (CSDA), a semi-supervised learning framework based on self-training that is suitable to learn from highly imbalanced datasets. Specifically, we first provide a new perspective to distribution alignment by considering the process as a change of basis in the vector space spanned by marginal predictions, and then derive CSDA to capture class-dependent marginal predictions on both labeled and unlabeled data, in order to avoid the bias towards majority classes. Furthermore, we propose a Variable Condition Queue (VCQ) module to maintain a proportionately balanced number of unlabeled samples for each class. Experiments on three public datasets HAM10000, CheXpert and Kvasir show that our method provides competitive performance on semi-supervised skin disease, thoracic disease, and endoscopic image classification tasks.

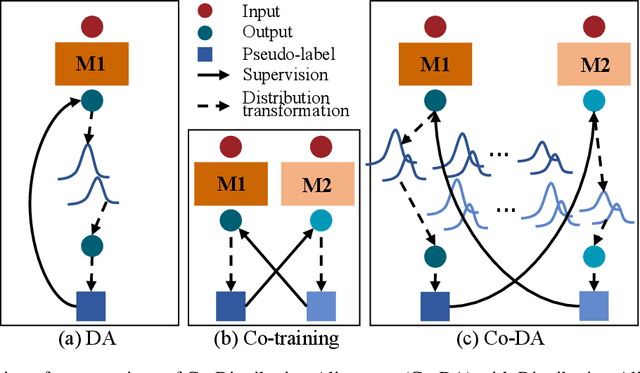

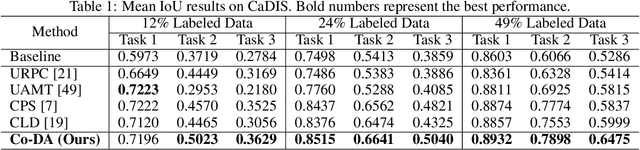

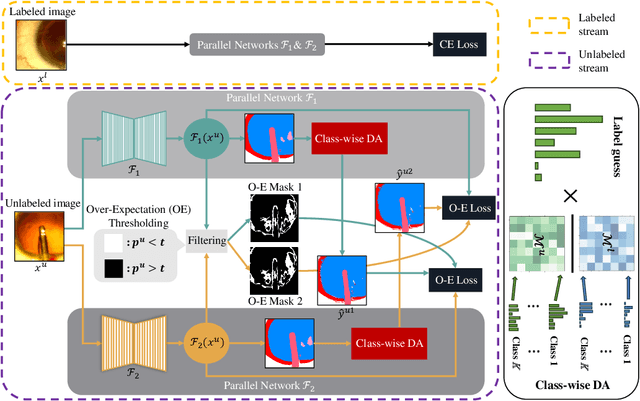

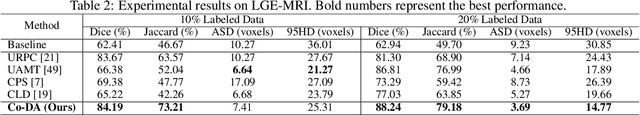

Semi-Supervised Medical Image Segmentation with Co-Distribution Alignment

Jul 24, 2023

Abstract:Medical image segmentation has made significant progress when a large amount of labeled data are available. However, annotating medical image segmentation datasets is expensive due to the requirement of professional skills. Additionally, classes are often unevenly distributed in medical images, which severely affects the classification performance on minority classes. To address these problems, this paper proposes Co-Distribution Alignment (Co-DA) for semi-supervised medical image segmentation. Specifically, Co-DA aligns marginal predictions on unlabeled data to marginal predictions on labeled data in a class-wise manner with two differently initialized models before using the pseudo-labels generated by one model to supervise the other. Besides, we design an over-expectation cross-entropy loss for filtering the unlabeled pixels to reduce noise in their pseudo-labels. Quantitative and qualitative experiments on three public datasets demonstrate that the proposed approach outperforms existing state-of-the-art semi-supervised medical image segmentation methods on both the 2D CaDIS dataset and the 3D LGE-MRI and ACDC datasets, achieving an mIoU of 0.8515 with only 24% labeled data on CaDIS, and a Dice score of 0.8824 and 0.8773 with only 20% data on LGE-MRI and ACDC, respectively.

A Multi-Scale Framework for Out-of-Distribution Detection in Dermoscopic Images

Jan 18, 2023Abstract:The automatic detection of skin diseases via dermoscopic images can improve the efficiency in diagnosis and help doctors make more accurate judgments. However, conventional skin disease recognition systems may produce high confidence for out-of-distribution (OOD) data, which may become a major security vulnerability in practical applications. In this paper, we propose a multi-scale detection framework to detect out-of-distribution skin disease image data to ensure the robustness of the system. Our framework extracts features from different layers of the neural network. In the early layers, rectified activation is used to make the output features closer to the well-behaved distribution, and then an one-class SVM is trained to detect OOD data; in the penultimate layer, an adapted Gram matrix is used to calculate the features after rectified activation, and finally the layer with the best performance is chosen to compute a normality score. Experiments show that the proposed framework achieves superior performance when compared with other state-of-the-art methods in the task of skin disease recognition.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge