Zhixin Huang

Spatio-Temporal Attention Graph Neural Network for Remaining Useful Life Prediction

Jan 29, 2024

Abstract: Remaining useful life prediction plays a crucial role in the health management of industrial systems. Given the increasing complexity of systems, data-driven predictive models have attracted significant research interest. Upon reviewing the existing literature, it appears that many studies either do not fully integrate both spatial and temporal features or employ only a single attention mechanism. Furthermore, there seems to be inconsistency in the choice of data normalization methods, particularly concerning operating conditions, which might influence predictive performance. To bridge these observations, this study presents the Spatio-Temporal Attention Graph Neural Network. Our model combines graph neural networks and temporal convolutional neural networks for spatial and temporal feature extraction, respectively. The cascade of these extractors, combined with multi-head attention mechanisms for both the spatial and temporal dimensions, aims to improve predictive precision and refine model explainability. Comprehensive experiments were conducted on the C-MAPSS dataset to evaluate the impact of unified versus clustering normalization. The findings suggest that our model achieves state-of-the-art results using only the unified normalization. Additionally, when dealing with datasets with multiple operating conditions, cluster normalization enhances the performance of our proposed model by up to 27%.
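The difference between unified and clustering normalization can be illustrated with a minimal sketch. It assumes z-score scaling and assumes that an operating-condition label is already available for every time step; the abstract does not state the scaling method or how conditions are identified. The function names `unified_normalize` and `cluster_normalize` are hypothetical and are not taken from the paper.

```python
import numpy as np


def unified_normalize(x):
    """Z-score every sensor column using statistics of the whole dataset."""
    mean = x.mean(axis=0)
    std = x.std(axis=0)
    # Constant sensors have zero spread; divide by 1 to avoid NaNs.
    std = np.where(std == 0, 1.0, std)
    return (x - mean) / std


def cluster_normalize(x, condition_ids):
    """Z-score every sensor column separately within each operating condition.

    condition_ids is an assumed input: one integer label per row of x.
    """
    out = np.empty_like(x, dtype=float)
    for c in np.unique(condition_ids):
        mask = condition_ids == c
        out[mask] = unified_normalize(x[mask])
    return out


# Toy data: two sensors recorded under two operating conditions
# whose value ranges differ strongly.
x = np.array([[1.0, 10.0], [3.0, 14.0], [101.0, 50.0], [105.0, 58.0]])
condition_ids = np.array([0, 0, 1, 1])

unified = unified_normalize(x)
clustered = cluster_normalize(x, condition_ids)
```

In the unified result, the offset between the two operating conditions dominates the scaled values, whereas in the clustered result each condition is centered on its own mean, so the remaining variation reflects changes within a condition.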

Fast Bayesian Updates for Deep Learning with a Use Case in Active Learning

Oct 12, 2022

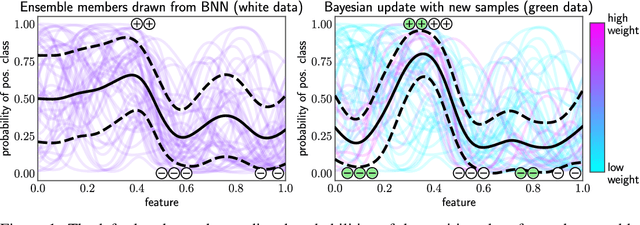

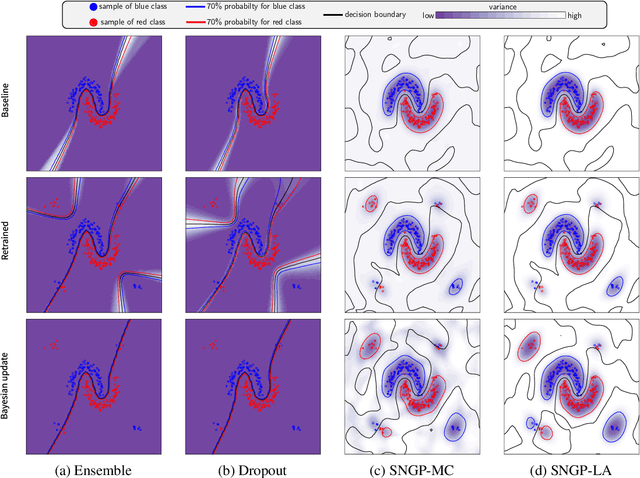

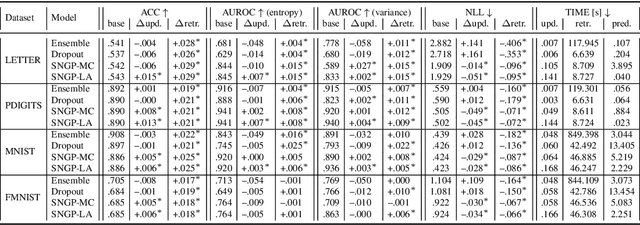

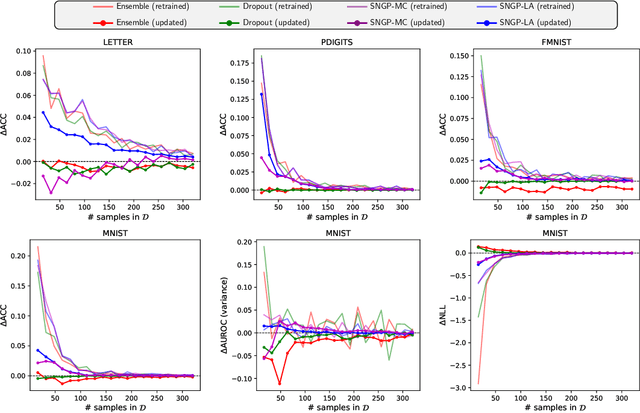

Abstract: Retraining deep neural networks when new data arrives is typically computationally expensive. Moreover, certain applications do not allow such costly retraining due to time or computational constraints. Fast Bayesian updates are a possible solution to this issue. Therefore, we propose a Bayesian update based on Monte-Carlo samples and a last-layer Laplace approximation for different Bayesian neural network types, i.e., Dropout, Ensemble, and Spectral Normalized Neural Gaussian Process (SNGP). In a large-scale evaluation study, we show that our updates combined with SNGP represent a fast and competitive alternative to costly retraining. As a use case, we combine the Bayesian updates for SNGP with different sequential query strategies to demonstrate, by way of example, their improved selection performance in active learning.
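To give an intuition for how an update can avoid retraining, the sketch below applies Bayes' rule to a fixed set of Monte-Carlo samples (for example, ensemble members or dropout masks): each sample is reweighted by the likelihood it assigns to a newly observed label. This is an illustrative assumption about what a sample-based update can look like, not the paper's exact procedure, and it does not cover the last-layer Laplace variant. The function names `update_member_weights` and `predictive` are hypothetical.

```python
import numpy as np


def update_member_weights(weights, member_probs_new, y_new):
    """Reweight Monte-Carlo samples after observing one labeled instance.

    weights:          shape (M,), current weights of the M samples (sum to 1).
    member_probs_new: shape (M, C), class probabilities that each sample
                      predicts for the new instance.
    y_new:            observed class index of the new instance.
    """
    likelihood = member_probs_new[:, y_new]
    posterior = weights * likelihood  # Bayes' rule, unnormalized
    return posterior / posterior.sum()


def predictive(weights, member_probs):
    """Weighted average of the samples' class probabilities, shape (C,)."""
    return weights @ member_probs


# Two samples with equal prior weight; the first explains the new label better.
prior = np.array([0.5, 0.5])
probs_new = np.array([[0.9, 0.1], [0.2, 0.8]])
posterior = update_member_weights(prior, probs_new, y_new=0)
updated_prediction = predictive(posterior, probs_new)
```

No gradient step is taken here: only the weights of the existing samples change, which is why such an update is cheap compared with retraining the network.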

Design of Explainability Module with Experts in the Loop for Visualization and Dynamic Adjustment of Continual Learning

Feb 14, 2022

Abstract: Continual learning can enable neural networks to evolve by learning new tasks sequentially in task-changing scenarios. However, two general and related challenges should be overcome in further research before we apply this technique to real-world applications. Firstly, newly collected novelties from the data stream in applications could contain anomalies that are meaningless for continual learning. Instead of viewing them as a new task for updating, we have to filter out such anomalies to reduce the disturbance that extremely high-entropy data causes to the progression of convergence. Secondly, little effort has been put into research on the explainability of continual learning, which leads to a lack of transparency and credibility of the updated neural networks. Detailed explanations of the process and result of continual learning can help experts make judgments and decisions. Therefore, we propose the conceptual design of an explainability module with experts in the loop based on techniques such as dimension reduction, visualization, and evaluation strategies. This work aims to overcome the mentioned challenges by sufficiently explaining and visualizing the identified anomalies and the updated neural network. With the help of this module, experts can be more confident in decision-making regarding anomaly filtering, dynamic adjustment of hyperparameters, data backup, etc.
