Zhishe Wang

SwinFuse: A Residual Swin Transformer Fusion Network for Infrared and Visible Images

Apr 25, 2022

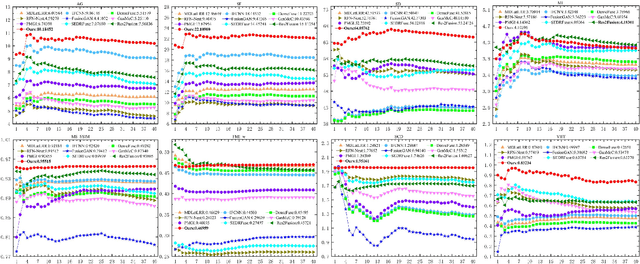

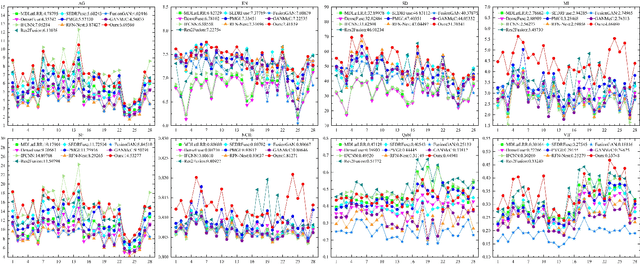

Abstract:The existing deep learning fusion methods mainly concentrate on the convolutional neural networks, and few attempts are made with transformer. Meanwhile, the convolutional operation is a content-independent interaction between the image and convolution kernel, which may lose some important contexts and further limit fusion performance. Towards this end, we present a simple and strong fusion baseline for infrared and visible images, namely\textit{ Residual Swin Transformer Fusion Network}, termed as SwinFuse. Our SwinFuse includes three parts: the global feature extraction, fusion layer and feature reconstruction. In particular, we build a fully attentional feature encoding backbone to model the long-range dependency, which is a pure transformer network and has a stronger representation ability compared with the convolutional neural networks. Moreover, we design a novel feature fusion strategy based on $L_{1}$-norm for sequence matrices, and measure the corresponding activity levels from row and column vector dimensions, which can well retain competitive infrared brightness and distinct visible details. Finally, we testify our SwinFuse with nine state-of-the-art traditional and deep learning methods on three different datasets through subjective observations and objective comparisons, and the experimental results manifest that the proposed SwinFuse obtains surprising fusion performance with strong generalization ability and competitive computational efficiency. The code will be available at https://github.com/Zhishe-Wang/SwinFuse.

Infrared and Visible Image Fusion via Interactive Compensatory Attention Adversarial Learning

Mar 29, 2022

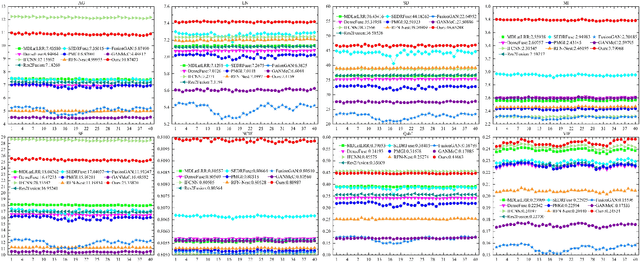

Abstract:The existing generative adversarial fusion methods generally concatenate source images and extract local features through convolution operation, without considering their global characteristics, which tends to produce an unbalanced result and is biased towards the infrared image or visible image. Toward this end, we propose a novel end-to-end mode based on generative adversarial training to achieve better fusion balance, termed as \textit{interactive compensatory attention fusion network} (ICAFusion). In particular, in the generator, we construct a multi-level encoder-decoder network with a triple path, and adopt infrared and visible paths to provide additional intensity and gradient information. Moreover, we develop interactive and compensatory attention modules to communicate their pathwise information, and model their long-range dependencies to generate attention maps, which can more focus on infrared target perception and visible detail characterization, and further increase the representation power for feature extraction and feature reconstruction. In addition, dual discriminators are designed to identify the similar distribution between fused result and source images, and the generator is optimized to produce a more balanced result. Extensive experiments illustrate that our ICAFusion obtains superior fusion performance and better generalization ability, which precedes other advanced methods in the subjective visual description and objective metric evaluation. Our codes will be public at \url{https://github.com/Zhishe-Wang/ICAFusion}

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge