Zhichao Zhu

Stochastic Forward-Forward Learning through Representational Dimensionality Compression

May 22, 2025Abstract:The Forward-Forward (FF) algorithm provides a bottom-up alternative to backpropagation (BP) for training neural networks, relying on a layer-wise "goodness" function to guide learning. Existing goodness functions, inspired by energy-based learning (EBL), are typically defined as the sum of squared post-synaptic activations, neglecting the correlations between neurons. In this work, we propose a novel goodness function termed dimensionality compression that uses the effective dimensionality (ED) of fluctuating neural responses to incorporate second-order statistical structure. Our objective minimizes ED for clamped inputs when noise is considered while maximizing it across the sample distribution, promoting structured representations without the need to prepare negative samples. We demonstrate that this formulation achieves competitive performance compared to other non-BP methods. Moreover, we show that noise plays a constructive role that can enhance generalization and improve inference when predictions are derived from the mean of squared outputs, which is equivalent to making predictions based on the energy term. Our findings contribute to the development of more biologically plausible learning algorithms and suggest a natural fit for neuromorphic computing, where stochasticity is a computational resource rather than a nuisance. The code is available at https://github.com/ZhichaoZhu/StochasticForwardForward

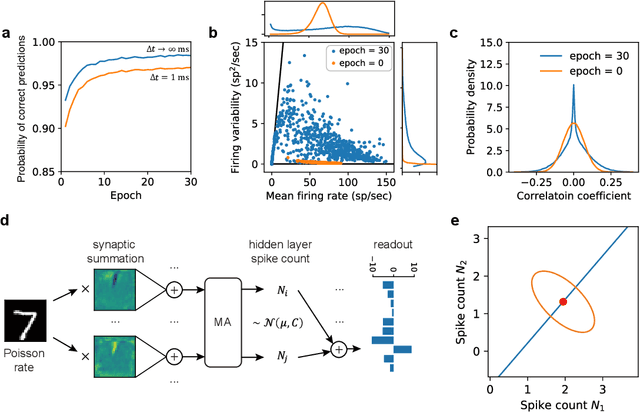

Towards free-response paradigm: a theory on decision-making in spiking neural networks

Apr 16, 2024Abstract:The energy-efficient and brain-like information processing abilities of Spiking Neural Networks (SNNs) have attracted considerable attention, establishing them as a crucial element of brain-inspired computing. One prevalent challenge encountered by SNNs is the trade-off between inference speed and accuracy, which requires sufficient time to achieve the desired level of performance. Drawing inspiration from animal behavior experiments that demonstrate a connection between decision-making reaction times, task complexity, and confidence levels, this study seeks to apply these insights to SNNs. The focus is on understanding how SNNs make inferences, with a particular emphasis on untangling the interplay between signal and noise in decision-making processes. The proposed theoretical framework introduces a new optimization objective for SNN training, highlighting the importance of not only the accuracy of decisions but also the development of predictive confidence through learning from past experiences. Experimental results demonstrate that SNNs trained according to this framework exhibit improved confidence expression, leading to better decision-making outcomes. In addition, a strategy is introduced for efficient decision-making during inference, which allows SNNs to complete tasks more quickly and can use stopping times as indicators of decision confidence. By integrating neuroscience insights with neuromorphic computing, this study opens up new possibilities to explore the capabilities of SNNs and advance their application in complex decision-making scenarios.

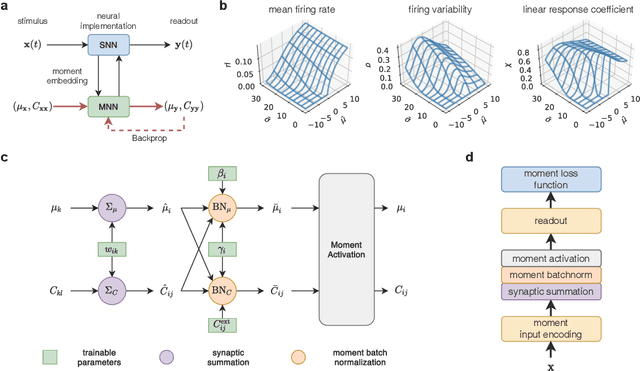

Learn to integrate parts for whole through correlated neural variability

Jan 01, 2024Abstract:Sensory perception originates from the responses of sensory neurons, which react to a collection of sensory signals linked to various physical attributes of a singular perceptual object. Unraveling how the brain extracts perceptual information from these neuronal responses is a pivotal challenge in both computational neuroscience and machine learning. Here we introduce a statistical mechanical theory, where perceptual information is first encoded in the correlated variability of sensory neurons and then reformatted into the firing rates of downstream neurons. Applying this theory, we illustrate the encoding of motion direction using neural covariance and demonstrate high-fidelity direction recovery by spiking neural networks. Networks trained under this theory also show enhanced performance in classifying natural images, achieving higher accuracy and faster inference speed. Our results challenge the traditional view of neural covariance as a secondary factor in neural coding, highlighting its potential influence on brain function.

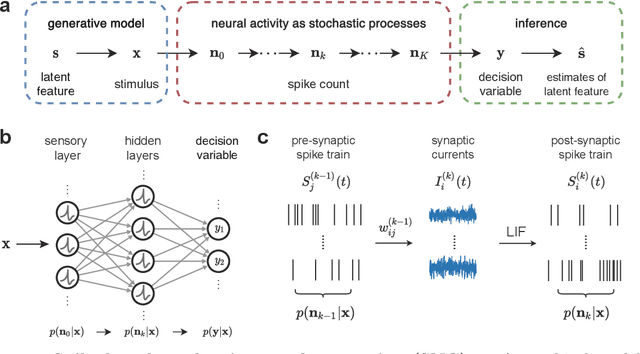

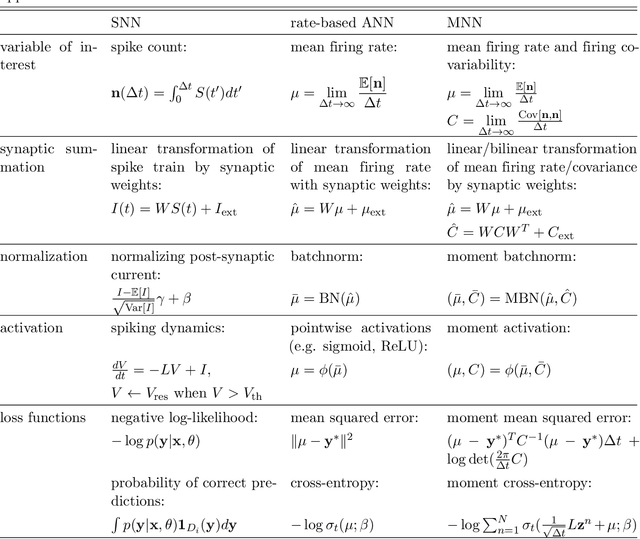

Toward spike-based stochastic neural computing

May 23, 2023

Abstract:Inspired by the highly irregular spiking activity of cortical neurons, stochastic neural computing is an attractive theory for explaining the operating principles of the brain and the ability to represent uncertainty by intelligent agents. However, computing and learning with high-dimensional joint probability distributions of spiking neural activity across large populations of neurons present as a major challenge. To overcome this, we develop a novel moment embedding approach to enable gradient-based learning in spiking neural networks accounting for the propagation of correlated neural variability. We show under the supervised learning setting a spiking neural network trained this way is able to learn the task while simultaneously minimizing uncertainty, and further demonstrate its application to neuromorphic hardware. Built on the principle of spike-based stochastic neural computing, the proposed method opens up new opportunities for developing machine intelligence capable of computing uncertainty and for designing unconventional computing architectures.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge