Zhenpeng Zheng

Adaptive Activation Network For Low Resource Multilingual Speech Recognition

May 28, 2022

Abstract: Low-resource automatic speech recognition (ASR) is a useful but thorny task, since deep learning ASR models usually need huge amounts of training data. Most existing models establish a bottleneck (BN) layer by pre-training on a large source language and then transferring it to the low-resource target language. In this work, we introduce an adaptive activation network into the upper layers of the ASR model and apply different activation functions to different languages. We also propose two approaches to train the model: (1) cross-lingual learning, which replaces the source-language activation function with the target-language one, and (2) multilingual learning, which jointly trains the Connectionist Temporal Classification (CTC) loss of each language and the relevance of different languages. Our experiments on the IARPA Babel datasets demonstrate that our approaches outperform from-scratch training and traditional bottleneck-feature-based methods. In addition, combining cross-lingual learning and multilingual learning further improves the performance of multilingual speech recognition.
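The idea of sharing layer weights while switching the activation function per language can be illustrated with a small sketch. This is not the paper's implementation: the leaky-ReLU form, the slope values, and the names `make_activation`, `ACTIVATIONS`, and `forward` are all assumptions chosen for illustration, since the abstract does not specify the activation's parametric form.

```python
# Illustrative only: a shared linear layer followed by a per-language activation.
# The leaky-ReLU form and the slope values are assumptions, not taken from the paper.

def linear(x, weights, bias):
    """Shared layer: one weight row per output unit."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]

def make_activation(negative_slope):
    """Return a leaky-ReLU-style activation with a language-specific slope."""
    def activation(values):
        return [v if v >= 0 else negative_slope * v for v in values]
    return activation

# Hypothetical per-language parameters; in a real model these would be learned.
ACTIVATIONS = {
    "source": make_activation(0.1),
    "target": make_activation(0.5),
}

def forward(x, weights, bias, language):
    """Apply the shared weights, then the activation selected by language."""
    return ACTIVATIONS[language](linear(x, weights, bias))

weights = [[1.0, -1.0], [0.5, 0.5]]
bias = [0.0, 0.0]
x = [1.0, 3.0]

src_out = forward(x, weights, bias, "source")
tgt_out = forward(x, weights, bias, "target")
```

In this sketch, moving from the source to the target language changes only the dictionary key passed to `forward`, which loosely mirrors the cross-lingual step of replacing the activation function while keeping the shared weights.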

MLNET: An Adaptive Multiple Receptive-field Attention Neural Network for Voice Activity Detection

Aug 13, 2020

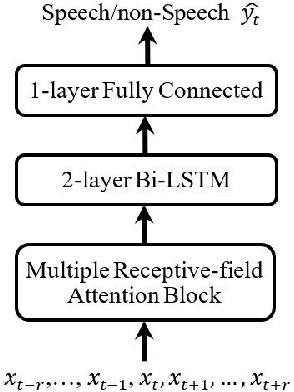

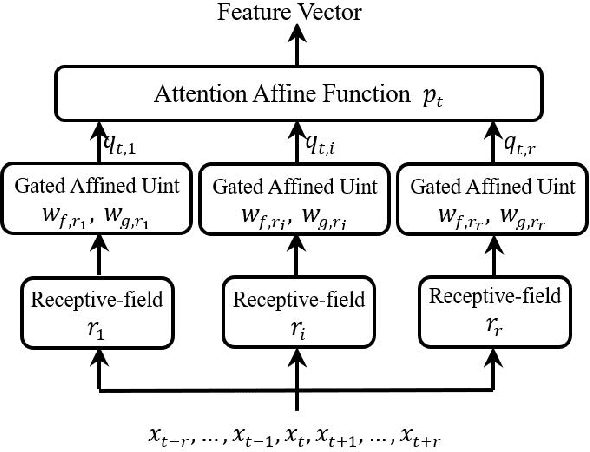

Abstract: Voice activity detection (VAD) distinguishes speech from non-speech, and its performance is of crucial importance for speech-based services. Recently, deep neural network (DNN)-based VADs have achieved better performance than conventional signal processing methods. Existing DNN-based models typically use a handcrafted, fixed window to exploit contextual speech information and improve VAD performance. However, a fixed context window cannot handle varied, unpredictable noise environments, nor can it highlight the speech information most critical to the VAD task. To solve this problem, this paper proposes an adaptive multiple receptive-field attention neural network, called MLNET, for the VAD task. MLNET uses multiple branches to extract multiple kinds of contextual speech information and an attention block to weight the most crucial parts of the context for the final classification. Experiments in real-world scenarios demonstrate that the proposed MLNET-based model outperforms the other baselines.
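A rough sketch of weighting several context windows with attention, instead of fixing a single window, is given below. It is a toy under stated assumptions, not MLNET itself: each branch is reduced to a mean over a window of scalar frame features, the attention score is simply a scaled copy of the branch output, and the names `context_mean`, `attend_over_branches`, and `score_weight` are hypothetical.

```python
import math

def context_mean(frames, center, half_width):
    """One branch: average the frames inside a window around `center`."""
    lo = max(0, center - half_width)
    hi = min(len(frames), center + half_width + 1)
    window = frames[lo:hi]
    return sum(window) / len(window)

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend_over_branches(frames, center, half_widths, score_weight=1.0):
    """Fuse branches with different receptive fields using attention weights.

    Assumption: the score of a branch is score_weight times its output;
    a real model would learn this scoring function.
    """
    branch_outputs = [context_mean(frames, center, h) for h in half_widths]
    scores = [score_weight * out for out in branch_outputs]
    weights = softmax(scores)
    fused = sum(w * out for w, out in zip(weights, branch_outputs))
    return fused, weights

# A single active frame surrounded by silence.
frames = [0.0, 0.0, 1.0, 0.0, 0.0]
fused, weights = attend_over_branches(frames, center=2, half_widths=(0, 1, 2))
```

For this input, the narrowest window gets the largest weight because widening the window dilutes the single active frame; with other inputs the weighting shifts accordingly, which is the adaptive behaviour a fixed window lacks.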
