Zheming Zuo

Uncertainty-Aware Dual-Ranking Strategy for Offline Data-Driven Multi-Objective Optimization

Nov 09, 2025

Abstract: Offline data-driven Multi-Objective Optimization Problems (MOPs) rely on limited data from simulations, experiments, or sensors. This scarcity leads to high epistemic uncertainty in surrogate predictions. Conventional surrogate methods such as Kriging assume Gaussian distributions, which can yield suboptimal results when those assumptions fail. To address these issues, we propose a simple yet novel dual-ranking strategy that works with a basic multi-objective evolutionary algorithm, NSGA-II: the built-in non-dominated sorting is kept, and a second rank is devised to account for uncertainty. For the latter, we use the uncertainty estimates given by several surrogate models, including Quantile Regression (QR), Monte Carlo Dropout (MCD), and Bayesian Neural Networks (BNNs). Concretely, with this dual-ranking strategy, each solution's final rank is the average of its non-dominated sorting rank and a rank derived from the uncertainty-adjusted fitness function, thus reducing the risk of misguided optimization under data constraints. We evaluate our approach on benchmark and real-world MOPs, comparing it to state-of-the-art methods. The results show that our dual-ranking strategy significantly improves the performance of NSGA-II in offline settings, achieving competitive outcomes compared with traditional surrogate-based methods. This framework advances uncertainty-aware multi-objective evolutionary algorithms, offering a robust solution for data-limited, real-world applications.
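The averaging of two ranks described in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the abstract does not say how the uncertainty-adjusted fitness is formed, so the sketch assumes a pessimistic bound (predicted mean plus `kappa` times predicted standard deviation, for minimization) that is then ranked by the same non-dominated sorting. The names `dual_ranks` and `kappa` are hypothetical.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a Pareto-dominates b (all objectives minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def non_dominated_ranks(objs: Sequence[Sequence[float]]) -> List[int]:
    """Front index per solution (1 = best), found by peeling fronts one at a time."""
    remaining = set(range(len(objs)))
    ranks = [0] * len(objs)
    front = 1
    while remaining:
        current = {
            i for i in remaining
            if not any(dominates(objs[j], objs[i]) for j in remaining if j != i)
        }
        for i in current:
            ranks[i] = front
        remaining -= current
        front += 1
    return ranks


def dual_ranks(means, stds, kappa: float = 1.0) -> List[float]:
    """Average of the plain rank and the rank under uncertainty-adjusted fitness.

    ASSUMPTION: adjusted fitness = mean + kappa * std; the abstract does not
    specify the adjustment, so this is only one plausible choice.
    """
    plain = non_dominated_ranks(means)
    adjusted = [
        tuple(m + kappa * s for m, s in zip(mu, sd)) for mu, sd in zip(means, stds)
    ]
    uncertain = non_dominated_ranks(adjusted)
    return [(p + u) / 2 for p, u in zip(plain, uncertain)]


# Surrogate predictions for four candidate solutions, two objectives each.
means = [(1.0, 4.0), (2.0, 2.0), (4.0, 1.0), (3.0, 3.0)]
# The first solution looks good on the mean but is highly uncertain.
stds = [(5.0, 5.0), (0.0, 0.0), (0.0, 0.0), (0.0, 0.0)]
final = dual_ranks(means, stds)
```

In this toy case the first solution is on the first front by its predicted means, but its large uncertainty pushes it to the last front under the adjusted fitness, so its averaged rank drops behind the two confidently predicted solutions.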

FedGA: Federated Learning with Gradient Alignment for Error Asymmetry Mitigation

Dec 21, 2024

Abstract: Federated learning (FL) triggers intra-client and inter-client class imbalance, with the latter, compared to the former, leading to biased client updates and thus deteriorating the distributed models. Such a bias is exacerbated during the server aggregation phase and has yet to be effectively addressed by conventional re-balancing methods. To this end, different from the off-the-shelf label or loss-based approaches, we propose a gradient alignment (GA)-informed FL method, dubbed FedGA, where the importance of error asymmetry (EA) in bias is observed and its linkage to the gradient of the loss with respect to the raw logits is explored. Concretely, GA, implemented by label calibration during the model backpropagation process, prevents catastrophic forgetting of rare and missing classes, hence boosting model convergence and accuracy. Experimental results on five benchmark datasets demonstrate that GA outperforms the pioneering counterpart FedAvg and its four variants in minimizing EA and updating bias, and accordingly yielding higher F1 score and accuracy margins when the Dirichlet distribution sampling factor $\alpha$ increases. The code and more details are available at \url{https://anonymous.4open.science/r/FedGA-B052/README.md}.
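For readers unfamiliar with the Dirichlet sampling factor mentioned in this abstract, the sketch below shows one common way to split a labelled dataset across clients with per-class Dirichlet proportions; a smaller $\alpha$ gives more skewed client label distributions. This is an assumed, generic partitioning scheme, not code from the FedGA repository, and the function names are hypothetical.

```python
import random
from typing import Dict, List, Sequence


def dirichlet_sample(alpha: float, k: int, rng: random.Random) -> List[float]:
    """Draw one symmetric Dirichlet(alpha) vector of length k via gamma variates."""
    draws = [rng.gammavariate(alpha, 1.0) for _ in range(k)]
    total = sum(draws)
    if total == 0.0:  # guard against numerical underflow for very small alpha
        return [1.0 / k] * k
    return [d / total for d in draws]


def partition_by_dirichlet(
    labels: Sequence[int], num_clients: int, alpha: float, seed: int = 0
) -> List[List[int]]:
    """Assign sample indices to clients, class by class, with Dirichlet shares."""
    rng = random.Random(seed)
    clients: List[List[int]] = [[] for _ in range(num_clients)]
    by_class: Dict[int, List[int]] = {}
    for idx, y in enumerate(labels):
        by_class.setdefault(y, []).append(idx)
    for _, idxs in sorted(by_class.items()):
        rng.shuffle(idxs)
        shares = dirichlet_sample(alpha, num_clients, rng)
        start, cumulative = 0, 0.0
        for c in range(num_clients):
            cumulative += shares[c]
            # The last client takes whatever is left so no sample is dropped.
            end = len(idxs) if c == num_clients - 1 else round(cumulative * len(idxs))
            end = min(len(idxs), max(end, start))
            clients[c].extend(idxs[start:end])
            start = end
    return clients


# 60 samples over 3 classes, split across 4 clients with a fairly skewed alpha.
labels = [i % 3 for i in range(60)]
parts = partition_by_dirichlet(labels, num_clients=4, alpha=0.5)
```

Every sample index ends up on exactly one client; only the per-client class mix changes with `alpha`.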

An Augmentation-based Model Re-adaptation Framework for Robust Image Segmentation

Sep 14, 2024

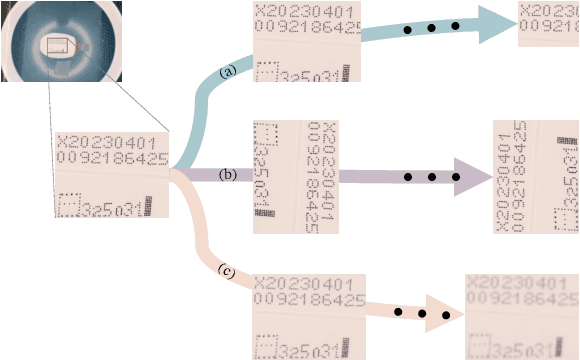

Abstract: Image segmentation is a crucial task in computer vision, with wide-ranging applications in industry. The Segment Anything Model (SAM) has recently attracted intensive attention; however, its application in industrial inspection, particularly for segmenting commercial anti-counterfeit codes, remains challenging. Unlike open-source datasets, industrial settings often face issues such as small sample sizes and complex textures. Additionally, computational cost is a key concern due to the varying number of trainable parameters. To address these challenges, we propose an Augmentation-based Model Re-adaptation Framework (AMRF). This framework leverages data augmentation techniques during training to enhance the generalisation of segmentation models, allowing them to adapt to newly released datasets with temporal disparity. By observing segmentation masks from conventional models (FCN and U-Net) and a pre-trained SAM model, we determine a minimal augmentation set that optimally balances training efficiency and model performance. Our results demonstrate that the fine-tuned FCN surpasses its baseline by 3.29% and 3.02% in cropping accuracy, and 5.27% and 4.04% in classification accuracy on two temporally continuous datasets. Similarly, the fine-tuned U-Net improves upon its baseline by 7.34% and 4.94% in cropping, and 8.02% and 5.52% in classification. Both models outperform the top-performing SAM models (ViT-Large and ViT-Base) by an average of 11.75% and 9.01% in cropping accuracy, and 2.93% and 4.83% in classification accuracy, respectively.

Robust and Explainable Fine-Grained Visual Classification with Transfer Learning: A Dual-Carriageway Framework

May 09, 2024

Abstract: In practical fine-grained visual classification applications rooted in deep learning, a common scenario involves training a model on a pre-existing dataset. Subsequently, a new dataset becomes available, prompting a pivotal decision for achieving better inference performance on both datasets: should one train from scratch or fine-tune the model trained on the initial dataset using the newly released dataset? The existing literature reveals a lack of methods to systematically determine the optimal training strategy, necessitating explainability. To this end, we present an automatic best-suit training solution searching framework, the Dual-Carriageway Framework (DCF), to fill this gap. DCF benefits from the design of a dual-direction search (starting from the pre-existing or the newly released dataset) where five different training settings are enforced. In addition, DCF is not only capable of identifying the optimal training strategy while avoiding overfitting but also yields built-in quantitative and visual explanations derived from the actual input and weights of the trained model. We validated DCF's effectiveness through experiments with three convolutional neural networks (ResNet18, ResNet34 and Inception-v3) on two temporally continuous commercial product datasets. Results showed that fine-tuning pathways outperformed training-from-scratch ones by up to 2.13% and 1.23% on the pre-existing and new datasets, respectively, in terms of mean accuracy. Furthermore, DCF identified reflection padding as the superior padding method, enhancing testing accuracy by 3.72% on average. This framework stands out for its potential to guide the development of robust and explainable AI solutions in fine-grained visual classification tasks.

How Quality Affects Deep Neural Networks in Fine-Grained Image Classification

May 09, 2024

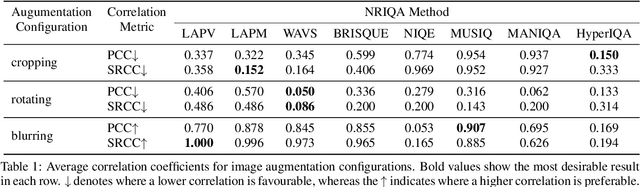

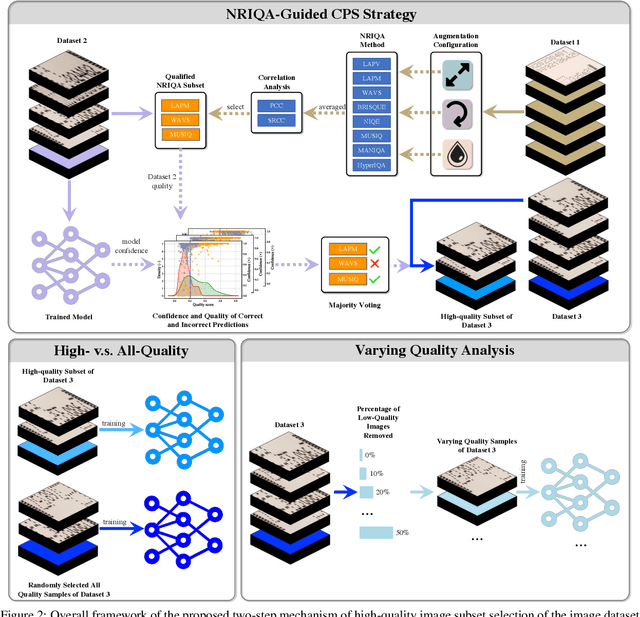

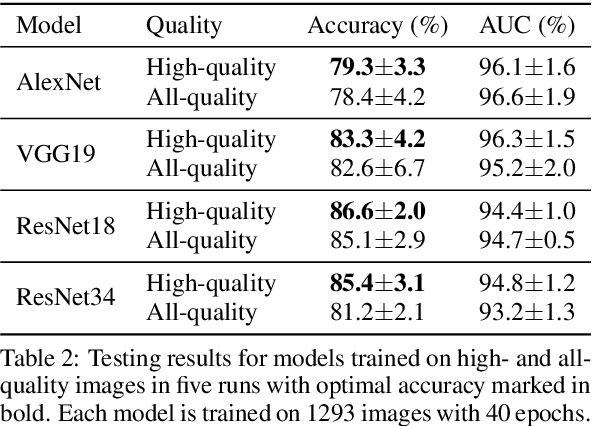

Abstract: In this paper, we propose a No-Reference Image Quality Assessment (NRIQA) guided cut-off point selection (CPS) strategy to enhance the performance of a fine-grained classification system. Scores given by existing NRIQA methods on the same image may vary and may not be as independent of natural image augmentations as expected, which weakens their connection and explainability to fine-grained image classification. Taking the three most commonly adopted image augmentation configurations -- cropping, rotating, and blurring -- as the entry point, we formulate a two-step mechanism for selecting the most discriminative subset from a given image dataset by considering both the confidence of model predictions and the density distribution of image qualities over several NRIQA methods. Concretely, the cut-off points yielded by those methods are aggregated via majority voting to inform the process of image subset selection. The efficacy and efficiency of such a mechanism have been confirmed by comparing models trained on high-quality images against models trained on a combination of high- and low-quality ones, with a 0.7% to 4.2% improvement in mean accuracy on a commercial product dataset across four deep neural classifiers. The robustness of the mechanism is supported by the observation that all the selected high-quality images can work jointly with 70% low-quality images with 1.3% of classification precision sacrificed when using ResNet34 in an ablation study.

Seen to Unseen: When Fuzzy Inference System Predicts IoT Device Positioning Labels That Had Not Appeared in Training Phase

Sep 21, 2022

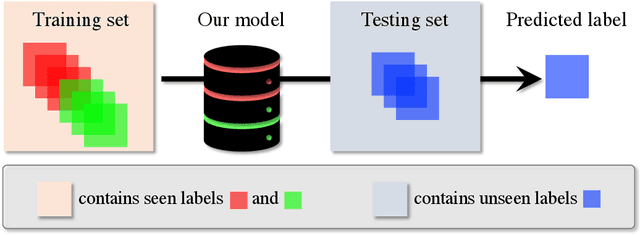

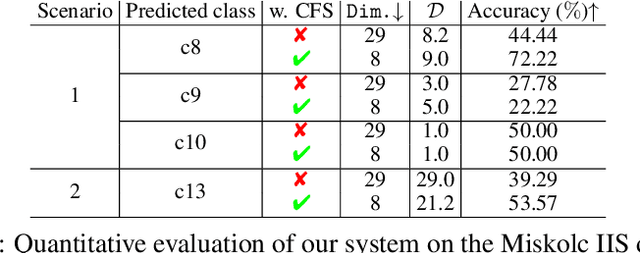

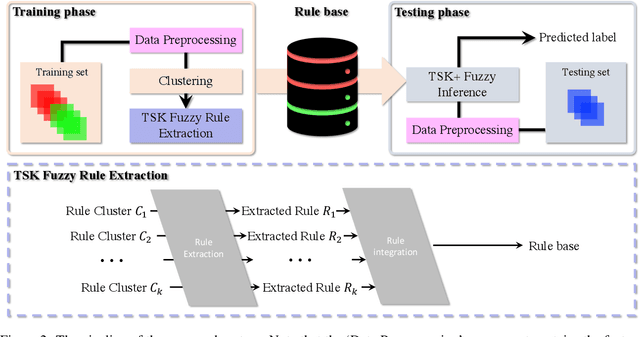

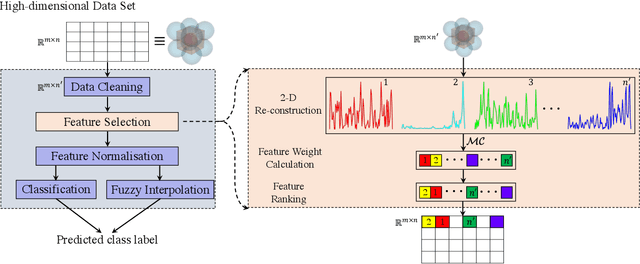

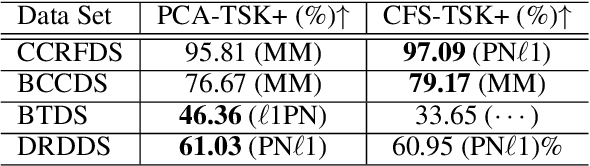

Abstract: Situated at the core of Artificial Intelligence (AI), Machine Learning (ML), and more specifically Deep Learning (DL), have enjoyed great success in the past two decades. However, unseen class label prediction is far less explored because missing classes are invisible when training ML or DL models. In this work, we propose a fuzzy inference system to cope with this challenge by adopting the TSK+ fuzzy inference engine in conjunction with the Curvature-based Feature Selection (CFS) method. The practical feasibility of our system has been evaluated by predicting the positioning labels of networking devices within the realm of the Internet of Things (IoT). Competitive prediction performance confirms the efficiency and efficacy of our system, especially when a large number of continuous class labels are unseen during the model training stage.

Curvature-based Feature Selection with Application in Classifying Electronic Health Records

Jan 10, 2021

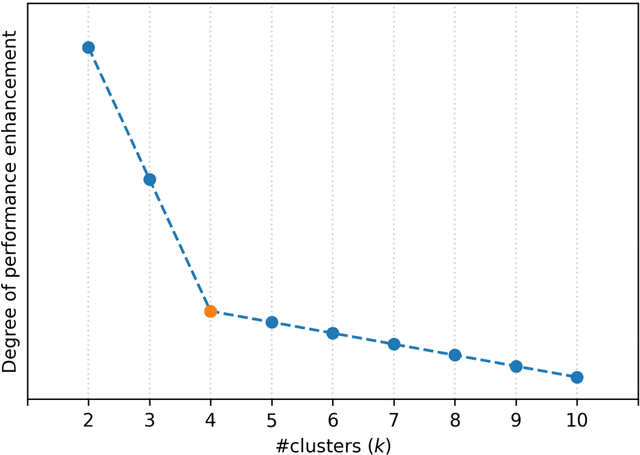

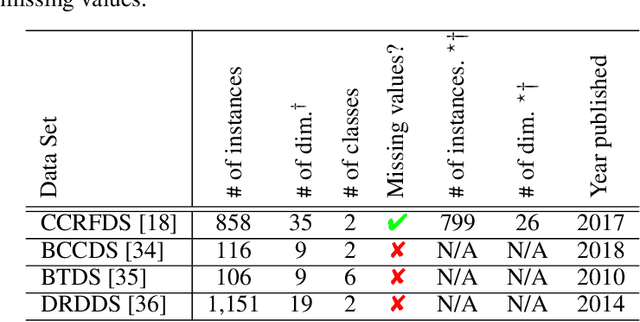

Abstract: Electronic Health Records (EHRs) are widely applied in healthcare facilities nowadays. Due to the inherent heterogeneity, imbalance, incompleteness, and high dimensionality of EHRs, it is a challenging task to employ machine learning algorithms to analyse such EHRs for prediction and diagnostics within the scope of precision medicine. Dimensionality reduction is an efficient data preprocessing technique for the analysis of high-dimensional data that reduces the number of features while improving the performance of the data analysis, e.g. classification. In this paper, we propose an efficient curvature-based feature selection method for supporting more precise diagnosis. The proposed method is a filter-based feature selection method, which directly utilises the Menger Curvature for ranking all the attributes in the given data set. We evaluate the performance of our method against conventional PCA and recent ones including BPCM, GSAM, WCNN, BLS II, VIBES, 2L-MJFA, RFGA, and VAF. Our method achieves state-of-the-art performance on four benchmark healthcare data sets including CCRFDS, BCCDS, BTDS, and DRDDS, with impressive 24.73% and 13.93% improvements on BTDS and CCRFDS respectively, a 7.97% improvement on BCCDS, and a 3.63% improvement on DRDDS. Our CFS source code is publicly available at https://github.com/zhemingzuo/CFS.
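As a rough illustration of curvature-based ranking, the sketch below computes the Menger curvature of three 2-D points (four times the triangle area divided by the product of the three side lengths) and uses it to score features. The scoring step is an assumption made for illustration only: each feature is paired with the target value, points are sorted by feature value, curvature is averaged over consecutive triples, and higher averages are ranked first. The abstract does not describe these details, and the released CFS code may differ. The names `feature_score` and `rank_features` are hypothetical.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def menger_curvature(p: Point, q: Point, r: Point) -> float:
    """Curvature of the circle through p, q, r: 4 * area / (|pq| * |qr| * |rp|)."""
    a, b, c = math.dist(p, q), math.dist(q, r), math.dist(r, p)
    if a == 0.0 or b == 0.0 or c == 0.0:
        return 0.0  # degenerate triple with repeated points
    # The absolute cross product equals twice the triangle area.
    twice_area = abs((q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0]))
    return 2.0 * twice_area / (a * b * c)


def feature_score(values: Sequence[float], target: Sequence[float]) -> float:
    """ASSUMED scoring: mean curvature over consecutive (value, target) triples."""
    pts = sorted(zip(values, target))
    curvatures = [menger_curvature(p, q, r) for p, q, r in zip(pts, pts[1:], pts[2:])]
    return sum(curvatures) / len(curvatures) if curvatures else 0.0


def rank_features(columns: Sequence[Sequence[float]], target: Sequence[float]) -> List[int]:
    """Feature indices ordered by descending score (ordering is an assumption)."""
    scores = [feature_score(col, target) for col in columns]
    return sorted(range(len(columns)), key=lambda j: scores[j], reverse=True)


# Three points on the unit circle have curvature 1 (radius 1).
unit_circle = menger_curvature((1.0, 0.0), (0.0, 1.0), (-1.0, 0.0))

target = [0.0, 1.0, 0.0, 1.0]
columns = [
    [0.0, 0.0, 0.0, 0.0],  # constant feature: collinear points, zero curvature
    [0.0, 1.0, 2.0, 3.0],  # zigzags against the target: positive curvature
]
order = rank_features(columns, target)
```

Because this is a filter method, the ranking depends only on the data geometry and not on any downstream classifier.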
