Yuting Liang

Differentially Private Covariance Revisited

May 31, 2022

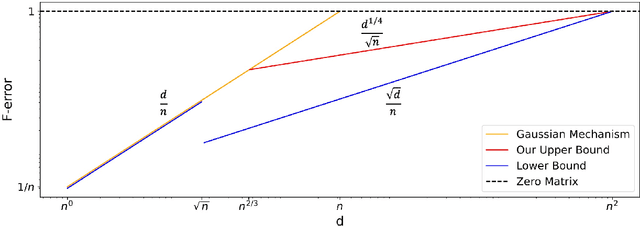

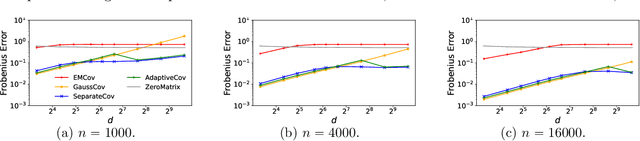

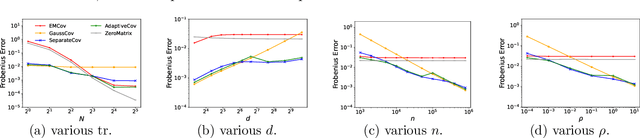

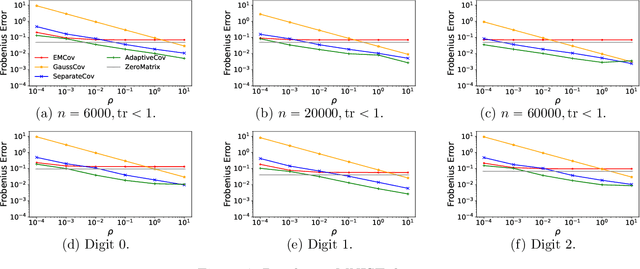

Abstract:In this paper, we present three new error bounds, in terms of the Frobenius norm, for covariance estimation under differential privacy: (1) a worst-case bound of $\tilde{O}(d^{1/4}/\sqrt{n})$, which improves the standard Gaussian mechanism $\tilde{O}(d/n)$ for the regime $d>\widetilde{\Omega}(n^{2/3})$; (2) a trace-sensitive bound that improves the state of the art by a $\sqrt{d}$-factor, and (3) a tail-sensitive bound that gives a more instance-specific result. The corresponding algorithms are also simple and efficient. Experimental results show that they offer significant improvements over prior work.

Towards Robust Deep Learning with Ensemble Networks and Noisy Layers

Jul 03, 2020

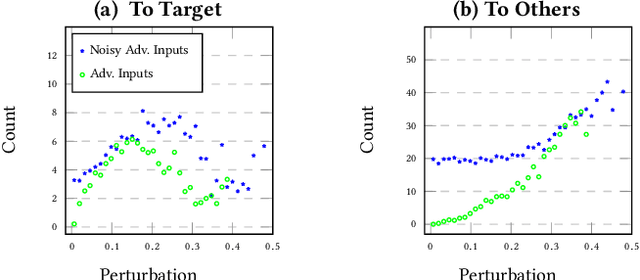

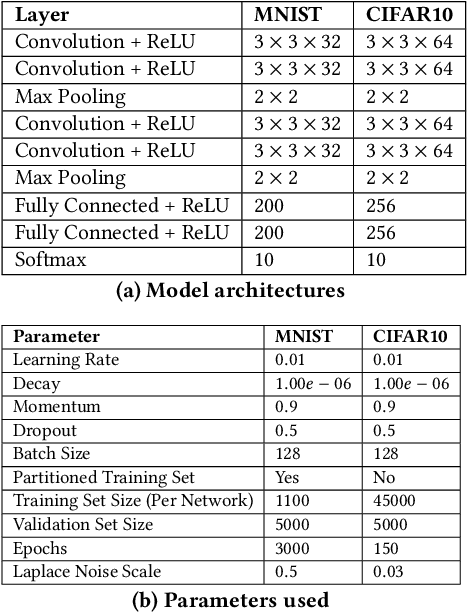

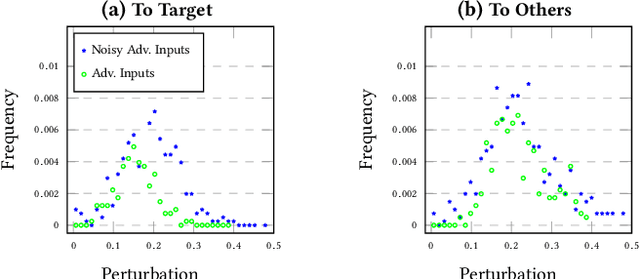

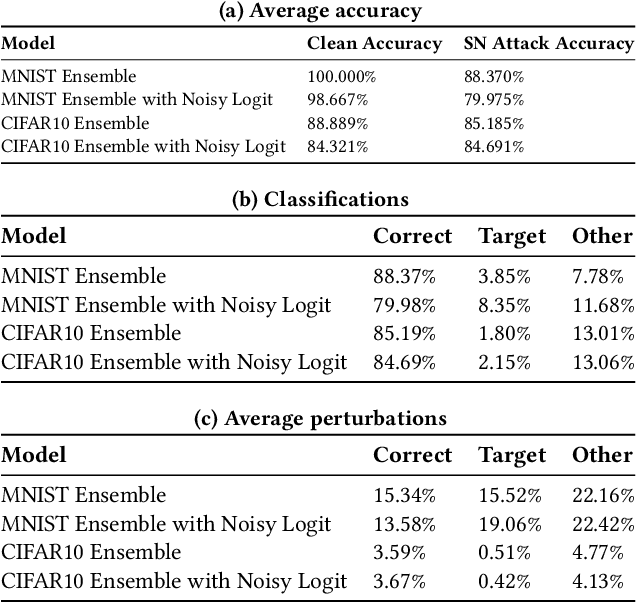

Abstract:In this paper we provide an approach for deep learning that protects against adversarial examples in image classification-type networks. The approach relies on two mechanisms:1) a mechanism that increases robustness at the expense of accuracy, and, 2) a mechanism that improves accuracy but does not always increase robustness. We show that an approach combining the two mechanisms can provide protection against adversarial examples while retaining accuracy. We formulate potential attacks on our approach and provide experimental results to demonstrate the effectiveness of our approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge