Yusuke Tashiro

Self-Supervised Learning of Time Series Representation via Diffusion Process and Imputation-Interpolation-Forecasting Mask

May 09, 2024

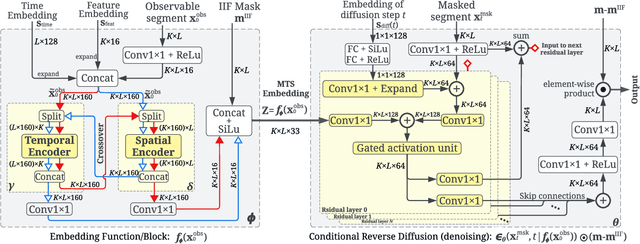

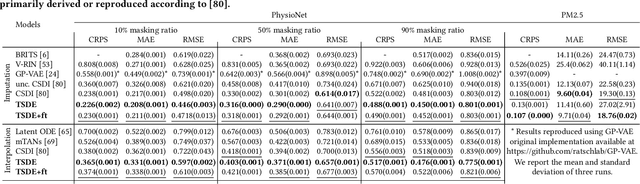

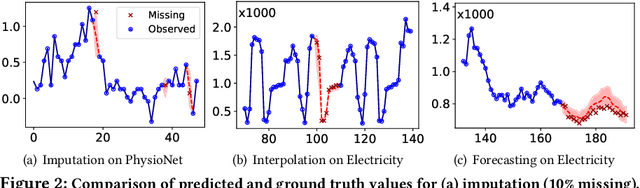

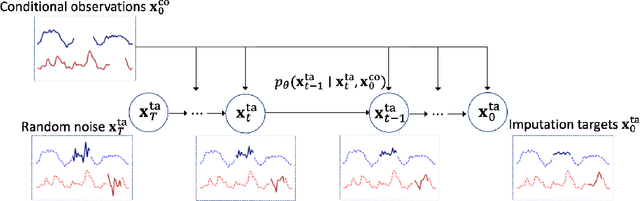

Abstract: Time Series Representation Learning (TSRL) focuses on generating informative representations for various Time Series (TS) modeling tasks. Traditional Self-Supervised Learning (SSL) methods in TSRL fall into four main categories: reconstructive, adversarial, contrastive, and predictive, each with a common challenge of sensitivity to noise and intricate data nuances. Recently, diffusion-based methods have shown advanced generative capabilities. However, they primarily target specific application scenarios like imputation and forecasting, leaving a gap in leveraging diffusion models for generic TSRL. Our work, Time Series Diffusion Embedding (TSDE), bridges this gap as the first diffusion-based SSL TSRL approach. TSDE segments TS data into observed and masked parts using an Imputation-Interpolation-Forecasting (IIF) mask. It applies a trainable embedding function, featuring dual-orthogonal Transformer encoders with a crossover mechanism, to the observed part. We train a reverse diffusion process conditioned on the embeddings, designed to predict noise added to the masked part. Extensive experiments demonstrate TSDE's superiority in imputation, interpolation, forecasting, anomaly detection, classification, and clustering. We also conduct an ablation study, present embedding visualizations, and compare inference speed, further substantiating TSDE's efficiency and validity in learning representations of TS data.
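
To make the observed/masked split concrete, here is a minimal sketch of what an imputation-interpolation-forecasting style mask could look like. The abstract only names the three strategies, so the function `iif_mask`, its parameters, and the exact masking rules (random points, one whole timestamp, a trailing window) are assumptions for illustration, not the authors' implementation.

```python
import random

def iif_mask(n_features, n_steps, strategy, rng, ratio=0.1):
    """Return a mask where 1 = observed (conditioning) and 0 = masked (target).

    Hypothetical helper: the three rules below are illustrative assumptions.
    """
    mask = [[1] * n_steps for _ in range(n_features)]
    if strategy == "imputation":
        # Hide scattered individual values with probability `ratio`.
        for k in range(n_features):
            for l in range(n_steps):
                if rng.random() < ratio:
                    mask[k][l] = 0
    elif strategy == "interpolation":
        # Hide every feature at one randomly chosen timestamp.
        l = rng.randrange(n_steps)
        for k in range(n_features):
            mask[k][l] = 0
    elif strategy == "forecasting":
        # Hide every feature from a random cut point to the end.
        start = rng.randrange(1, n_steps)
        for k in range(n_features):
            for l in range(start, n_steps):
                mask[k][l] = 0
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    return mask

if __name__ == "__main__":
    rng = random.Random(0)
    for name in ("imputation", "interpolation", "forecasting"):
        print(name)
        for row in iif_mask(2, 10, name, rng, ratio=0.3):
            print("  ", row)
```

In a training loop of this kind, one strategy would typically be drawn per sample so that the embedding sees all three patterns; that sampling policy is likewise an assumption here.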

CSDI: Conditional Score-based Diffusion Models for Probabilistic Time Series Imputation

Jul 07, 2021

Abstract: The imputation of missing values in time series has many applications in healthcare and finance. While autoregressive models are natural candidates for time series imputation, score-based diffusion models have recently outperformed existing counterparts including autoregressive models in many tasks such as image generation and audio synthesis, and would be promising for time series imputation. In this paper, we propose Conditional Score-based Diffusion models for Imputation (CSDI), a novel time series imputation method that utilizes score-based diffusion models conditioned on observed data. Unlike existing score-based approaches, the conditional diffusion model is explicitly trained for imputation and can exploit correlations between observed values. On healthcare and environmental data, CSDI improves by 40-70% over existing probabilistic imputation methods on popular performance metrics. In addition, deterministic imputation by CSDI reduces the error by 5-20% compared to the state-of-the-art deterministic imputation methods. Furthermore, CSDI can also be applied to time series interpolation and probabilistic forecasting, and is competitive with existing baselines.
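
As a rough illustration of conditioning a diffusion model on observed data, the sketch below builds one training example in the standard DDPM style: values marked as conditioning stay clean, while the remaining values are noised and the added noise becomes the regression target. The function name `noisy_targets`, the flat-list data layout, and the choice to merge both parts into one vector are simplifying assumptions, not details taken from the abstract.

```python
import math
import random

def noisy_targets(x0, cond_mask, alpha_bar, rng):
    """Noise only the non-conditioning entries of x0.

    x0        : list of clean values
    cond_mask : 1 = kept clean as conditioning, 0 = imputation target
    alpha_bar : cumulative noise-schedule product at the sampled step, in [0, 1]
    Returns (x_t, target_noise); target_noise is 0.0 at conditioning entries.
    """
    a = math.sqrt(alpha_bar)
    b = math.sqrt(1.0 - alpha_bar)
    x_t, target_noise = [], []
    for value, is_cond in zip(x0, cond_mask):
        eps = rng.gauss(0.0, 1.0)
        if is_cond:
            x_t.append(value)          # conditioning values are never noised
            target_noise.append(0.0)
        else:
            x_t.append(a * value + b * eps)  # forward diffusion step
            target_noise.append(eps)         # what a denoiser would predict
    return x_t, target_noise

if __name__ == "__main__":
    rng = random.Random(0)
    x_t, eps = noisy_targets([1.0, 2.0, 3.0, 4.0], [1, 0, 1, 0], 0.5, rng)
    print(x_t)
    print(eps)
```

A denoising network would then be trained to recover `target_noise` at the masked positions given `x_t` and the mask; the network itself is out of scope for this sketch.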

Output Diversified Initialization for Adversarial Attacks

Mar 15, 2020

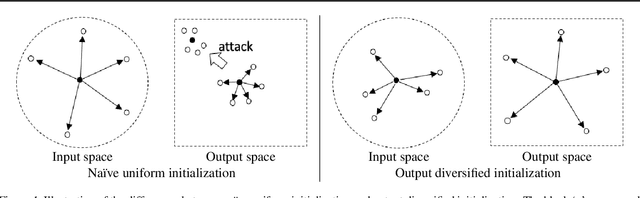

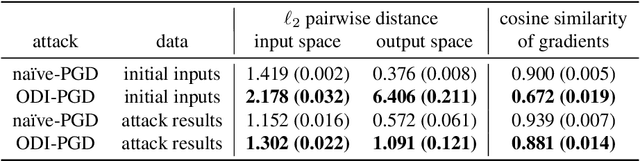

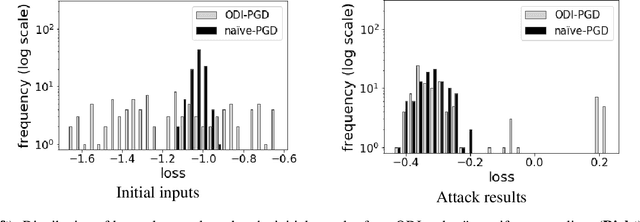

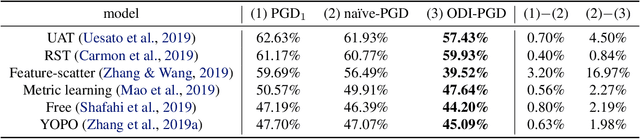

Abstract: Adversarial examples are often constructed by iteratively refining a randomly perturbed input. To improve diversity and thus also the success rates of attacks, we propose Output Diversified Initialization (ODI), a novel random initialization strategy that can be combined with most existing white-box adversarial attacks. Instead of using uniform perturbations in the input space, we seek diversity in the output logits space of the target model. Empirically, we demonstrate that existing $\ell_\infty$ and $\ell_2$ adversarial attacks with ODI become much more efficient on several datasets including MNIST, CIFAR-10 and ImageNet, reducing the accuracy of recently proposed defense models by 1-17%. Moreover, PGD attack with ODI outperforms current state-of-the-art attacks against robust models, while also being roughly 50 times faster on CIFAR-10. The code is available on https://github.com/ermongroup/ODI/.
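
The idea of seeking diversity in logit space rather than input space can be sketched on a toy linear model, where the gradient is available in closed form. Everything specific below is an assumption for illustration: the helper name `odi_init`, the uniform sampling of the logit-space direction on [-1, 1], the signed-gradient update, and the linear model itself. The linked repository is the authoritative reference for the actual method.

```python
import random

def odi_init(x_org, weights, eps, step, n_steps, rng):
    """Toy ODI-style start point for a linear model with logits = weights @ x.

    Pushes x along a random direction in logit space while staying inside
    the l_inf ball of radius eps around x_org.
    """
    n_classes, dim = len(weights), len(x_org)
    # Random direction in the output (logit) space.
    w_d = [rng.uniform(-1.0, 1.0) for _ in range(n_classes)]
    # For a linear model, grad_x (w_d . logits) = weights^T w_d (constant in x).
    grad = [sum(w_d[c] * weights[c][j] for c in range(n_classes))
            for j in range(dim)]
    x = list(x_org)
    for _ in range(n_steps):
        for j in range(dim):
            sign = (grad[j] > 0) - (grad[j] < 0)
            x[j] += step * sign
            # Project back onto the l_inf ball around the original input.
            x[j] = min(max(x[j], x_org[j] - eps), x_org[j] + eps)
    return x

if __name__ == "__main__":
    rng = random.Random(0)
    weights = [[1.0, -2.0], [0.5, 0.3], [-1.0, 1.0]]
    # Different restarts land in different corners of the eps-ball.
    for _ in range(3):
        print(odi_init([0.2, 0.8], weights, 0.1, 0.05, 4, rng))
```

The returned point would then serve as the starting input for an ordinary attack such as PGD; with a real network the gradient would come from automatic differentiation rather than a closed form.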
