Yushuang Liu

Precise Representation Model of SAR Saturated Interference: Mechanism and Verification

Jul 09, 2025

Abstract: Synthetic Aperture Radar (SAR) is highly susceptible to Radio Frequency Interference (RFI). Because of the performance limitations of components such as gain controllers and analog-to-digital converters in SAR receivers, high-power interference can easily saturate the receiver, and the interfered echoes then suffer nonlinear distortion in both the time domain and the frequency domain. Some scholars have analyzed the impact of SAR receiver saturation on target echoes through simulations. However, the saturation function is non-smooth, which makes accurate analysis with traditional analytical methods difficult. Existing studies approximate the saturation function with the hyperbolic tangent function, which introduces approximation errors. This paper therefore proposes a saturation interference analysis model based on Bessel functions and verifies its accuracy through simulations that compare it with the traditional saturation model based on smooth-function approximation. The model can provide guidance for follow-up work such as saturation interference suppression.
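To make the approximation error mentioned in the abstract concrete, the following minimal sketch compares an ideal hard-clipping saturation with a hyperbolic tangent approximation. It is an illustration only, not the paper's model: the function names, the unit clipping level, and the grid size are assumptions chosen for this example, and the Bessel-function analysis itself is not reproduced here.

```python
import math

def hard_clip(x, limit=1.0):
    """Ideal saturation (assumed form): linear inside [-limit, limit], flat outside."""
    return max(-limit, min(limit, x))

def tanh_clip(x, limit=1.0):
    """Smooth stand-in with the same small-signal gain and the same ceiling."""
    return limit * math.tanh(x / limit)

def max_abs_error(amplitude, limit=1.0, n=2001):
    """Largest gap between the two models for inputs in [-amplitude, amplitude]."""
    xs = [amplitude * (2 * k / (n - 1) - 1) for k in range(n)]
    return max(abs(hard_clip(x, limit) - tanh_clip(x, limit)) for x in xs)

# Weak input: both models are nearly linear, so they almost agree.
small = max_abs_error(0.1)
# Strong input (three times the clipping level): the gap peaks near the knee.
large = max_abs_error(3.0)
print(f"weak input error:   {small:.6f}")
print(f"strong input error: {large:.6f}")
```

Under these assumptions the two curves agree closely for weak inputs and differ most near the clipping level, which is the regime that matters once high-power interference drives the receiver into saturation.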

Dynamically Retrieving Knowledge via Query Generation for informative dialogue response

Jul 30, 2022

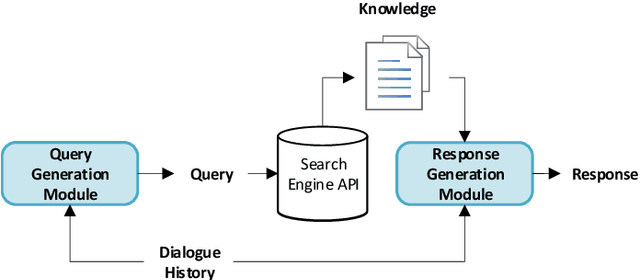

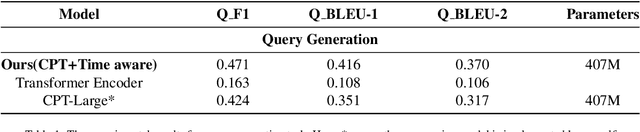

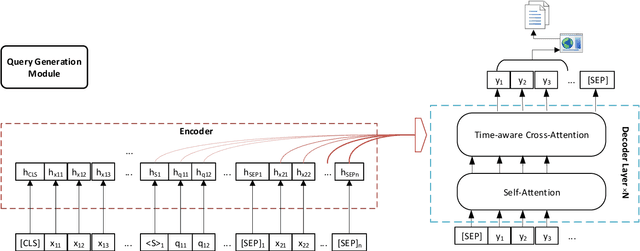

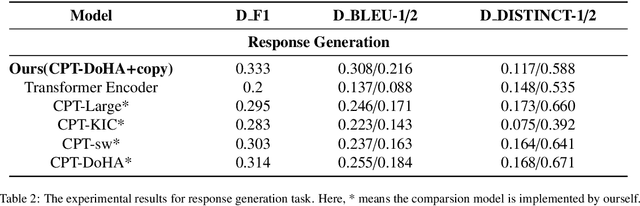

Abstract: Knowledge-driven dialogue generation has recently made remarkable breakthroughs. Compared with general dialogue systems, knowledge-driven dialogue systems can generate more informative and knowledgeable responses when knowledge is provided in advance. In practical applications, however, the dialogue system cannot be given the corresponding knowledge beforehand. To solve this problem, we design a knowledge-driven dialogue system named DRKQG (Dynamically Retrieving Knowledge via Query Generation for informative dialogue response). The system is divided into two modules: a query generation module and a dialogue generation module. First, a time-aware mechanism captures context information, and a query is generated for retrieving knowledge. Then, we integrate a copy mechanism with Transformers, which allows the response generation module to produce responses derived from both the context and the retrieved knowledge. Experimental results at LIC2022, the Language and Intelligence Technology Competition, show that our system outperforms the baseline model by a large margin on automatic evaluation metrics, while human evaluation by the Baidu Linguistics team shows that our system achieves impressive results on the Factually Correct and Knowledgeable criteria.
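The abstract does not specify how the copy mechanism is combined with the Transformer decoder. As a rough illustration of the general idea, the sketch below uses a pointer-generator style mixture, which is an assumed stand-in and not necessarily DRKQG's design: a gate `p_gen` blends the decoder's vocabulary distribution with a copy distribution given by attention over the retrieved knowledge tokens. All names and numbers are hypothetical.

```python
def mix_copy_distribution(vocab_probs, attention, source_tokens, p_gen):
    """Blend a generation distribution with a copy distribution over source tokens.

    vocab_probs:   dict token -> probability from the decoder (sums to 1)
    attention:     weights over source_tokens (sums to 1)
    source_tokens: tokens of the retrieved knowledge (may be out of vocabulary)
    p_gen:         gate in [0, 1]; 1 means generate only, 0 means copy only
    """
    final = {tok: p_gen * p for tok, p in vocab_probs.items()}
    for tok, weight in zip(source_tokens, attention):
        final[tok] = final.get(tok, 0.0) + (1.0 - p_gen) * weight
    return final

# Toy example: "Eiffel" and "1889" are not in the decoder vocabulary,
# yet they receive probability mass because they can be copied.
vocab_probs = {"the": 0.5, "tower": 0.3, "is": 0.2}
source_tokens = ["Eiffel", "tower", "1889"]
attention = [0.6, 0.1, 0.3]
final = mix_copy_distribution(vocab_probs, attention, source_tokens, p_gen=0.5)
for tok, p in sorted(final.items(), key=lambda kv: -kv[1]):
    print(f"{tok}: {p:.2f}")
```

The point of the mixture is that facts appearing only in the retrieved knowledge can still surface in the response, which is consistent with the abstract's goal of responses grounded in both context and retrieved knowledge.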
