Young Geun Kim

CorGi: Contribution-Guided Block-Wise Interval Caching for Training-Free Acceleration of Diffusion Transformers

Dec 30, 2025

Abstract:Diffusion transformer (DiT) achieves remarkable performance in visual generation, but its iterative denoising process combined with larger capacity leads to a high inference cost. Recent works have demonstrated that the iterative denoising process of DiT models involves substantial redundant computation across steps. To effectively reduce the redundant computation in DiT, we propose CorGi (Contribution-Guided Block-Wise Interval Caching), a training-free DiT inference acceleration framework that selectively reuses the outputs of transformer blocks in DiT across denoising steps. CorGi caches low-contribution blocks and reuses them in later steps within each interval to reduce redundant computation while preserving generation quality. For text-to-image tasks, we further propose CorGi+, which leverages per-block cross-attention maps to identify salient tokens and applies partial attention updates to protect important object details. Evaluation on state-of-the-art DiT models demonstrates that CorGi and CorGi+ achieve up to 2.0x speedup on average, while preserving high generation quality.
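The interval caching idea in this abstract can be illustrated with a minimal sketch. It is not the authors' implementation: the function name `run_with_interval_cache`, the scalar "hidden state", and the choice to cache each block's residual contribution are all assumptions made for illustration, and the abstract does not say how block contribution is estimated, so the set of cached blocks is simply passed in.

```python
from typing import Callable, Dict, List, Set, Tuple

# A "block" here is a toy stand-in for a transformer block: it maps the
# current hidden state to a residual update that is added back to the state.
Block = Callable[[float], float]


def run_with_interval_cache(
    blocks: List[Block],
    x0: float,
    num_steps: int,
    interval: int,
    cached_blocks: Set[int],
) -> Tuple[float, int]:
    """Run num_steps denoising steps, reusing cached residuals within each interval.

    cached_blocks holds the indices of blocks assumed to be low-contribution
    (hypothetical input; how they are chosen is outside this sketch).
    Returns the final state and the number of block evaluations performed.
    """
    cache: Dict[int, float] = {}
    calls = 0
    x = x0
    for step in range(num_steps):
        # The first step of every interval recomputes all blocks and refreshes the cache.
        refresh = step % interval == 0
        h = x
        for i, block in enumerate(blocks):
            if i in cached_blocks and not refresh and i in cache:
                delta = cache[i]  # reuse the residual stored at the last refresh step
            else:
                delta = block(h)
                calls += 1
                if i in cached_blocks:
                    cache[i] = delta
            h = h + delta
        x = h
    return x, calls
```

With three blocks, four steps, an interval of two, and block 1 marked as cached, the sketch evaluates 10 blocks instead of 12; the saving grows with the interval length and the number of cached blocks.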

Not All Adapters Matter: Selective Adapter Freezing for Memory-Efficient Fine-Tuning of Language Models

Nov 26, 2024

Abstract:Transformer-based large-scale pre-trained models achieve great success, and fine-tuning, which tunes a pre-trained model on a task-specific dataset, is the standard practice to utilize these models for downstream tasks. Recent work has developed adapter-tuning, but these approaches still require relatively high resource usage. Through our investigation, we show that each adapter in adapter-tuning does not have the same impact on task performance and resource usage. Based on our findings, we propose SAFE, which gradually freezes less-important adapters that do not contribute to adaptation during the early training steps. In our experiments, SAFE reduces memory usage, computation amount, and training time by 42.85%, 34.59%, and 11.82%, respectively, while achieving comparable or better performance compared to the baseline. We also demonstrate that SAFE induces a regularization effect, thereby smoothing the loss landscape.
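The gradual freezing described above can be sketched as a simple schedule. This is an illustration under stated assumptions, not SAFE itself: the abstract does not specify the importance measure or the schedule, so the per-epoch importance scores, the linearly growing threshold, and the name `freeze_schedule` are all hypothetical.

```python
from typing import Dict, List, Set


def freeze_schedule(
    importance_per_epoch: List[Dict[str, float]],
    final_threshold: float,
    warmup_epochs: int,
) -> List[List[str]]:
    """Return, for each epoch, the sorted names of adapters frozen so far.

    importance_per_epoch[e][name] is an assumed importance score for an
    adapter at epoch e (higher means it contributes more to adaptation).
    """
    frozen: Set[str] = set()
    history: List[List[str]] = []
    for epoch, scores in enumerate(importance_per_epoch):
        # Assumed schedule: the threshold rises linearly to final_threshold
        # over warmup_epochs, so freezing is gradual rather than all at once.
        threshold = final_threshold * min(1.0, (epoch + 1) / warmup_epochs)
        for name, score in scores.items():
            if score < threshold:
                frozen.add(name)  # once frozen, an adapter stays frozen
        history.append(sorted(frozen))
    return history
```

In a real training loop, freezing an adapter would mean excluding its parameters from gradient computation, which is where memory and compute savings of the kind reported in the abstract would come from.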

HeteroSwitch: Characterizing and Taming System-Induced Data Heterogeneity in Federated Learning

Mar 07, 2024

Abstract:Federated Learning (FL) is a practical approach to train deep learning models collaboratively across user-end devices, protecting user privacy by retaining raw data on-device. In FL, participating user-end devices are highly fragmented in terms of hardware and software configurations. Such fragmentation introduces a new type of data heterogeneity in FL, namely *system-induced data heterogeneity*, as each device generates distinct data depending on its hardware and software configurations. In this paper, we first characterize the impact of system-induced data heterogeneity on FL model performance. We collect a dataset using heterogeneous devices with variations across vendors and performance tiers. By using this dataset, we demonstrate that system-induced data heterogeneity negatively impacts accuracy, and exacerbates fairness and domain generalization problems in FL. To address these challenges, we propose HeteroSwitch, which adaptively adopts generalization techniques (i.e., ISP transformation and SWAD) depending on the level of bias caused by varying HW and SW configurations. In our evaluation with a realistic FL dataset (FLAIR), HeteroSwitch reduces the variance of averaged precision by 6.3% across device types.

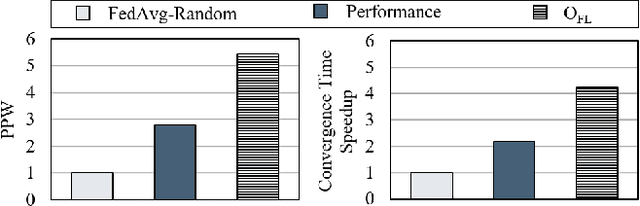

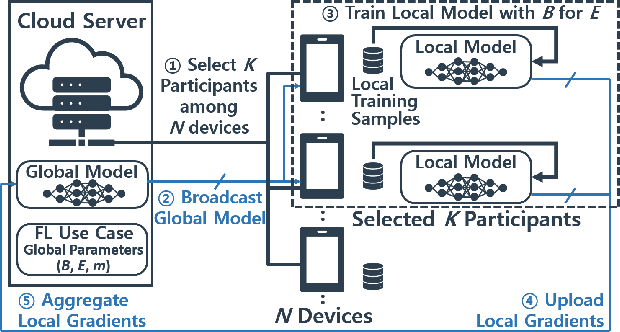

FedGPO: Heterogeneity-Aware Global Parameter Optimization for Efficient Federated Learning

Nov 30, 2022

Abstract:Federated learning (FL) has emerged as a solution to deal with the risk of privacy leaks in machine learning training. This approach allows a variety of mobile devices to collaboratively train a machine learning model without sharing the raw on-device training data with the cloud. However, efficient edge deployment of FL is challenging because of the system/data heterogeneity and runtime variance. This paper optimizes the energy efficiency of FL use cases while guaranteeing model convergence, by accounting for the aforementioned challenges. We propose FedGPO, based on reinforcement learning, which learns how to identify optimal global parameters (B, E, K) for each FL aggregation round, adapting to the system/data heterogeneity and stochastic runtime variance. In our experiments, FedGPO improves the model convergence time by 2.4 times and achieves 3.6 times higher energy efficiency over the baseline settings.

AutoFL: Enabling Heterogeneity-Aware Energy Efficient Federated Learning

Jul 16, 2021

Abstract:Federated learning enables a cluster of decentralized mobile devices at the edge to collaboratively train a shared machine learning model, while keeping all the raw training samples on device. This decentralized training approach has been demonstrated to be a practical solution to mitigate the risk of privacy leakage. However, enabling efficient FL deployment at the edge is challenging because of non-IID training data distribution, wide system heterogeneity, and stochastically varying runtime effects in the field. This paper jointly optimizes time-to-convergence and energy efficiency of state-of-the-art FL use cases by taking into account the stochastic nature of edge execution. We propose AutoFL by tailor-designing a reinforcement learning algorithm that learns to select the K participant devices and the per-device execution targets for each FL model aggregation round in the presence of stochastic runtime variance and system and data heterogeneity. By considering the unique characteristics of FL edge deployment judiciously, AutoFL achieves 3.6 times faster model convergence time and 4.7 and 5.2 times higher energy efficiency for local clients and globally over the cluster of K participants, respectively.

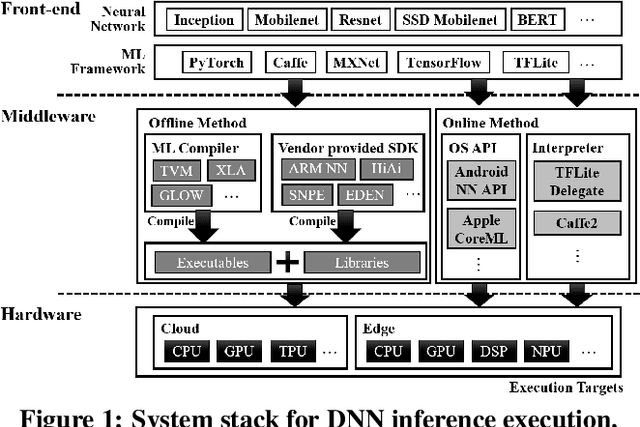

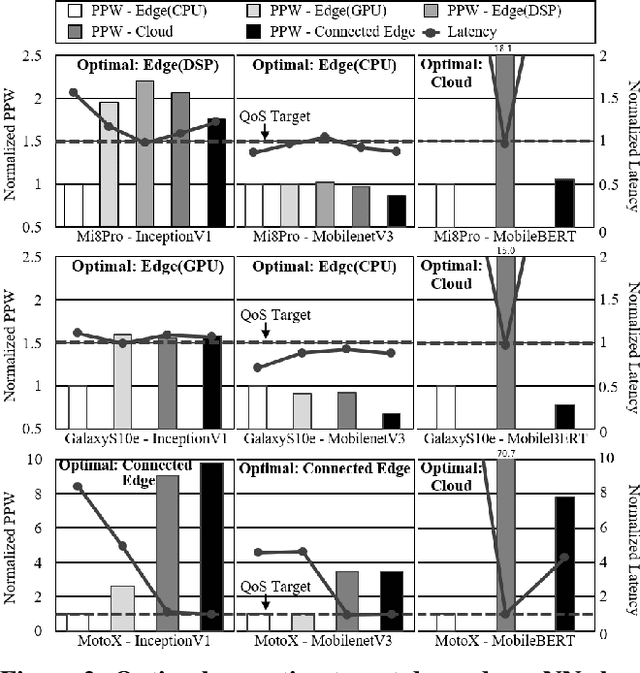

AutoScale: Optimizing Energy Efficiency of End-to-End Edge Inference under Stochastic Variance

May 06, 2020

Abstract:Deep learning inference is increasingly run at the edge. As the programming and system stack support becomes mature, it enables acceleration opportunities within a mobile system, where the system performance envelope is scaled up with a plethora of programmable co-processors. Thus, intelligent services designed for mobile users can choose between running inference on the CPU or any of the co-processors on the mobile system, or exploiting connected systems, such as the cloud or a nearby, locally connected system. By doing so, the services can scale out the performance and increase the energy efficiency of edge mobile systems. This gives rise to a new challenge - deciding when inference should run where. Such execution scaling decisions become more complicated with the stochastic nature of mobile-cloud execution, where signal strength variations of the wireless networks and resource interference can significantly affect real-time inference performance and system energy efficiency. To enable accurate, energy-efficient deep learning inference at the edge, this paper proposes AutoScale. AutoScale is an adaptive and light-weight execution scaling engine built upon a custom-designed reinforcement learning algorithm. It continuously learns and selects the most energy-efficient inference execution target by taking into account characteristics of neural networks and available systems in the collaborative cloud-edge execution environment while adapting to the stochastic runtime variance. Real system implementation and evaluation, considering realistic execution scenarios, demonstrate an average of 9.8 and 1.6 times energy efficiency improvement for DNN edge inference over the baseline mobile CPU and cloud offloading, while meeting the real-time performance and accuracy requirements.
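The idea of learning which execution target to use can be sketched with generic tabular Q-learning. The abstract only says the algorithm is custom-designed, so this sketch may differ from it substantially; the state names (`strong_signal`, `weak_signal`), the target names, the reward convention, and the functions `choose_target` and `update_q` are all hypothetical.

```python
import random
from typing import Dict

# q[state][target] is the learned value of running inference on `target`
# when the system is observed to be in `state`.
QTable = Dict[str, Dict[str, float]]


def choose_target(q: QTable, state: str, epsilon: float, rng: random.Random) -> str:
    """Epsilon-greedy selection: explore with probability epsilon, else exploit."""
    actions = q[state]
    if rng.random() < epsilon:
        return rng.choice(sorted(actions))
    return max(actions, key=actions.get)


def update_q(
    q: QTable,
    state: str,
    action: str,
    reward: float,
    next_state: str,
    alpha: float = 0.5,
    gamma: float = 0.9,
) -> None:
    """Standard Q-learning update toward reward + gamma * best next-state value."""
    best_next = max(q[next_state].values())
    q[state][action] += alpha * (reward + gamma * best_next - q[state][action])
```

A reward for this setting would plausibly be higher when the measured energy is lower and the latency and accuracy constraints are met; that reward design is an assumption here and is not taken from the abstract.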
