Yonatan Carlos Carranza Alarcón

Skeptical inferences in multi-label ranking with sets of probabilities

Oct 16, 2022

Abstract: In this paper, we consider the problem of making skeptical inferences for the multi-label ranking problem. We assume that our uncertainty is described by a convex set of probabilities (i.e. a credal set), defined over the set of labels. Instead of learning a singleton prediction (i.e. a completed ranking over the labels), we thus seek skeptical inferences in the form of set-valued predictions consisting of completed rankings.

Skeptical binary inferences in multi-label problems with sets of probabilities

May 02, 2022

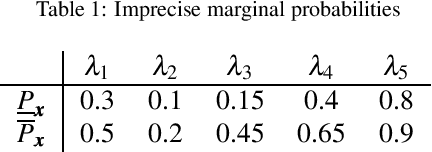

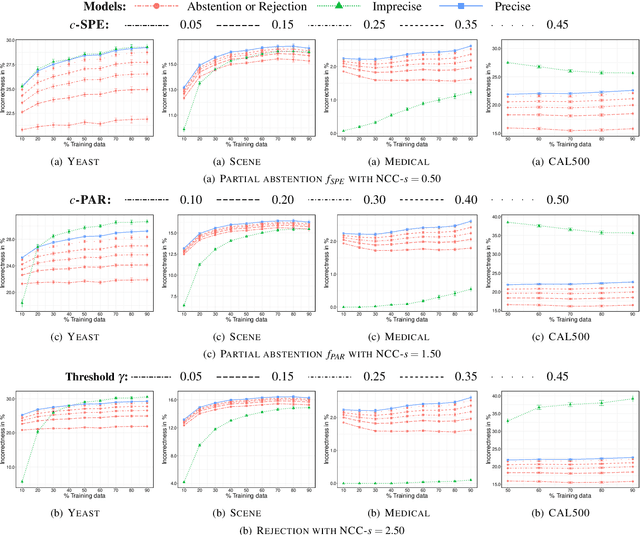

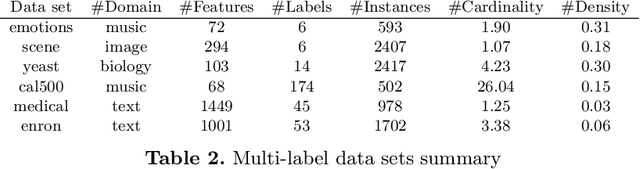

Abstract: In this paper, we consider the problem of making distributionally robust, skeptical inferences for the multi-label problem, or more generally for Boolean vectors. By distributionally robust, we mean that we consider a set of possible probability distributions, and by skeptical we mean that we consider as valid only those inferences that are true for every distribution within this set. Such inferences will provide partial predictions whenever the considered set is sufficiently big. We study in particular the Hamming loss case, a common loss function in multi-label problems, showing how skeptical inferences can be made in this setting. Our experimental results are organised in three sections: (1) the first one indicates the computational gain obtained from our theoretical results by using synthetic data sets, (2) the second one indicates that our approaches produce relevant cautiousness on those hard-to-predict instances where their precise counterparts fail, and (3) the last one demonstrates experimentally how our approach copes with imperfect information (generated by a downsampling procedure) better than the partial abstention [31] and the rejection rules.
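
To make the idea of a skeptical, partial prediction concrete, here is a minimal sketch in Python. It is not the paper's algorithm. It assumes the set of distributions is given as a finite list of joint distributions over Boolean label vectors, and it uses a simple label-wise rule: because the expected Hamming loss is a sum of per-label terms, a label is predicted as 1 only if its marginal probability exceeds 0.5 under every distribution, as 0 only if it is below 0.5 under every distribution, and is otherwise left undecided. The function names `marginal_bounds` and `skeptical_hamming` and the example numbers are hypothetical.

```python
def marginal_bounds(distributions, n_labels):
    """Lower and upper marginal probability of each label being 1.

    `distributions` is a list of dicts mapping a label tuple such as (1, 0)
    to its probability. This finite-list representation is an assumption
    made for illustration only.
    """
    lower, upper = [], []
    for j in range(n_labels):
        # Marginal P(y_j = 1) under each candidate distribution.
        margs = [
            sum(p for y, p in dist.items() if y[j] == 1)
            for dist in distributions
        ]
        lower.append(min(margs))
        upper.append(max(margs))
    return lower, upper


def skeptical_hamming(lower, upper):
    """Label-wise skeptical prediction: 1, 0, or None (abstain)."""
    prediction = []
    for lo, up in zip(lower, upper):
        if lo > 0.5:
            prediction.append(1)      # relevant under every distribution
        elif up < 0.5:
            prediction.append(0)      # irrelevant under every distribution
        else:
            prediction.append(None)   # distributions disagree: abstain
    return prediction


# Two hypothetical joint distributions over two labels.
P1 = {(1, 1): 0.6, (1, 0): 0.3, (0, 1): 0.05, (0, 0): 0.05}
P2 = {(1, 1): 0.3, (1, 0): 0.4, (0, 1): 0.1, (0, 0): 0.2}

lower, upper = marginal_bounds([P1, P2], n_labels=2)
result = skeptical_hamming(lower, upper)
print(result)
```

In this example both distributions agree that the first label is relevant, while they disagree on the second, so the prediction is partial: the second label is left undecided. Since marginals are linear in the distribution, the bounds computed over a finite list also hold over its convex hull.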

Multi-label Chaining with Imprecise Probabilities

Jul 19, 2021

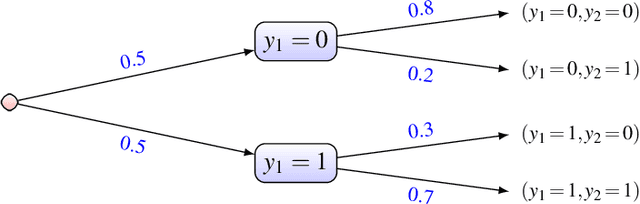

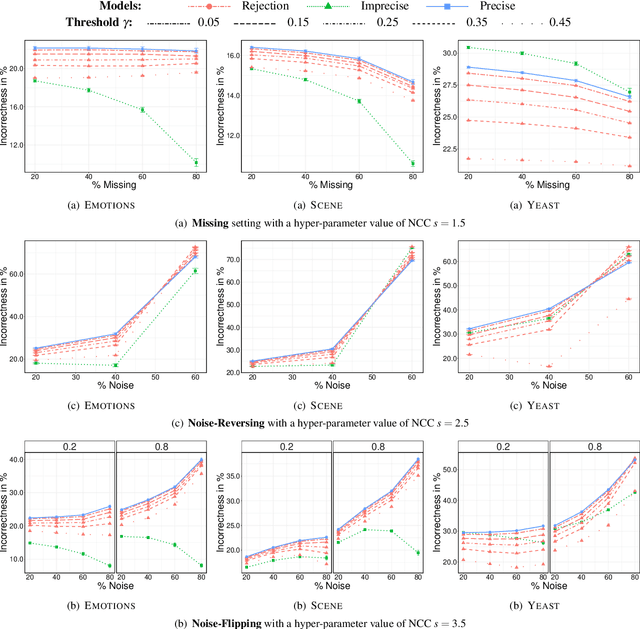

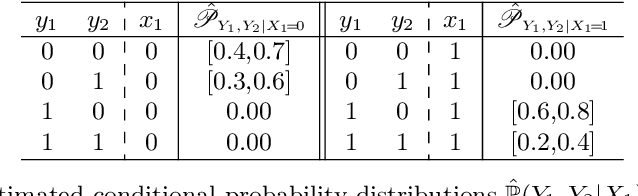

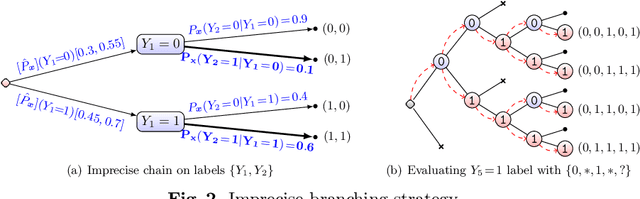

Abstract: We present two different strategies to extend the classical multi-label chaining approach to handle imprecise probability estimates. These estimates use convex sets of distributions (or credal sets), rather than a single precise distribution, to describe our uncertainty. The main reasons one could have for using such estimates are (1) to make cautious predictions (or no decision at all) when high uncertainty is detected in the chaining and (2) to make better precise predictions by avoiding biases caused by early decisions in the chaining. We adapt both strategies to the case of the naive credal classifier, showing that these adaptations are computationally efficient. Our experimental results on missing labels, which investigate how reliable these predictions are in both approaches, indicate that our approaches produce relevant cautiousness on those hard-to-predict instances where the precise models fail.
