Yazheng Liu

A Differential Geometric View and Explainability of GNN on Evolving Graphs

Mar 11, 2024

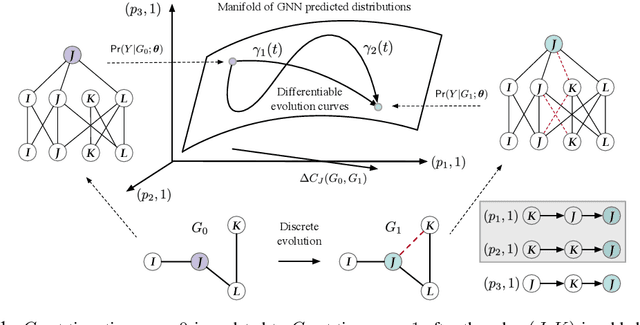

Abstract: Graphs are ubiquitous in social networks and biochemistry, where Graph Neural Networks (GNNs) are the state-of-the-art models for prediction. Graphs can evolve over time, and it is vital to formally model and understand how a trained GNN responds to graph evolution. We propose a smooth parameterization of the GNN-predicted distributions using axiomatic attribution, where the distributions lie on a low-dimensional manifold within a high-dimensional embedding space. We exploit this differential geometric viewpoint to model distributional evolution as smooth curves on the manifold. We reparameterize families of curves on the manifold and design a convex optimization problem to find a unique curve that concisely approximates the distributional evolution for human interpretation. Extensive experiments on node classification, link prediction, and graph classification tasks with evolving graphs demonstrate that the proposed method achieves better sparsity, faithfulness, and intuitiveness than state-of-the-art methods.

Inconsistent Matters: A Knowledge-guided Dual-consistency Network for Multi-modal Rumor Detection

Jun 19, 2023

Abstract: Rumor spreaders increasingly use multimedia content to attract the attention and trust of news consumers. Although quite a few rumor detection models exploit multi-modal data, they seldom consider the inconsistent semantics between images and texts, and rarely spot the inconsistency between post contents and background knowledge. In addition, they commonly assume that all modalities are present and thus cannot handle missing modalities in real-life scenarios. Motivated by the intuition that rumors in social media are more likely to have inconsistent semantics, we propose a novel Knowledge-guided Dual-consistency Network to detect rumors with multimedia content. It uses two consistency detection subnetworks to capture inconsistency at the cross-modal level and the content-knowledge level simultaneously. It also enables robust multi-modal representation learning under different missing-visual-modality conditions, using a special token to discriminate between posts with and without visual modality. Extensive experiments on three public real-world multimedia datasets demonstrate that our framework can outperform state-of-the-art baselines under both complete and incomplete modality conditions. Our code is available at https://github.com/MengzSun/KDCN.

Multi-objective Explanations of GNN Predictions

Nov 29, 2021

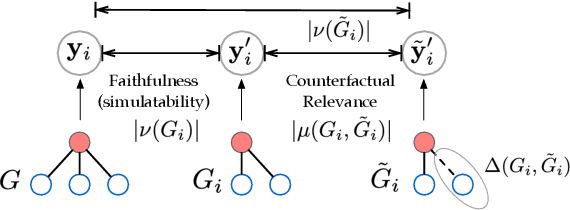

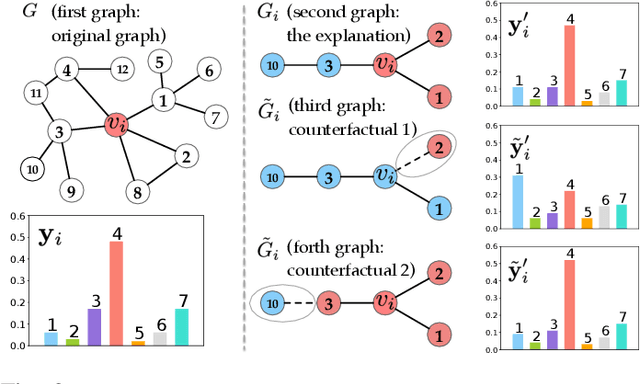

Abstract: Graph Neural Networks (GNNs) have achieved state-of-the-art performance in various high-stakes prediction tasks, but multiple layers of aggregation on graphs with irregular structures make GNNs less interpretable. Prior methods use simpler subgraphs to simulate the full model, or counterfactuals to identify the causes of a prediction. These two families of approaches aim at two distinct objectives, "simulatability" and "counterfactual relevance", but it is not clear how the objectives jointly influence human understanding of an explanation. We design a user study to investigate such joint effects and use the findings to design a multi-objective optimization (MOO) algorithm that finds Pareto optimal explanations well balanced in simulatability and counterfactual relevance. Since the target model can be any GNN variant and may not be accessible due to privacy concerns, we design a search algorithm that uses zeroth-order information without accessing the architecture and parameters of the target model. Quantitative experiments on nine graphs from four applications demonstrate that the Pareto efficient explanations dominate single-objective baselines that use first-order continuous optimization or discrete combinatorial search. The explanations are further evaluated for robustness and sensitivity, showing their capability to reveal convincing causes while remaining cautious about possible confounders. The diverse dominating counterfactuals can certify the feasibility of algorithmic recourse, which can potentially promote algorithmic fairness where humans participate in GNN-based decision-making.
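To make "Pareto optimal" concrete, here is a minimal sketch that filters candidate explanations, each scored on two objectives to be maximized (standing in for simulatability and counterfactual relevance), down to the non-dominated set. The function names and the scores are hypothetical, and this is only the dominance check, not the paper's zeroth-order MOO search.

```python
from typing import List, Tuple


def dominates(a: Tuple[float, float], b: Tuple[float, float]) -> bool:
    """True if score pair a Pareto-dominates b (both objectives maximized):
    a is at least as good on every objective and strictly better on one."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def pareto_front(scores: List[Tuple[float, float]]) -> List[int]:
    """Indices of candidates that no other candidate dominates."""
    return [
        i
        for i, s in enumerate(scores)
        if not any(dominates(t, s) for j, t in enumerate(scores) if j != i)
    ]


# Hypothetical (simulatability, counterfactual relevance) scores
# for four candidate explanations.
candidates = [(0.9, 0.2), (0.6, 0.6), (0.5, 0.5), (0.2, 0.9)]
print(pareto_front(candidates))  # [0, 1, 3]
```

Candidate 2 is dropped because candidate 1 beats it on both objectives; the remaining three each trade one objective against the other, which is the kind of balance the abstract refers to.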

Explaining GNN over Evolving Graphs using Information Flow

Nov 19, 2021

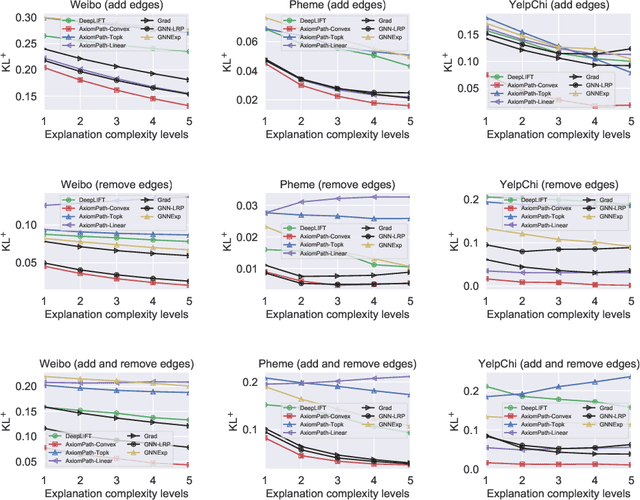

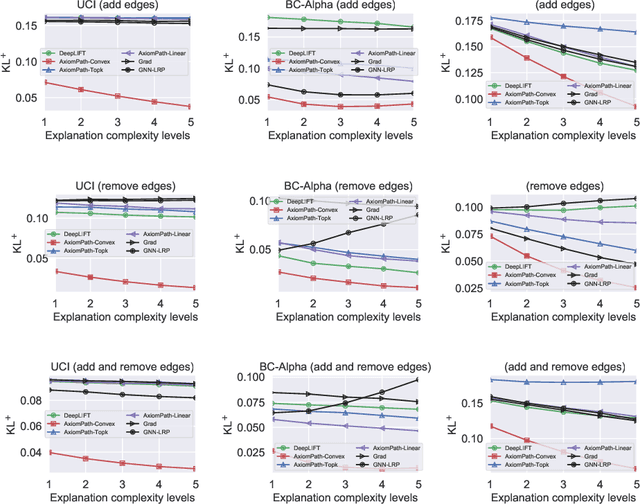

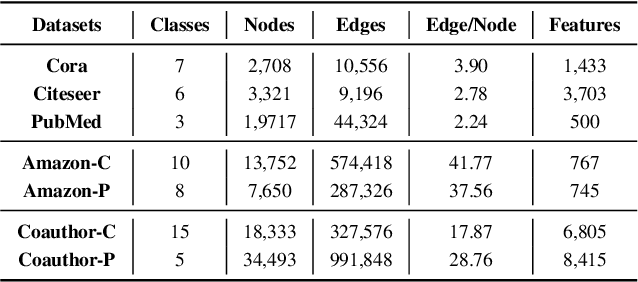

Abstract: Graphs are ubiquitous in many applications, such as social networks, knowledge graphs, and smart grids. Graph neural networks (GNNs) are the current state of the art for these applications, yet they remain obscure to humans. Explaining GNN predictions can add transparency. However, since many graphs are not static but continuously evolving, explaining changes in predictions between two graph snapshots is a different but equally important problem. Prior methods only explain static predictions or generate coarse or irrelevant explanations for dynamic predictions. We define the problem of explaining evolving GNN predictions and propose an axiomatic attribution method to uniquely decompose the change in a prediction to paths on computation graphs. Attributions spread over many paths involving high-degree nodes are still not interpretable, while simply selecting the top important paths can be suboptimal in approximating the change. We formulate a novel convex optimization problem to optimally select the paths that explain the prediction evolution. Theoretically, we prove that the existing method based on Layer-wise Relevance Propagation (LRP) is a special case of the proposed algorithm when the comparison is against an empty graph. Empirically, on seven graph datasets and with a novel metric designed for evaluating explanations of prediction change, we demonstrate the superiority of the proposed approach over existing methods, including LRP, DeepLIFT, and other path selection methods.
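For intuition about decomposing a prediction change into paths, consider a deliberately simplified case: a two-layer GNN with no nonlinearity and scalar weights, y = w2 · A (w1 · A x). There, node v's output is exactly a sum over length-2 paths u → k → v, so the change between two snapshots splits exactly into per-path differences. This sketch assumes that linear toy model and uses hypothetical names (`path_contributions`, `explain_change`); the paper's axiomatic attribution targets general nonlinear GNNs and adds a convex path-selection step, neither of which is shown here.

```python
import itertools

import numpy as np


def path_contributions(A, x, w1, w2, v):
    """Contribution of each length-2 path u -> k -> v to node v's output in the
    toy linear GNN y = w2 * A @ (w1 * A @ x); these sum exactly to y[v]."""
    n = A.shape[0]
    return {
        (u, k, v): w2 * A[v, k] * w1 * A[k, u] * x[u]
        for u, k in itertools.product(range(n), range(n))
    }


def explain_change(A_old, A_new, x, w1, w2, v):
    """Per-path change in node v's output between two graph snapshots,
    keeping only paths whose contribution actually changed."""
    old = path_contributions(A_old, x, w1, w2, v)
    new = path_contributions(A_new, x, w1, w2, v)
    return {p: float(new[p] - old[p]) for p in old if not np.isclose(new[p], old[p])}


# Toy snapshots: self-loops plus edge 0-1, then edge 1-2 is added.
A_old = np.array([[1, 1, 0], [1, 1, 0], [0, 0, 1]], dtype=float)
A_new = np.array([[1, 1, 0], [1, 1, 1], [0, 1, 1]], dtype=float)
x = np.array([1.0, 2.0, 3.0])
w1, w2, v = 0.5, 2.0, 0


def forward(A):
    return w2 * A @ (w1 * A @ x)


y_old, y_new = float(forward(A_old)[v]), float(forward(A_new)[v])
deltas = explain_change(A_old, A_new, x, w1, w2, v)
print(deltas, y_new - y_old)  # {(2, 1, 0): 3.0} 3.0
```

Here the single new edge changes only one path into node 0, and that path accounts for the whole output change. In real graphs with high-degree nodes, many paths change at once, which is where the abstract's convex optimization for selecting a concise subset of paths comes in.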
