Yash Turkar

Adaptive Illumination Control for Robot Perception

Feb 13, 2026Abstract:Robot perception under low light or high dynamic range is usually improved downstream - via more robust feature extraction, image enhancement, or closed-loop exposure control. However, all of these approaches are limited by the image captured these conditions. An alternate approach is to utilize a programmable onboard light that adds to ambient illumination and improves captured images. However, it is not straightforward to predict its impact on image formation. Illumination interacts nonlinearly with depth, surface reflectance, and scene geometry. It can both reveal structure and induce failure modes such as specular highlights and saturation. We introduce Lightning, a closed-loop illumination-control framework for visual SLAM that combines relighting, offline optimization, and imitation learning. This is performed in three stages. First, we train a Co-Located Illumination Decomposition (CLID) relighting model that decomposes a robot observation into an ambient component and a light-contribution field. CLID enables physically consistent synthesis of the same scene under alternative light intensities and thereby creates dense multi-intensity training data without requiring us to repeatedly re-run trajectories. Second, using these synthesized candidates, we formulate an offline Optimal Intensity Schedule (OIS) problem that selects illumination levels over a sequence trading off SLAM-relevant image utility against power consumption and temporal smoothness. Third, we distill this ideal solution into a real-time controller through behavior cloning, producing an Illumination Control Policy (ILC) that generalizes beyond the initial training distribution and runs online on a mobile robot to command discrete light-intensity levels. Across our evaluation, Lightning substantially improves SLAM trajectory robustness while reducing unnecessary illumination power.

Active Illumination Control in Low-Light Environments using NightHawk

Jun 05, 2025Abstract:Subterranean environments such as culverts present significant challenges to robot vision due to dim lighting and lack of distinctive features. Although onboard illumination can help, it introduces issues such as specular reflections, overexposure, and increased power consumption. We propose NightHawk, a framework that combines active illumination with exposure control to optimize image quality in these settings. NightHawk formulates an online Bayesian optimization problem to determine the best light intensity and exposure-time for a given scene. We propose a novel feature detector-based metric to quantify image utility and use it as the cost function for the optimizer. We built NightHawk as an event-triggered recursive optimization pipeline and deployed it on a legged robot navigating a culvert beneath the Erie Canal. Results from field experiments demonstrate improvements in feature detection and matching by 47-197% enabling more reliable visual estimation in challenging lighting conditions.

PANOS: Payload-Aware Navigation in Offroad Scenarios

Sep 25, 2024

Abstract:Nature has evolved humans to walk on different terrains by developing a detailed understanding of their physical characteristics. Similarly, legged robots need to develop their capability to walk on complex terrains with a variety of task-dependent payloads to achieve their goals. However, conventional terrain adaptation methods are susceptible to failure with varying payloads. In this work, we introduce PANOS, a weakly supervised approach that integrates proprioception and exteroception from onboard sensing to achieve a stable gait while walking by a legged robot over various terrains. Our work also provides evidence of its adaptability over varying payloads. We evaluate our method on multiple terrains and payloads using a legged robot. PANOS improves the stability up to 44% without any payload and 53% with 15 lbs payload. We also notice a reduction in the vibration cost of 20% with the payload for various terrain types when compared to state-of-the-art methods.

PQM: A Point Quality Evaluation Metric for Dense Maps

Jun 06, 2023

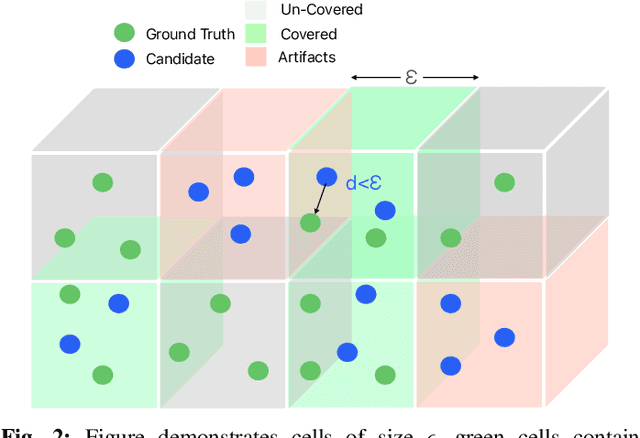

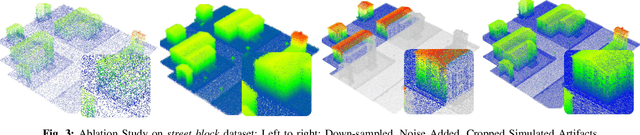

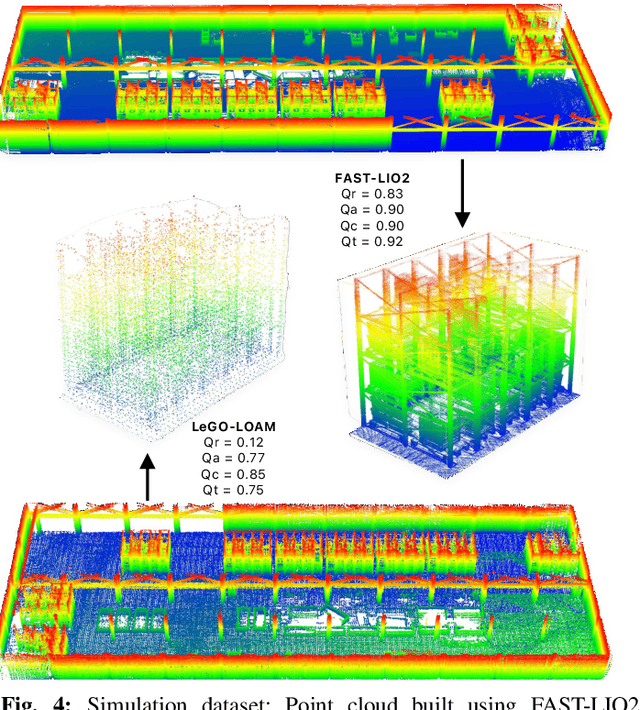

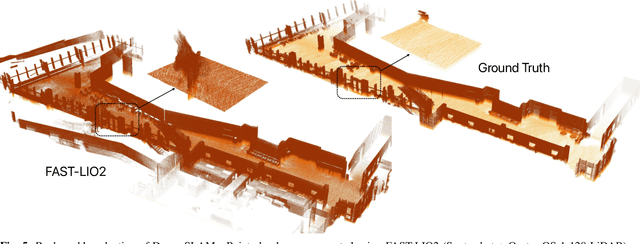

Abstract:LiDAR-based mapping/reconstruction are important for various applications, but evaluating the quality of the dense maps they produce is challenging. The current methods have limitations, including the inability to capture completeness, structural information, and local variations in error. In this paper, we propose a novel point quality evaluation metric (PQM) that consists of four sub-metrics to provide a more comprehensive evaluation of point cloud quality. The completeness sub-metric evaluates the proportion of missing data, the artifact score sub-metric recognizes and characterizes artifacts, the accuracy sub-metric measures registration accuracy, and the resolution sub-metric quantifies point cloud density. Through an ablation study using a prototype dataset, we demonstrate the effectiveness of each of the sub-metrics and compare them to popular point cloud distance measures. Using three LiDAR SLAM systems to generate maps, we evaluate their output map quality and demonstrate the metrics robustness to noise and artifacts. Our implementation of PQM, datasets and detailed documentation on how to integrate with your custom dense mapping pipeline can be found at github.com/droneslab/pqm

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge