Yanglong Yu

Evolutionary Reinforcement Learning via Cooperative Coevolutionary Negatively Correlated Search

Sep 08, 2020

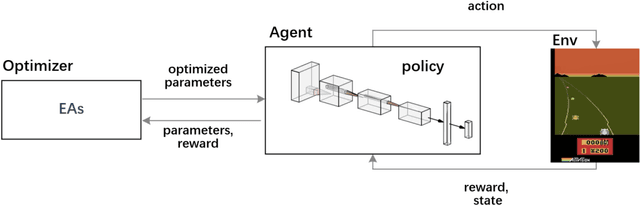

Abstract: Evolutionary algorithms (EAs) have been successfully applied to optimize the policies for Reinforcement Learning (RL) tasks due to their exploration ability. The recently proposed Negatively Correlated Search (NCS) provides a distinct parallel exploration search behavior and is expected to facilitate RL more effectively. Considering that the commonly adopted neural policies usually involve millions of parameters to be optimized, the direct application of NCS to RL may face the great challenge of a large-scale search space. To address this issue, this paper presents an NCS-friendly Cooperative Coevolution (CC) framework to scale up NCS while largely preserving its parallel exploration search behavior. The issue of traditional CC that can deteriorate NCS is also discussed. Empirical studies on 10 popular Atari games show that the proposed method can significantly outperform three state-of-the-art deep RL methods with 50% less computational time by effectively exploring a 1.7 million-dimensional search space.
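
To make the idea of Cooperative Coevolution concrete, the following is a minimal sketch of a generic, textbook-style CC loop with random grouping. It is not the paper's NCS-friendly framework: the toy `sphere` objective stands in for a negated RL return, a simple accept-if-better Gaussian perturbation stands in for NCS, and all names and parameters (`cooperative_coevolution`, `n_groups`, `cycles`, `step`) are hypothetical choices made for illustration.

```python
import random


def sphere(x):
    """Toy objective to minimize (assumed stand-in for a negated RL return)."""
    return sum(v * v for v in x)


def cooperative_coevolution(objective, dim, n_groups=4, cycles=30, step=0.3, seed=0):
    """Generic CC sketch: optimize one variable group at a time.

    The sub-optimizer here is a plain accept-if-better perturbation,
    used only as a placeholder; it is NOT Negatively Correlated Search.
    """
    rng = random.Random(seed)
    best = [rng.uniform(-1.0, 1.0) for _ in range(dim)]
    best_score = objective(best)
    for _ in range(cycles):
        # Random grouping: shuffle the indices and split them into disjoint subsets.
        indices = list(range(dim))
        rng.shuffle(indices)
        groups = [indices[g::n_groups] for g in range(n_groups)]
        for group in groups:
            # Perturb only this group's variables; all other variables keep
            # their current best values and act as the shared context.
            candidate = list(best)
            for i in group:
                candidate[i] += rng.gauss(0.0, step)
            score = objective(candidate)
            if score < best_score:
                best, best_score = candidate, score
    return best, best_score


if __name__ == "__main__":
    solution, value = cooperative_coevolution(sphere, dim=20)
    print(f"final objective value: {value:.4f}")
```

Each sub-problem in this sketch searches only `dim / n_groups` variables at a time, which is the basic mechanism by which CC reduces the effective dimensionality that the underlying search method has to handle.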