Xueqing Shi

Less is More for RAG: Information Gain Pruning for Generator-Aligned Reranking and Evidence Selection

Jan 24, 2026Abstract:Retrieval-augmented generation (RAG) grounds large language models with external evidence, but under a limited context budget, the key challenge is deciding which retrieved passages should be injected. We show that retrieval relevance metrics (e.g., NDCG) correlate weakly with end-to-end QA quality and can even become negatively correlated under multi-passage injection, where redundancy and mild conflicts destabilize generation. We propose \textbf{Information Gain Pruning (IGP)}, a deployment-friendly reranking-and-pruning module that selects evidence using a generator-aligned utility signal and filters weak or harmful passages before truncation, without changing existing budget interfaces. Across five open-domain QA benchmarks and multiple retrievers and generators, IGP consistently improves the quality--cost trade-off. In a representative multi-evidence setting, IGP delivers about +12--20% relative improvement in average F1 while reducing final-stage input tokens by roughly 76--79% compared to retriever-only baselines.

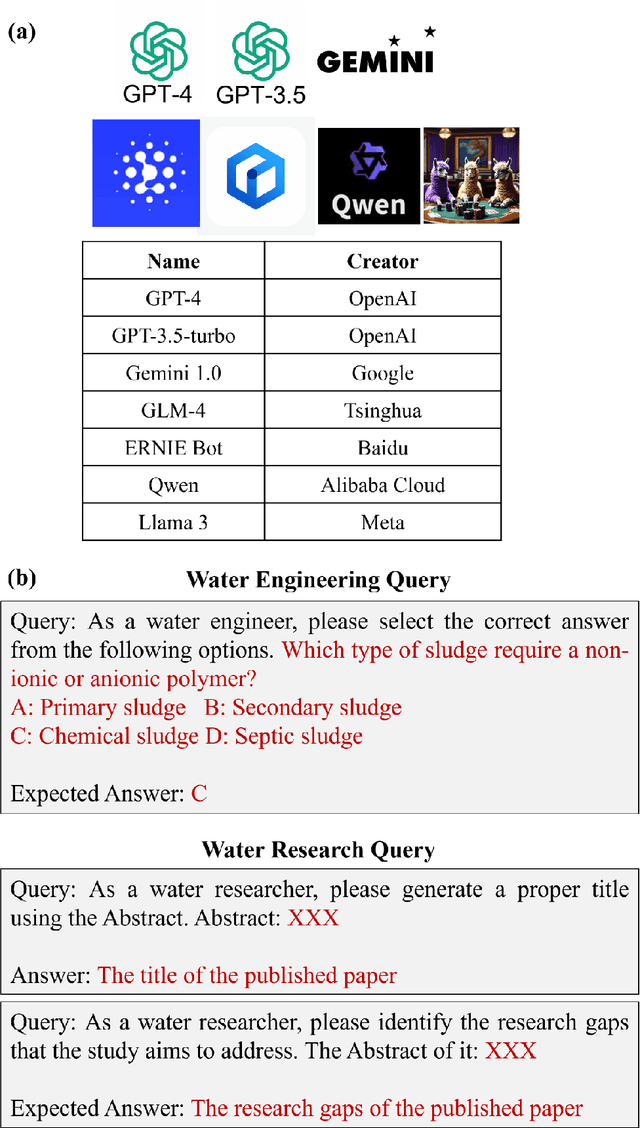

Unlocking the Potential: Benchmarking Large Language Models in Water Engineering and Research

Jul 22, 2024

Abstract:Recent advancements in Large Language Models (LLMs) have sparked interest in their potential applications across various fields. This paper embarked on a pivotal inquiry: Can existing LLMs effectively serve as "water expert models" for water engineering and research tasks? This study was the first to evaluate LLMs' contributions across various water engineering and research tasks by establishing a domain-specific benchmark suite, namely, WaterER. Herein, we prepared 983 tasks related to water engineering and research, categorized into "wastewater treatment", "environmental restoration", "drinking water treatment and distribution", "sanitation", "anaerobic digestion" and "contaminants assessment". We evaluated the performance of seven LLMs (i.e., GPT-4, GPT-3.5, Gemini, GLM-4, ERNIE, QWEN and Llama3) on these tasks. We highlighted the strengths of GPT-4 in handling diverse and complex tasks of water engineering and water research, the specialized capabilities of Gemini in academic contexts, Llama3's strongest capacity to answer Chinese water engineering questions and the competitive performance of Chinese-oriented models like GLM-4, ERNIE and QWEN in some water engineering tasks. More specifically, current LLMs excelled particularly in generating precise research gaps for papers on "contaminants and related water quality monitoring and assessment". Additionally, they were more adept at creating appropriate titles for research papers on "treatment processes for wastewaters", "environmental restoration", and "drinking water treatment". Overall, this study pioneered evaluating LLMs in water engineering and research by introducing the WaterER benchmark to assess the trustworthiness of their predictions. This standardized evaluation framework would also drive future advancements in LLM technology by using targeting datasets, propelling these models towards becoming true "water expert".

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge