Xudong Jian

Automating Traffic Monitoring with SHM Sensor Networks via Vision-Supervised Deep Learning

Jun 23, 2025Abstract:Bridges, as critical components of civil infrastructure, are increasingly affected by deterioration, making reliable traffic monitoring essential for assessing their remaining service life. Among operational loads, traffic load plays a pivotal role, and recent advances in deep learning - particularly in computer vision (CV) - have enabled progress toward continuous, automated monitoring. However, CV-based approaches suffer from limitations, including privacy concerns and sensitivity to lighting conditions, while traditional non-vision-based methods often lack flexibility in deployment and validation. To bridge this gap, we propose a fully automated deep-learning pipeline for continuous traffic monitoring using structural health monitoring (SHM) sensor networks. Our approach integrates CV-assisted high-resolution dataset generation with supervised training and inference, leveraging graph neural networks (GNNs) to capture the spatial structure and interdependence of sensor data. By transferring knowledge from CV outputs to SHM sensors, the proposed framework enables sensor networks to achieve comparable accuracy of vision-based systems, with minimal human intervention. Applied to accelerometer and strain gauge data in a real-world case study, the model achieves state-of-the-art performance, with classification accuracies of 99% for light vehicles and 94% for heavy vehicles.

Modal Decomposition and Identification for a Population of Structures Using Physics-Informed Graph Neural Networks and Transformers

May 06, 2025Abstract:Modal identification is crucial for structural health monitoring and structural control, providing critical insights into structural dynamics and performance. This study presents a novel deep learning framework that integrates graph neural networks (GNNs), transformers, and a physics-informed loss function to achieve modal decomposition and identification across a population of structures. The transformer module decomposes multi-degrees-of-freedom (MDOF) structural dynamic measurements into single-degree-of-freedom (SDOF) modal responses, facilitating the identification of natural frequencies and damping ratios. Concurrently, the GNN captures the structural configurations and identifies mode shapes corresponding to the decomposed SDOF modal responses. The proposed model is trained in a purely physics-informed and unsupervised manner, leveraging modal decomposition theory and the independence of structural modes to guide learning without the need for labeled data. Validation through numerical simulations and laboratory experiments demonstrates its effectiveness in accurately decomposing dynamic responses and identifying modal properties from sparse structural dynamic measurements, regardless of variations in external loads or structural configurations. Comparative analyses against established modal identification techniques and model variations further underscore its superior performance, positioning it as a favorable approach for population-based structural health monitoring.

Transferring self-supervised pre-trained models for SHM data anomaly detection with scarce labeled data

Dec 05, 2024Abstract:Structural health monitoring (SHM) has experienced significant advancements in recent decades, accumulating massive monitoring data. Data anomalies inevitably exist in monitoring data, posing significant challenges to their effective utilization. Recently, deep learning has emerged as an efficient and effective approach for anomaly detection in bridge SHM. Despite its progress, many deep learning models require large amounts of labeled data for training. The process of labeling data, however, is labor-intensive, time-consuming, and often impractical for large-scale SHM datasets. To address these challenges, this work explores the use of self-supervised learning (SSL), an emerging paradigm that combines unsupervised pre-training and supervised fine-tuning. The SSL-based framework aims to learn from only a very small quantity of labeled data by fine-tuning, while making the best use of the vast amount of unlabeled SHM data by pre-training. Mainstream SSL methods are compared and validated on the SHM data of two in-service bridges. Comparative analysis demonstrates that SSL techniques boost data anomaly detection performance, achieving increased F1 scores compared to conventional supervised training, especially given a very limited amount of labeled data. This work manifests the effectiveness and superiority of SSL techniques on large-scale SHM data, providing an efficient tool for preliminary anomaly detection with scarce label information.

Neural Modal ODEs: Integrating Physics-based Modeling with Neural ODEs for Modeling High Dimensional Monitored Structures

Jul 16, 2022

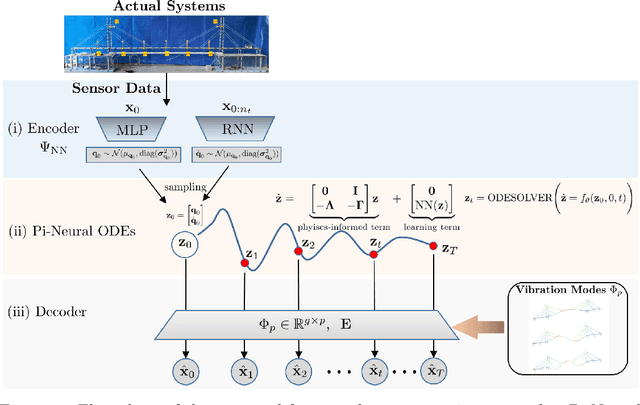

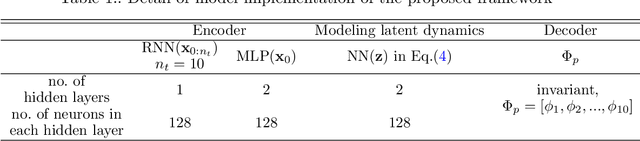

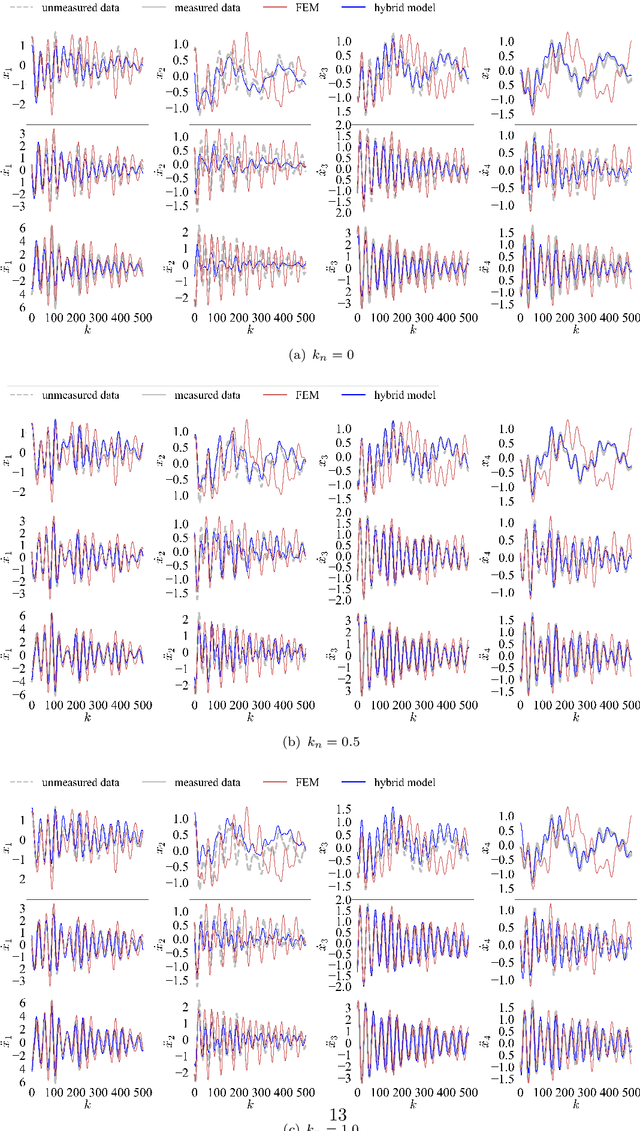

Abstract:The order/dimension of models derived on the basis of data is commonly restricted by the number of observations, or in the context of monitored systems, sensing nodes. This is particularly true for structural systems (e.g. civil or mechanical structures), which are typically high-dimensional in nature. In the scope of physics-informed machine learning, this paper proposes a framework - termed Neural Modal ODEs - to integrate physics-based modeling with deep learning (particularly, Neural Ordinary Differential Equations -- Neural ODEs) for modeling the dynamics of monitored and high-dimensional engineered systems. In this initiating exploration, we restrict ourselves to linear or mildly nonlinear systems. We propose an architecture that couples a dynamic version of variational autoencoders with physics-informed Neural ODEs (Pi-Neural ODEs). An encoder, as a part of the autoencoder, learns the abstract mappings from the first few items of observational data to the initial values of the latent variables, which drive the learning of embedded dynamics via physics-informed Neural ODEs, imposing a \textit{modal model} structure to that latent space. The decoder of the proposed model adopts the eigenmodes derived from an eigen-analysis applied to the linearized portion of a physics-based model: a process implicitly carrying the spatial relationship between degrees-of-freedom (DOFs). The framework is validated on a numerical example, and an experimental dataset of a scaled cable-stayed bridge, where the learned hybrid model is shown to outperform a purely physics-based approach to modeling. We further show the functionality of the proposed scheme within the context of virtual sensing, i.e., the recovery of generalized response quantities in unmeasured DOFs from spatially sparse data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge