Ximena Gutierrez-Vasques

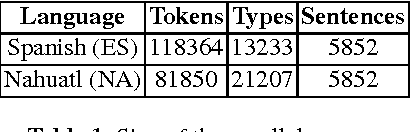

Comparing morphological complexity of Spanish, Otomi and Nahuatl

Aug 13, 2018

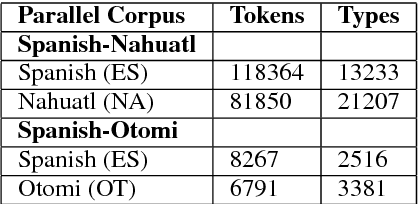

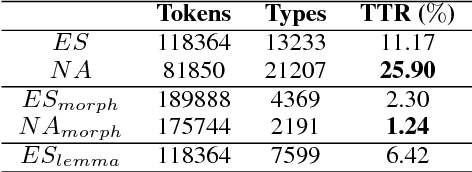

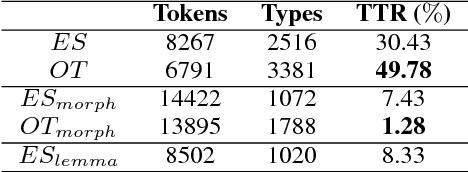

Abstract: We use two small parallel corpora to compare the morphological complexity of Spanish, Otomi and Nahuatl. These languages belong to different linguistic families, and the latter two are low-resource. We take into account two quantitative criteria: on the one hand, the distribution of types over tokens in a corpus; on the other, perplexity and entropy as indicators of word structure predictability. We show that a language can be complex in terms of how many different morphological word forms it can produce, yet less complex in terms of the predictability of the internal structure of its words.
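As a rough illustration of the two criteria named in the abstract, the sketch below computes a type-token ratio and a Shannon entropy with its corresponding perplexity. It is a simplification under stated assumptions: the function names are hypothetical, and the entropy is taken over a plain unigram distribution of symbols, which is not necessarily the language model used in the paper.

```python
import math
from collections import Counter


def type_token_ratio(tokens):
    """Distinct word forms (types) divided by total word occurrences (tokens)."""
    if not tokens:
        return 0.0
    return len(set(tokens)) / len(tokens)


def unigram_entropy(symbols):
    """Shannon entropy, in bits, of the empirical distribution of symbols.

    Assumption: a unigram estimate over characters or morphs; the paper may
    rely on a richer model of word-internal structure.
    """
    counts = Counter(symbols)
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def perplexity(entropy_bits):
    """Perplexity corresponding to an entropy expressed in bits."""
    return 2 ** entropy_bits
```

A higher type-token ratio suggests that a language produces more distinct word forms for the same amount of text, while a lower entropy suggests that the pieces inside its words are easier to predict. The two measures can therefore point in different directions for the same language.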

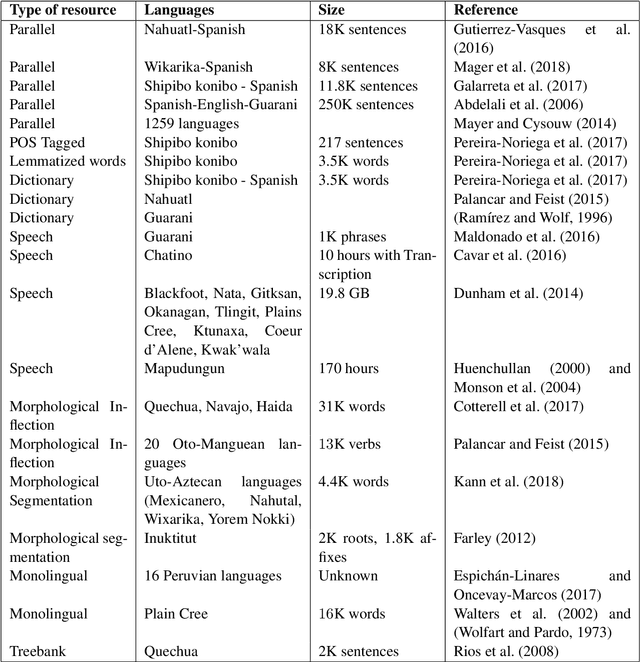

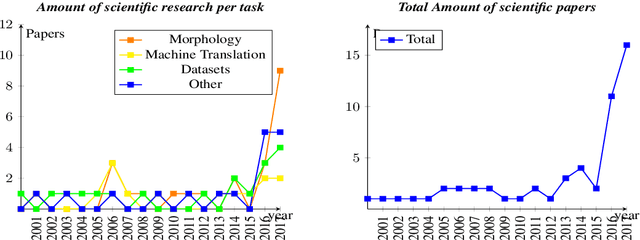

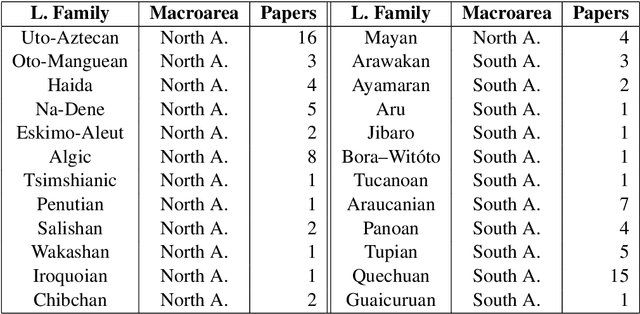

Challenges of language technologies for the indigenous languages of the Americas

Jun 12, 2018

Abstract: Indigenous languages of the American continent are highly diverse. However, they have received little attention from the technological perspective. In this paper, we review the research, the digital resources and the available NLP systems that focus on these languages. We present the main challenges and research questions that arise when distant languages and low-resource scenarios are faced. We would like to encourage NLP research in linguistically rich and diverse areas like the Americas.

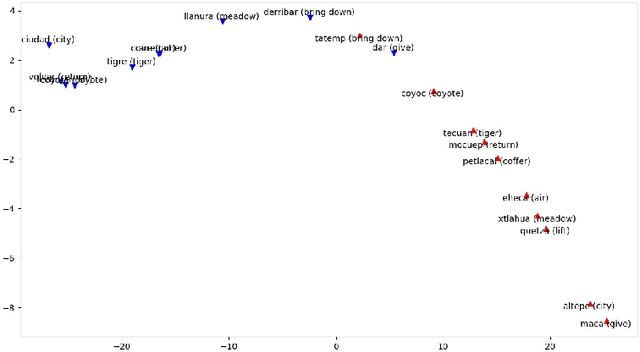

Low-resource bilingual lexicon extraction using graph based word embeddings

Oct 06, 2017

Abstract: In this work we focus on the task of automatically extracting a bilingual lexicon for the language pair Spanish-Nahuatl. This is a low-resource setting where only a small parallel corpus is available. Most of the downstream methods do not work well under low-resource conditions. This is especially true for approaches that use vector representations like Word2Vec. Our proposal is to construct bilingual word vectors from a graph. This graph is generated using translation pairs obtained from an unsupervised word alignment method. We show that, in a low-resource setting, this type of vector is successful in representing words in a bilingual semantic space. Moreover, when a linear transformation is applied to translate words from one language to another, our graph-based representations considerably outperform the popular setting that uses Word2Vec.
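The linear-transformation step mentioned in the abstract can be sketched as follows. This is a minimal illustration, assuming the map is fit by ordinary least squares on a seed dictionary and that translation picks the nearest target vector by cosine similarity; the abstract does not confirm either choice. The function names are hypothetical, and the code does not cover how the vectors are built from the graph.

```python
import numpy as np


def fit_linear_map(src_vecs, tgt_vecs):
    """Least-squares W such that src_vecs @ W approximates tgt_vecs.

    Row i of src_vecs and row i of tgt_vecs are assumed to be the vectors
    of a known translation pair (the seed dictionary).
    """
    W, *_ = np.linalg.lstsq(src_vecs, tgt_vecs, rcond=None)
    return W


def translate(src_vec, W, tgt_matrix, tgt_words):
    """Project a source vector and return the closest target word (cosine)."""
    projected = src_vec @ W
    norms = np.linalg.norm(tgt_matrix, axis=1) * np.linalg.norm(projected)
    # Guard against division by zero for all-zero vectors.
    sims = (tgt_matrix @ projected) / np.where(norms == 0, 1.0, norms)
    return tgt_words[int(np.argmax(sims))]
```

In practice, `fit_linear_map` would be called once on the vectors of the seed translation pairs, and `translate` would then be applied to the vectors of source words outside that seed set.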
