Xiaozheng Xie

GuidedNet: Semi-Supervised Multi-Organ Segmentation via Labeled Data Guide Unlabeled Data

Aug 09, 2024

Abstract:Semi-supervised multi-organ medical image segmentation aids physicians in improving disease diagnosis and treatment planning and reduces the time and effort required for organ annotation. Existing state-of-the-art methods train the labeled data with ground truths and train the unlabeled data with pseudo-labels. However, the two training flows are separate, which does not reflect the interrelationship between labeled and unlabeled data. To address this issue, we propose a semi-supervised multi-organ segmentation method called GuidedNet, which leverages the knowledge from labeled data to guide the training of unlabeled data. The primary goals of this study are to improve the quality of pseudo-labels for unlabeled data and to enhance the network's learning capability for both small and complex organs. A key concept is that voxel features from labeled and unlabeled data that are close to each other in the feature space are more likely to belong to the same class. On this basis, a 3D Consistent Gaussian Mixture Model (3D-CGMM) is designed to leverage the feature distributions from labeled data to rectify the generated pseudo-labels. Furthermore, we introduce a Knowledge Transfer Cross Pseudo Supervision (KT-CPS) strategy, which leverages the prior knowledge obtained from the labeled data to guide the training of the unlabeled data, thereby improving the segmentation accuracy for both small and complex organs. Extensive experiments on two public datasets, FLARE22 and AMOS, demonstrated that GuidedNet is capable of achieving state-of-the-art performance.

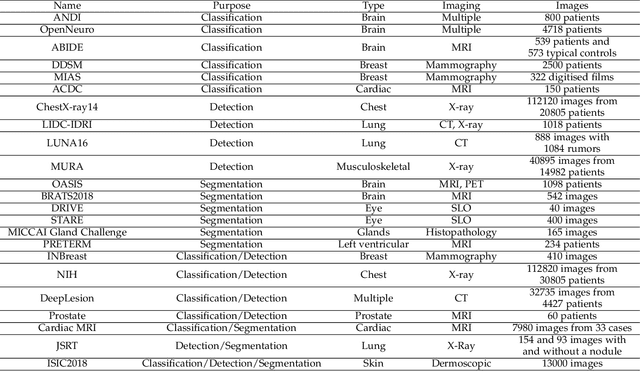

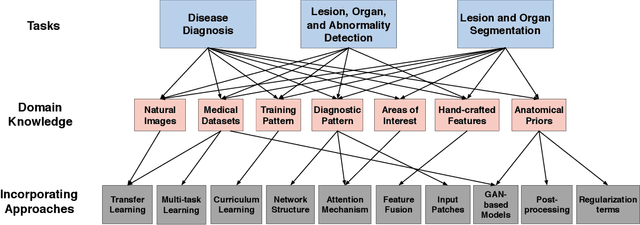

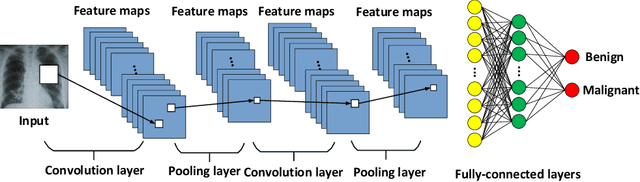

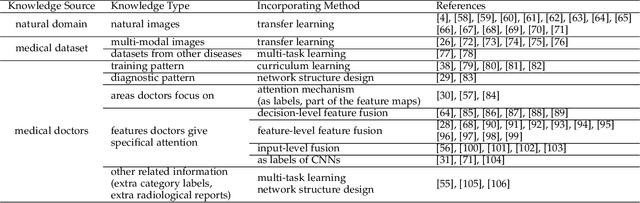

A Survey on Domain Knowledge Powered Deep Learning for Medical Image Analysis

Apr 28, 2020

Abstract:Although deep learning models like CNNs have achieved great success in medical image analysis, small-sized medical datasets remain the major bottleneck in this area. To address this problem, researchers have started looking for external information beyond the currently available medical datasets. Traditional approaches generally leverage the information from natural images. More recent works utilize the domain knowledge from medical doctors, by letting networks either resemble how they are trained, mimic their diagnostic patterns, or focus on the features or areas they particularly pay attention to. In this survey, we summarize the current progress on introducing medical domain knowledge in deep learning models for various tasks like disease diagnosis, lesion, organ and abnormality detection, and lesion and organ segmentation. For each type of task, we systematically categorize the different kinds of medical domain knowledge that have been utilized and the corresponding integrating methods. We end with a summary of challenges, open problems, and directions for future research.
