Xiangming Jiang

Bayesian Layer Graph Convolutional Network for Hyperspectral Image Classification

Nov 14, 2022

Abstract: In recent years, research on hyperspectral image (HSI) classification has made continuous progress by introducing deep network models, and graph convolutional network (GCN) based models have recently shown impressive performance. However, these deep learning frameworks, which are based on point estimation, suffer from poor generalization and cannot quantify the uncertainty of their classification results. On the other hand, simply applying a Bayesian neural network (BNN), which is based on distribution estimation, to HSI classification fails to achieve high accuracy because of its large number of parameters. In this paper, we design a Bayesian layer that can be inserted into point-estimation-based neural networks, and propose a Bayesian Layer Graph Convolutional Network (BLGCN) model that combines it with graph convolution operations; the model can effectively extract graph information and estimate the uncertainty of classification results. Moreover, a Generative Adversarial Network (GAN) is built to address the sample imbalance problem of HSI datasets. Finally, we design a dynamic control training strategy based on the confidence interval of the classification results, which terminates training early when the confidence interval reaches a preset threshold. The experimental results show that our model achieves a balance between high classification accuracy and strong generalization. In addition, it can quantify the uncertainty of the classification results.
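The early-termination rule described in the abstract can be illustrated with a minimal sketch. The abstract does not say how the confidence interval is computed, so everything here is an assumption made for illustration: the interval is a normal-approximation interval for the mean of several stochastic prediction scores, "reaching the threshold" is read as the interval half-width falling to or below it, and `run_epoch` is a hypothetical callback rather than part of the BLGCN code.

```python
import math
import statistics


def ci_half_width(samples, z=1.96):
    """Half-width of a normal-approximation confidence interval for the mean.

    Assumption: `samples` are scores (e.g. accuracies or class probabilities)
    from repeated stochastic forward passes; z=1.96 gives roughly 95% coverage.
    """
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    return z * statistics.stdev(samples) / math.sqrt(len(samples))


def should_stop(samples, threshold, z=1.96):
    """Return True once the interval is at least as narrow as the threshold."""
    return ci_half_width(samples, z) <= threshold


def train_with_dynamic_control(run_epoch, threshold, max_epochs=100):
    """Train until the interval is narrow enough or max_epochs is reached.

    `run_epoch(epoch)` is a hypothetical callback that trains for one epoch
    and returns a list of sampled scores. Returns the last epoch that ran.
    """
    for epoch in range(1, max_epochs + 1):
        samples = run_epoch(epoch)
        if should_stop(samples, threshold):
            return epoch
    return max_epochs
```

In this reading, a tighter threshold trades longer training for more certain predictions; the paper's actual criterion may differ.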

Towards Consistency and Complementarity: A Multiview Graph Information Bottleneck Approach

Oct 11, 2022

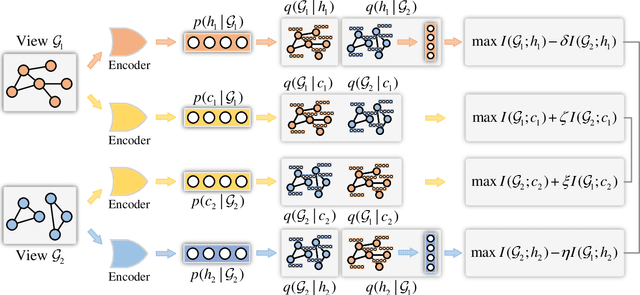

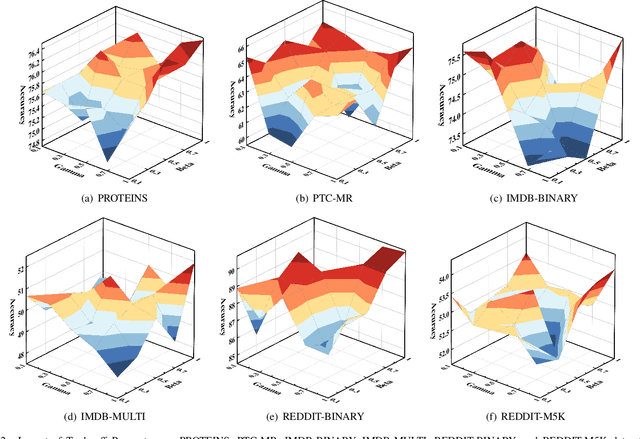

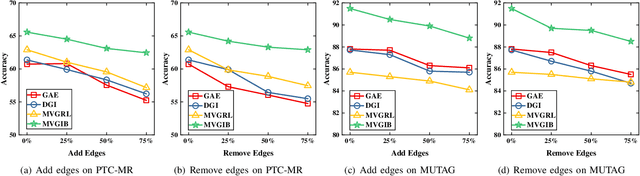

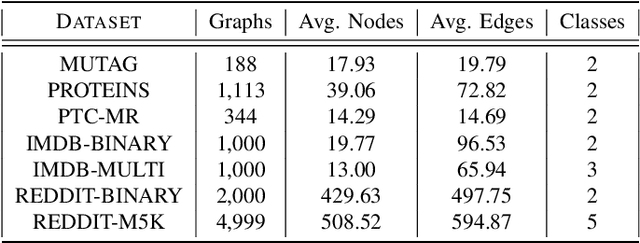

Abstract: Empirical studies of Graph Neural Networks (GNNs) typically take the original node features and adjacency relationships as a single-view input, ignoring the rich information in multiple graph views. To address this issue, multiview graph analysis frameworks have been developed to fuse graph information across views. How to model and integrate shared (i.e., consistent) and view-specific (i.e., complementary) information is a key issue in multiview graph analysis. In this paper, we propose a novel Multiview Variational Graph Information Bottleneck (MVGIB) principle to maximize the agreement among common representations and the disagreement among view-specific representations. Under this principle, we formulate the common and view-specific information bottleneck objectives across multiple views using constraints from mutual information. However, these objectives are hard to optimize directly because the mutual information is computationally intractable. To tackle this challenge, we derive variational lower and upper bounds on the mutual information terms, and then optimize these bounds instead to find approximate solutions to the information objectives. Extensive experiments on graph benchmark datasets demonstrate the superior effectiveness of the proposed method.
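For orientation, the generic single-view information bottleneck and its usual variational bounds are sketched below. This is the standard textbook form, not the MVGIB objective itself, which the abstract does not state; the symbols $X$ (input), $Y$ (target), $Z$ (representation), the trade-off weight $\beta$, the variational decoder $q(y \mid z)$, and the variational prior $r(z)$ are notation assumed here for illustration.

$$
\max_{Z}\; I(Z;Y) - \beta\, I(Z;X)
$$

$$
I(Z;Y) \;\ge\; \mathbb{E}_{p(y,z)}\big[\log q(y \mid z)\big] + H(Y),
\qquad
I(Z;X) \;\le\; \mathbb{E}_{p(x)}\big[\mathrm{KL}\big(p(z \mid x)\,\|\,r(z)\big)\big]
$$

Substituting the lower bound for the first term and the upper bound for the second yields a tractable lower bound on the objective, which is the general pattern the abstract describes.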
